In Today’s Issue:

🎓 Elite university students are secretly feeding seminar questions into AI chatbots

⚡ Nearly half of planned U.S. AI data center projects are being delayed or canceled

🧬 Anthropic drops $400 million to acquire Coefficient Bio

⚠️ Iran threatens to destroy the massive $30 billion Stargate AI data center in Abu Dhabi

💼 Sam Altman is pushing for a rapid 2026 OpenAI IPO

✨ And more AI goodness…

Dear Readers,

Twenty students walk into a Yale seminar, and for the first time in academic history, they all have the same answer, because they're all secretly asking the same chatbot. Today's featured story digs into how AI is flattening independent thought in classrooms across elite universities, and why that should worry everyone, not just professors.

But the homogenization of ideas is just one thread in a much bigger picture: the AI boom itself is running into hard physical limits, with nearly half of planned U.S. data center builds delayed or canceled because there simply isn't enough power infrastructure to keep up. Anthropic, meanwhile, is making a $400 million bet that AI's next frontier is biotech. Iran is threatening to destroy the $30 billion Stargate facility in Abu Dhabi, dragging AI infrastructure straight into geopolitical crosshairs. And inside OpenAI, Sam Altman is racing toward an IPO while his own CFO quietly pumps the brakes.

From classrooms to boardrooms to conflicts, AI is reshaping the rules faster than anyone can write them, so let's dig in.

Disclaimer

Our coverage of AI developments in the context of the Iran conflict is intended to be strictly informational and analytical. We do not seek to take sides, promote any political agenda, or advocate for any party involved.

Our goal is to present verified information, relevant context, and technological implications as accurately and objectively as possible. Where analysis is included, it is clearly separated from factual reporting.

We remain committed to fairness, balance, and independence in our reporting, especially in complex and sensitive geopolitical situations.

All the best,

Kim Isenberg

⚡ AI boom hits power limits

Not looking good: Despite massive spending by Alphabet, Amazon, Meta, and Microsoft, nearly half of U.S. AI data center projects are being delayed or canceled because of shortages in critical power infrastructure such as transformers and batteries. Lead times have stretched from about 2 years to as much as 5 years, and only one-third of the 12 GW of capacity expected for 2026 is actually under construction.

Even trillions in AI investment can't scale without electricity. The shortage is forcing continued reliance on imports from China and exposing major supply chain vulnerabilities that could slow the entire AI revolution. Energy is, and will remain, the biggest bottleneck for AI.

🚀 Anthropic Expands Into AI Biotech

Anthropic just made a bold $400M move, acquiring Coefficient Bio to supercharge its push into healthcare and life sciences. The tiny startup (fewer than 10 people) brings powerful AI tools for drug discovery, clinical strategy, and R&D, which are now folded into Anthropic's growing biotech stack alongside partners like Sanofi and Novo Nordisk.

AI companies aren't just building models; they're vertically integrating into industries like biotech, aiming to automate everything from research planning to regulatory workflows and unlocking faster, cheaper innovation. And if you have read Dario Amodei's essay "Machines of Loving Grace" carefully, you also know that biology plays a significant role in it.

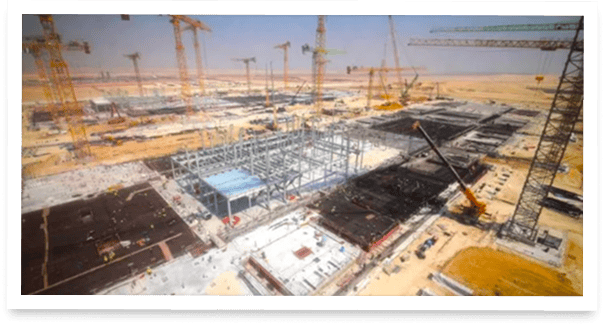

⚠️ Iran Targets OpenAI's Stargate

Iran has threatened to destroy the $30B Stargate AI data center in Abu Dhabi, signaling a sharp escalation from traditional military conflict into tech infrastructure warfare. Backed by major global firms and designed as a 5 GW AI powerhouse, the project represents critical digital infrastructure, making it a high-stakes geopolitical target with potential ripple effects across global tech and economies. The current war demonstrates how vulnerable the tech industry has become.

Google DeepMind research shows that AI agents are already being systematically manipulated through hidden attack vectors, invisible to humans, embedded in web content, images, and documents.

Current defenses fail to detect or prevent these attacks, creating a significant and largely invisible security risk across agentic systems.

Superintelligence exclusive interview with Magic Patterns CEO Alexander Danilowicz

Everyone Sounds the Same Now

The Takeaway

👉 Students at elite universities are using AI chatbots in real time during seminars, feeding professors' questions into models and presenting the output as their own analysis, leading to increasingly uniform classroom discussions.

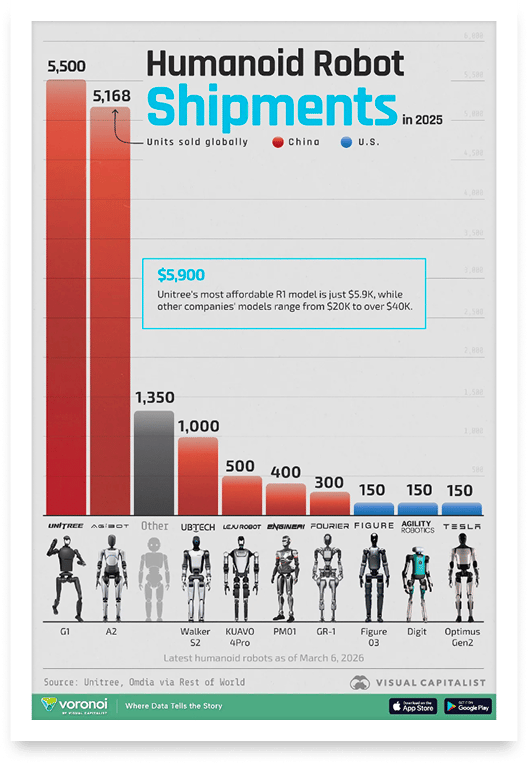

👉 Research published in Trends in Cognitive Sciences shows that large language models systematically homogenize human expression across language, perspective, and reasoning, compounding over time as AI-generated text feeds back into training data.

👉 Professors are responding by shifting to handwritten in-class essays, oral exams, and pop quizzes, essentially returning to analog methods because AI detection tools remain unreliable.

👉 The deeper risk is developmental: students who never practice independent reasoning during their formative years may graduate without the cognitive skills needed for creative problem-solving, critical analysis, or challenging mainstream ideas.

College classrooms used to be places where 20 students brought 20 different perspectives. Now they're bringing the same one. A CNN investigation at Yale University reveals a growing trend: students are secretly feeding professors' questions into chatbots during seminars, then parroting the output as their own thoughts. The result? Discussions that sound eerily uniform.

A paper published in March in Trends in Cognitive Sciences confirms the pattern, finding that large language models systematically flatten human expression across three dimensions: language, perspective, and reasoning. An MIT Media Lab experiment added another alarming data point: students who used ChatGPT for essay writing showed measurably less brain activity than those who didn't. Professors are now fighting back with handwritten in-class essays, oral exams, and pop quizzes designed to catch students who outsourced their reading to a chatbot. The real concern isn't just cheating. It's that an entire generation may never develop the cognitive muscles needed to think independently.

As one Yale student put it, she'd rather tell her professor she doesn't understand than let a machine fake understanding for her. That takes guts, and it's exactly the kind of thinking no model can replicate. The research makes one thing very clear: education and learning need to be fundamentally rethought. Education as we have practiced it so far is no longer up to date, because AI is here to stay.

Why it matters: If students outsource reasoning to AI during the years when critical thinking skills are actively forming, the long-term consequences for innovation, independent thought, and democratic discourse could be severe. This isn't just an education problem; it's a society-wide challenge with implications for every industry that depends on original thinking.

Sources:

🔗 https://edition.cnn.com/2026/04/04/health/ai-impact-college-student-thinking-wellness

🔗 https://www.cell.com/trends/cognitive-sciences/abstract/S1364-6613(26)00003-3

Speak naturally. Send without fixing.

Wispr Flow turns your voice into clean, professional text you can send the moment you stop talking. Not rough transcription you have to clean up. Actual polished text, ready for email, Slack, or any app.

Reid Hoffman sends 89% of his messages with zero edits using Flow. Millions of people worldwide have made it part of how they work, including teams at OpenAI, Vercel, and Clay.

Speak the way you think. Go on tangents. Change your mind mid-sentence. Flow strips the filler, fixes the grammar, and gives you text that reads like you spent five minutes writing it.

Works on Mac, Windows, iPhone, and now Android, where it's free and unlimited during launch.

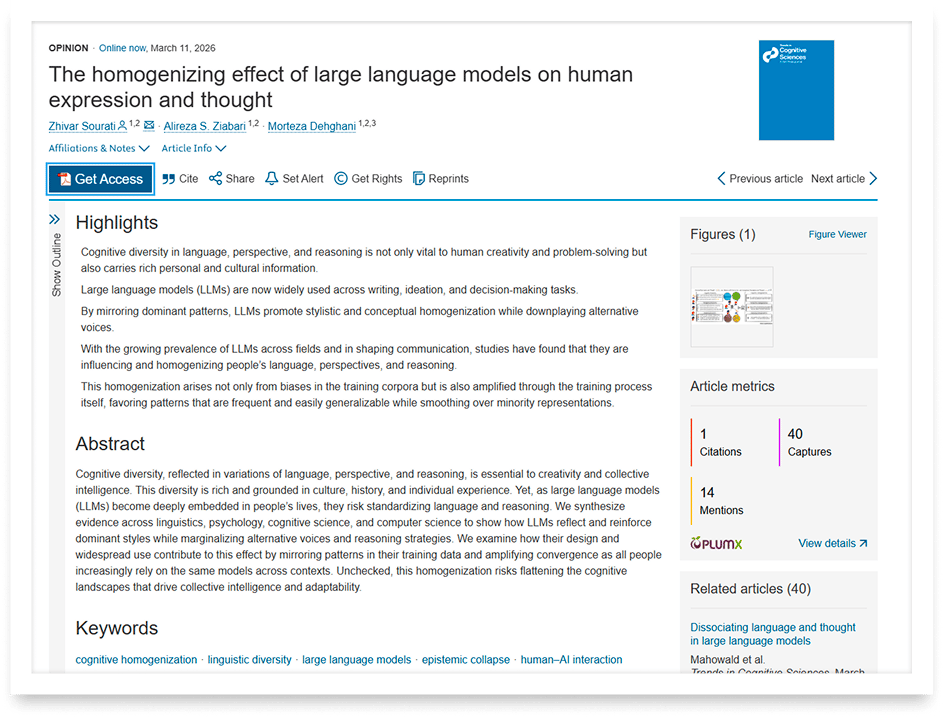

The Chinese company Unitree sells by far the most humanoid robots. It will be interesting to see whether Tesla and Figure can catch up soon.

OpenAI's IPO Power Struggle

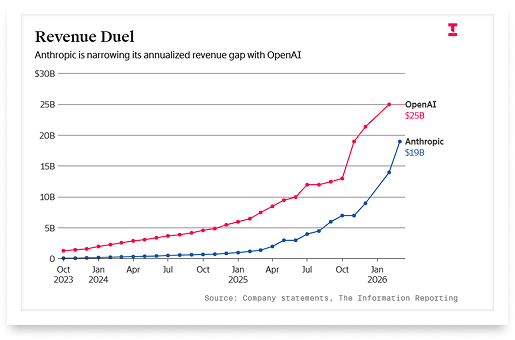

Sam Altman wants to take OpenAI public as early as Q4 2026. His own CFO isn't so sure that's a good idea. According to reporting by The Information, Sarah Friar has privately told colleagues she doesn't believe the company will be ready for an IPO this year, pointing to massive spending commitments, slowing revenue growth, and a mountain of organizational work still ahead.

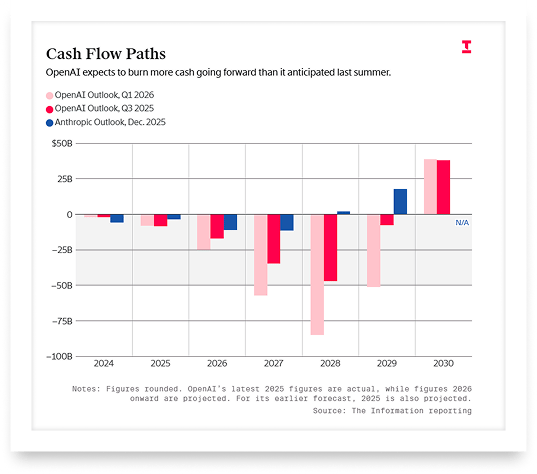

The tension is real: Friar was reportedly excluded from key financial discussions, including meetings with major investors about server procurement. In an unusual structural shift, she no longer reports directly to Altman but instead to Fidji Simo, the head of applications. Meanwhile, OpenAI has committed over $600 billion in cloud spending over the next five years and warned investors that its cash burn through 2030 will be more than double previous forecasts, potentially exceeding $200 billion.

Adding to the urgency: Anthropic is in parallel discussions about going public as early as Q4 2026, and Altman reportedly wants to beat them to it. Altman and Friar issued a joint statement insisting they are aligned, but the cracks are hard to ignore. Can the most capital-intensive company in tech history sprint to Wall Street while its own finance chief is pumping the brakes?

Are You Ready to Actually Retire?

Knowing when to retire means knowing what it costs, how long your money needs to last, and where the income comes from. When to Retire: A Quick and Easy Planning Guide helps investors with $1,000,000 or more work through all of it.