Trustworthy AI Is Really About Delegated Agency

The Wrong Mental Model

Most discussion of trustworthy AI still begins from the wrong premise. We talk as if AI were simply another kind of software: a tool that produces outputs, supports human work, and can be judged by criteria such as accuracy, robustness, fairness, and compliance. Those things matter, but they no longer get to the heart of the issue. The systems now entering organizations do more than generate answers. They observe, remember, recommend, initiate, and sometimes act. Even when a human remains formally in charge, the system may still shape what that human sees, what feels worth considering, and what happens next. The old language of software quality is starting to feel too thin for what is actually being deployed.

That is why the central question is no longer just whether an AI system works. It is what sort of power it has been given. Who or what can it see? What does it remember? What can it set in motion? How far do its judgments travel? Trustworthy AI, in other words, is not mainly about software in the familiar sense. It is about delegated agency.

That may sound abstract, but it names something concrete. Organizations are not merely buying tools. They are handing over pieces of perception, analysis, judgment, and action to systems that do not think or err in human ways, yet can still have large human consequences. The issue is not whether the machine has intentions. The issue is that it has reach. Once a system has reach, governance becomes a question of authority, not just performance.

The Cognitive Light Cone

A useful way to think about this comes from the biologist Michael Levin and his notion of the cognitive light cone. In physics, a light cone marks the boundary of what can be affected across space and time. Levin adapts the metaphor to cognition. The question becomes: within what horizon can an agent sense the world, hold information, model possibilities, and influence outcomes? A bacterium has a tiny horizon. A dog moves through a larger one, with memory, anticipation, and a wider behavioural repertoire. Human beings operate on a broader scale still. We plan for distant futures, act through institutions, and produce consequences far from the moment in which decisions are made.

The point is not simply that some agents are smarter than others. It is that they differ in the scope of consequences they can produce. That is what makes the idea useful for AI. Once an AI system is connected to company data, equipped with memory, linked to business systems, granted access to tools, and woven into workflows, its effective horizon widens. We are not just improving a model. We are increasing its jurisdiction.

Thinking in terms of a cognitive light cone helps us see that what matters is not only what the model can do in isolation, but how far its judgments, recommendations, and actions can travel into the surrounding organization. A system with a narrow cone may be little more than a bounded assistant. A system with a wide cone may influence decisions, trigger actions, and shape outcomes across whole workflows. That is when governance becomes less about software behaviour in the abstract and more about delegated authority.

Competence Plus Reach

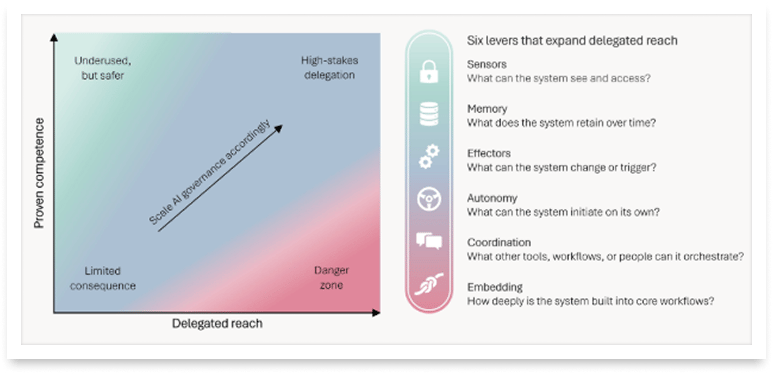

That word, jurisdiction, matters. Much of the confusion around AI governance comes from treating intelligence as the only thing worth measuring. But intelligence on its own tells you surprisingly little. A highly capable model with no access, no memory, and no ability to act may be of limited consequence. A less capable one, given broad access and allowed to operate across critical workflows, may become far more dangerous. What matters is the relationship between competence and reach.

This is the core point. Risk does not arise from model capability alone. It arises from the interaction between capability and delegated reach. Governance begins to fail when reach outruns capability, that is, when a system is handed more authority than its demonstrated competence can support. That is a more useful way to think about trustworthy AI than judging systems mainly by model quality in isolation.
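One rough way to make the comparison concrete is to record both quantities side by side and flag any deployment where reach exceeds competence. The sketch below is purely illustrative: the `Deployment` type, the 0 to 5 scales, and the example ratings are assumptions made for this example, not measures proposed in the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """A hypothetical record of one AI deployment (illustrative only)."""
    name: str
    competence: int  # assumed 0-5 scale: how well validated the system is for this task
    reach: int       # assumed 0-5 scale: how far its outputs and actions can travel


def reach_outruns_competence(deployment: Deployment) -> bool:
    """Return True when delegated reach exceeds demonstrated competence."""
    return deployment.reach > deployment.competence


# Two made-up cases mirroring the contrast in the text.
sandboxed_model = Deployment("strong model, no tools", competence=5, reach=1)
wired_assistant = Deployment("weaker assistant on a critical workflow", competence=2, reach=4)

for d in (sandboxed_model, wired_assistant):
    status = "review delegation" if reach_outruns_competence(d) else "within bounds"
    print(f"{d.name}: {status}")
```

The numbers carry no authority of their own. The value of writing them down is that the second quantity, reach, stops being implicit.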

It also explains why debates about AI governance often feel muddled. One person is thinking about model intelligence. Another is thinking about the authority the system has been given. Both matter, but the second is too often left implicit. A practical governance framework has to make it explicit.

How Leaders Miss the Shift

This is also why the problem can creep up on leaders. Delegated agency does not always arrive with theatrical flourish. It does not necessarily announce itself as a fully autonomous digital employee. More often it appears first as a digital assistant, tucked inside familiar tools and working through an employee’s existing identity and permissions. That can feel reassuring. Nothing much seems to have changed. Yet something important has changed. The actor inside the workflow is no longer just the employee.

An assistant embedded across email, documents, meetings, and chat does not need formal authority to alter how work is done. Give it a little time and people may stop writing the first draft themselves. They may stop reading the whole thread. They may stop making the first pass through a document or testing their own initial judgment before seeing the system’s version. None of this looks dramatic. There is no obvious moment at which the organization declares that authority has been delegated. But delegation is happening all the same, through habit, speed, convenience, and the quiet transfer of cognitive labour.

That is one reason so-called copilots deserve more scrutiny than their branding suggests. They can expand practical influence long before anyone admits that the sphere of agency has widened. What appears to be a simple productivity layer may, in practice, become an important site of delegated judgment.

The Six Levers of Delegated Agency

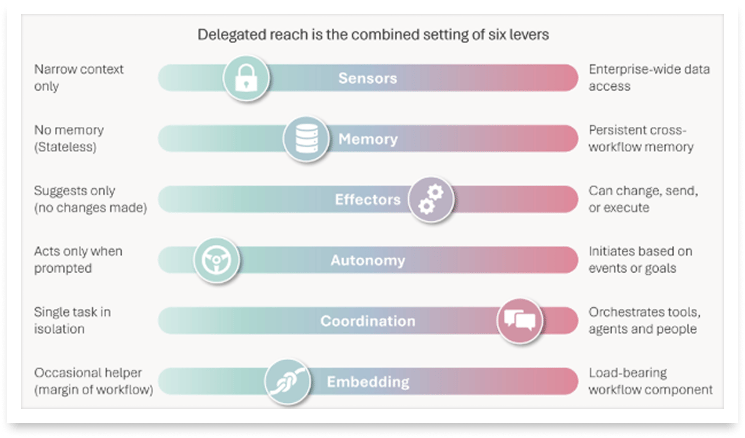

Once you see the issue in those terms, a more useful governance vocabulary comes into view. The widening of delegated agency happens through six practical levers: Sensors, Memory, Effectors, Autonomy, Coordination, and Embedding. Together, these levers determine how wide the system’s sphere of influence has become.

Sensors are what the system can see. Access is never just a technical detail. It defines the informational world within which the system operates. There is a major difference between an AI that sees one uploaded file and one that can range across contracts, emails, spreadsheets, calendars, policies, and transaction records. Yet organizations often blur that difference in practice, especially early on, when convenience takes precedence over discipline. Later, the access remains.

Memory is what the system remembers. Stateless systems are one thing. Systems that accumulate context over time are another. Memory is useful, but it also changes the relationship. A system that remembers past interactions, preferences, exceptions, names, roles, and judgments is no longer just responding in the moment. It is building continuity. Once memory enters the picture, so do questions of retention, correction, deletion, inspection, and reset.

Effectors are what the system can change. The distance between drafting and sending, suggesting and posting, flagging and freezing, is the distance between advice and consequence. Many organizations speak loosely about AI “supporting decisions” when what they really mean is that the system is entering the chain by which outcomes are produced. At that point, the old disciplines of control begin to matter again: approvals, reversibility, segregation of duties, and accountability for action. An AI system with broad effectors is not merely offering assistance. It is participating in operations.

Autonomy is whether the system waits for a prompt or can initiate action on its own. A system that waits to be used is one thing. A system that monitors conditions, decides when something needs attention, and starts acting without being asked is another. The risk is not only that it may be wrong. It is that the scale and tempo of error change. Mistakes can repeat automatically. Weak judgments can propagate faster than ordinary supervision can catch them. The question is not just whether the output looks sensible, but when the system is allowed to begin and under what conditions.

Coordination is what the system can orchestrate. A model that writes text is one thing. A model that can call tools, trigger workflows, pull records, contact people, or direct other systems is operating on a different plane. The importance lies in the chain. Small acts of orchestration can add up to substantial consequences, especially when no single human being sees the whole sequence clearly enough to own it. At that point, traceability becomes critical, along with permissions, logging, checkpoints, and a clear line of accountability.

Embedding is how load-bearing the system has become. A lightly used assistant is one thing. A system embedded so deeply in a workflow that people no longer know how to proceed without it is another. Embedding changes the character of risk because dependence changes the character of failure. If there is no tested manual fallback, if timelines assume the system will function, or if staff no longer understand the process apart from the system’s participation, then the organization has made the AI part of its operating infrastructure whether or not anyone has said so explicitly.

These six levers are not minor implementation details. Sensors, Memory, Effectors, Autonomy, Coordination, and Embedding together determine how much of the organization’s perception, continuity, action, initiation, orchestration, and resilience now runs through the system. A great deal of current AI governance still focuses on the model alone. It asks whether the model is accurate enough, safe enough, fair enough, or explainable enough. Those are real questions. But on their own they do not tell us how much power has actually been handed over.
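As a minimal sketch, the six levers can be written down as a simple profile for each deployed system, so that the widening of agency is recorded rather than assumed. The `LeverProfile` type, the 0 to 3 rating scale, and the example values below are assumptions for illustration, not part of any established framework.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class LeverProfile:
    """Hypothetical 0-3 rating per lever: 0 = none, 3 = broad."""
    sensors: int       # what the system can see
    memory: int        # what it remembers
    effectors: int     # what it can change
    autonomy: int      # whether it can initiate action unprompted
    coordination: int  # what it can orchestrate
    embedding: int     # how load-bearing it has become

    def total_reach(self) -> int:
        """Sum of all lever ratings, a crude indicator of overall reach."""
        return sum(getattr(self, f.name) for f in fields(self))

    def widest_levers(self) -> List[str]:
        """Names of the levers with the highest rating."""
        top = max(getattr(self, f.name) for f in fields(self))
        return [f.name for f in fields(self) if getattr(self, f.name) == top]


# A made-up "copilot": wide access and growing dependence, but no direct actions.
copilot = LeverProfile(sensors=3, memory=1, effectors=0,
                       autonomy=0, coordination=0, embedding=2)

print(copilot.total_reach())    # 6
print(copilot.widest_levers())  # ['sensors']
```

Even a crude inventory like this shows where a nominally modest assistant has already acquired reach, here through access and embedding rather than through any ability to act.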

A simple analogy helps. Imagine two people of equal raw intelligence. One has little money, little access, no title, and no institutional support. The other runs a state. Their minds may be no different in principle, yet the scale of their consequences is radically different. The difference is not intelligence. It is jurisdiction. AI systems are much the same. The same model can be deployed in a narrow, bounded role or in one with far wider authority. To ask only how clever the model is misses the point.

The Governance Implication

Human institutions have always struggled with the danger that power can outrun judgment. Someone is promoted too early. Access is granted too broadly. A role acquires responsibilities before the controls around it are mature. What follows is not always catastrophe. Often it is something quieter: overconfidence, hidden fragility, errors that compound because too much was assumed too soon. AI systems make the same structural mistake available in a new register. A model may sound convincing before it is reliable. A pilot may look impressive before anyone has seen how the system behaves at scale. The widening of agency often happens faster than the widening of governance.

So the task is not merely to test models and hope for the best. It is to calibrate delegated power. Strong systems in well-controlled domains may deserve broader reach. Weak or poorly understood systems do not. Access should be earned. Autonomy should be bounded. Action should be matched by oversight. The deeper the memory, the wider the access, the stronger the effectors, the greater the coordination, and the more load-bearing the role, the more serious governance needs to become. Not because every AI system is dangerous, but because every meaningful delegation of agency deserves to be visible, proportionate, and answerable.
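The idea that governance should scale with delegated power can be sketched as a lookup from levers to controls. The control names below are drawn loosely from the disciplines mentioned earlier in this article (approvals, reversibility, logging, manual fallback, and so on); the mapping itself, the `required_controls` function, the 0 to 3 ratings, and the threshold of 2 are all assumptions for illustration.

```python
from typing import Dict, List

# Hypothetical mapping from each lever to the controls it calls for once it widens.
CONTROLS_BY_LEVER: Dict[str, List[str]] = {
    "sensors": ["access review"],
    "memory": ["retention policy", "correction and deletion", "inspection and reset"],
    "effectors": ["approvals", "reversibility", "segregation of duties"],
    "autonomy": ["defined start conditions"],
    "coordination": ["logging", "checkpoints", "traceability"],
    "embedding": ["tested manual fallback"],
}


def required_controls(levers: Dict[str, int], threshold: int = 2) -> List[str]:
    """List the controls implied by every lever rated at or above the threshold.

    Levers missing from the input are treated as 0 (no delegated reach).
    """
    controls: List[str] = []
    for lever, names in CONTROLS_BY_LEVER.items():
        if levers.get(lever, 0) >= threshold:
            controls.extend(names)
    return controls


# A bounded assistant triggers nothing; a system that acts and is load-bearing does.
bounded_assistant = {"sensors": 1}
operational_agent = {"effectors": 3, "embedding": 2}

print(required_controls(bounded_assistant))
print(required_controls(operational_agent))
```

The design choice worth noting is proportionality: a narrow system accumulates no obligations, while each widened lever adds its own controls rather than triggering one uniform regime.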

That, in the end, is why trustworthy AI is really about delegated agency. The deepest challenge is not to make software that behaves well in isolation. It is to govern non-human actors inside human institutions. Once that becomes the centre of the conversation, a great deal of today’s fog begins to lift. We can stop speaking in slogans and start asking the harder, more useful question: what power has this system actually been given?

This article argues that trustworthy AI is best understood not primarily as a software quality problem, but as a governance problem of delegated agency. As AI systems move beyond generating outputs and begin to observe, remember, recommend, initiate, coordinate, and act within organizational workflows, the key question shifts. It is no longer enough to ask whether a model is accurate, robust, fair, or compliant in isolation. The more important question is what sort of authority the system has effectively been given.

Drawing on Michael Levin’s idea of the cognitive light cone, the article proposes that AI systems should be understood in terms of the scope of consequences they can produce, not just their intelligence in the abstract. The central insight is that risk arises from the interaction between competence and reach. A highly capable model with limited access and no operational role may be low consequence, while a less capable system embedded in critical workflows may be far more dangerous.

To make this practical, the article identifies six levers through which delegated agency expands: Sensors, Memory, Effectors, Autonomy, Coordination, and Embedding. Together, these levers determine how wide the system’s sphere of influence has become, and therefore how demanding governance needs to be.

Trustworthy AI is best understood as a question of delegated agency. Once AI systems can see, remember, initiate, affect, coordinate, and become embedded in real workflows, the core governance issue is no longer model quality alone, but the scope of authority the system has effectively been given. The practical implication is simple: governance should scale with delegated power. AI systems with narrow reach can be treated as bounded assistants. Systems with broader reach, stronger effectors, greater autonomy, and deeper embedding require correspondingly stronger oversight, controls, and accountability. The goal is not to govern all AI in the same way, but to make delegated agency visible and govern it in proportion to its reach.

Martin Fjeldbonde

Martin writes and speaks about trustworthy AI, delegated agency, and how increasingly agentic systems are changing professional judgment, institutional oversight, and the operating model of audit. His work focuses on what AI means for assurance, accountability, and trust in organizations.

Within Deloitte, he works on building the future of audit in the age of AI, with a particular interest in governance, operating model redesign, and the practical conditions for deploying AI responsibly inside companies.

Martin Fjeldbonde is a Partner at Deloitte, where he works on AI advisory, AI governance, and the future of audit across EMEA.

Sources:

Michael Levin, The Computational Boundary of a “Self”: Developmental Bioelectricity Drives Multicellularity and Scale-Free Cognition. https://www.frontiersin.org/journals/psychology/articles/10.3389/fpsyg.2019.02688/full

Michael Levin, Technological Approach to Mind Everywhere (TAME): An Experimentally-Grounded Framework for Understanding Diverse Bodies and Minds. https://doi.org/10.48550/arXiv.2201.10346