In Today’s Issue:

🛑 Anthropic is scrapping its original 2023 safety pledge in favor of rolling risk reports

💻 Nvidia just unveiled Vera Rubin, a liquid-cooled beast of a system that delivers 10x the performance per watt of its Grace Blackwell predecessor

💰 Amazon is negotiating a jaw-dropping $50 billion investment in OpenAI

🪖 Anthropic faces a critical Friday deadline to either drop its safety red lines for the Pentagon or risk being blacklisted like a foreign adversary

✨ And more AI goodness…

Dear Readers,

Tomorrow at 5:01 p.m., the most consequential standoff between Silicon Valley and the Pentagon reaches its breaking point: Anthropic either bends its safety red lines for military use or faces a designation normally reserved for foreign adversaries like Huawei.

That alone would make today's issue essential reading, but we're just getting started. Anthropic is also quietly dismantling its original safety pledge from 2023, trading hard-stop thresholds for rolling transparency reports as competition and a $380 billion valuation rewrite the rules of responsible scaling.

Meanwhile, Nvidia just pulled the curtain back on Vera Rubin, a nearly two-ton, liquid-cooled beast that delivers 10x the performance per watt of its predecessor, an efficiency gain that could reshape the economics of every AI data center on the planet. And then there's Amazon, negotiating a jaw-dropping $50 billion bet on OpenAI, most of which hinges on either an IPO or the arrival of AGI itself.

From military brinkmanship to infrastructure arms races to bets on artificial general intelligence, today's issue maps the fault lines where power, money, and principles collide. Dig in.

All the best,

⚠️ Anthropic Relaxes Core AI Safeguard

Anthropic has scrapped its 2023 pledge to halt AI training unless safety protections were guaranteed in advance, marking a major shift in its Responsible Scaling Policy. Executives say fierce competition, a lack of global regulation, and murky risk science made the original “red line” approach unrealistic, especially as the company’s valuation surged to $380 billion and revenue grew 10x annually.

Instead of hard-stop thresholds, Anthropic will now publish Frontier Safety Roadmaps and Risk Reports every 3 to 6 months, promising transparency and safety parity (or better) versus rivals. The move signals a broader industry reality: AI capabilities are accelerating faster than governance, and companies are adapting their guardrails to stay in the race.

🚀 Nvidia Unveils Ultra-Efficient AI

Nvidia showed CNBC its next-gen AI system, Vera Rubin, which boasts 10x more performance per watt than its Grace Blackwell predecessor, a massive leap at a time when energy efficiency is becoming AI’s biggest bottleneck. Built from 1.3 million components, powered by 72 Rubin GPUs and 36 Vera CPUs, and fully liquid-cooled, the nearly 2-ton rack system is expected to cost $3.5 million to $4 million and ship in the second half of 2026.

With customers like Meta, Amazon, Google, Microsoft, OpenAI, and Anthropic lining up, and rival Advanced Micro Devices turning up the heat, Nvidia is doubling down on AI dominance while planning up to $500 billion in U.S. AI infrastructure by 2029. The message is clear: efficiency equals ROI, and Nvidia wants to own the next chapter of AI scale.

🤖 Amazon’s $50B AGI or IPO Gambit

Amazon is negotiating a potential $50 billion investment in OpenAI, starting with $15B upfront and another $35B tied to either an IPO or achieving artificial general intelligence (AGI). The deal is part of OpenAI’s massive funding round, which could exceed $100B at a staggering $730B valuation, with SoftBank and Nvidia each committing an additional $30B.

OpenAI’s compute costs are projected to hit $665B over five years, pushing it toward public markets and deeper cloud partnerships, including expanded use of Amazon’s Trainium chips and custom AI models for Alexa. If OpenAI reaches AGI, Microsoft’s exclusive Azure hosting rights could loosen, unlocking huge strategic upside for Amazon.

Nvidia CEO Jensen Huang on AI's pressure on software stocks

AI Safety vs. Military Power: Friday Deadline

The Takeaway

👉 The Pentagon is actively surveying major defense contractors like Boeing and Lockheed Martin about their dependence on Claude, signaling a potential "supply chain risk" designation that would force military partners to cut ties with Anthropic.

👉 Anthropic holds firm on two red lines: no mass domestic surveillance, and no autonomous weapons without human control, even as competitors xAI, Google, and OpenAI signal willingness to accept the Pentagon's "all lawful purposes" standard.

👉 The $200M contract at stake is small relative to Anthropic's $14B annual revenue, but a blacklist designation could ripple across its entire enterprise business, since many major corporations also hold defense contracts.

👉 Friday's deadline will set the template for every future negotiation between AI labs and the U.S. government, establishing whether tech companies or the military get the final say on how frontier AI is deployed.

The clock is ticking, and it expires tomorrow at 5:01 p.m. Defense Secretary Pete Hegseth has given Anthropic CEO Dario Amodei a hard deadline: drop your AI safety guardrails for military use or face consequences no American tech company has ever faced before.

Here's what's happening. The Pentagon wants to use Claude, currently the only AI model running in the military's classified systems, for "all lawful purposes," no questions asked. Anthropic says no to two things: mass surveillance of American citizens and autonomous weapons that fire without a human pulling the trigger. The Pentagon calls that unworkable. Anthropic calls it non-negotiable.

On Wednesday, the Pentagon contacted Boeing and Lockheed Martin to assess their reliance on Claude, a first step toward designating Anthropic a "supply chain risk," a label normally reserved for foreign adversaries like Huawei. Meanwhile, Elon Musk's xAI has already signed up for classified military work under the Pentagon's terms. Google and OpenAI are reportedly close behind.

This is bigger than one contract. It's the first real test of whether an AI company can set ethical boundaries with the most powerful military on earth, and survive. Could Anthropic's stand actually reshape how the entire industry navigates the tension between safety and national security?

Why it matters: This standoff will set the precedent for how much control AI companies retain over their own technology once the government comes knocking. The outcome could determine whether safety-first AI development remains commercially viable, or becomes a luxury no company can afford.

Sources:

🔗 https://www.axios.com/2026/02/25/anthropic-pentagon-blacklist-claude

The Lithium Boom is Heating Up

Thanks to growing demand, lithium stock prices more than doubled from June 2025 to January 2026. $ALB climbed as much as 227%, $LAC 151%, and $SQM 159%.

This $1B unicorn’s patented technology can recover 3X more lithium than traditional methods. That’s earned investment from leaders like General Motors.

Now they’re preparing for commercial production just as experts project 5X demand growth by 2040. They’ve announced what could be one of the largest lithium production facilities in the U.S. and hold rights to approximately 150,000 lithium-rich acres across North and South America.

Unlike public stocks, you can buy private EnergyX shares alongside 40,000+ other investors. Invest for $11/share by the 2/26 deadline.

This is a paid advertisement for EnergyX Regulation A offering. Please read the offering circular at invest.energyx.com. Under Regulation A, a company may change its share price by up to 20% without requalifying the offering with the Securities and Exchange Commission.

Matcha May Shield Your Aging Brain

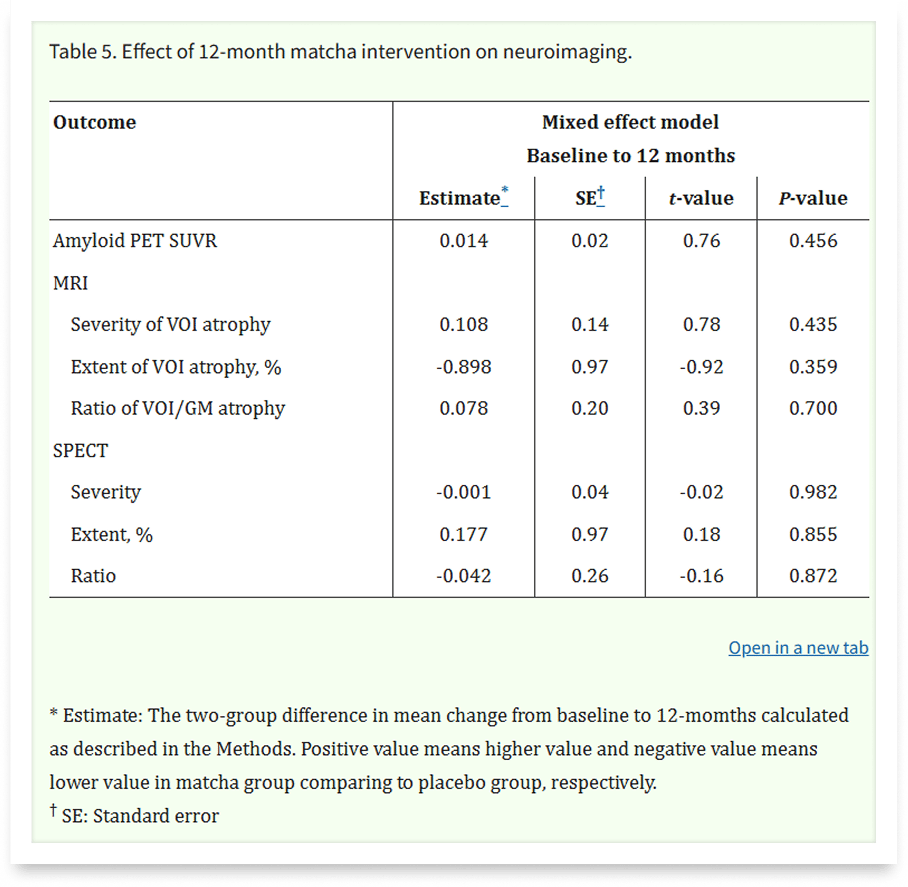

Two grams of matcha a day might keep cognitive decline at bay. A 12-month randomized clinical trial just delivered surprising evidence that this centuries-old Japanese tea can sharpen one of the brain's most vulnerable functions, and improve sleep in the process.

Researchers gave 99 older adults with early cognitive decline either daily matcha capsules or a placebo for an entire year. The matcha group showed significant improvement in social acuity, their ability to read facial emotions, which is one of the earliest skills to deteriorate as dementia sets in. On top of that, sleep quality trended toward meaningful improvement, despite matcha containing caffeine. The secret weapon? Theanine, an amino acid in matcha that appears to counteract caffeine's jittery effects while actively promoting rest.

Now the caveats: this was a small study, the primary cognitive tests didn't budge, and several authors work for ITO EN, one of Japan's biggest tea companies. Still, with dementia cases projected to hit 152.8 million globally by 2050, even modest prevention tools deserve attention.

Early cognitive decline currently has no cure, only lifestyle interventions that might slow it down. This study suggests matcha could be one of the easiest, most culturally accessible tools for keeping your brain sharp and your sleep deep as you age.

Turn AI Into Your Income Stream

The AI economy is booming, and smart entrepreneurs are already profiting. Subscribe to Mindstream and get instant access to 200+ proven strategies to monetize AI tools like ChatGPT, Midjourney, and more. From content creation to automation services, discover actionable ways to build your AI-powered income. No coding required, just practical strategies that work.