In Today’s Issue:

💻 OpenAI drops a major Codex update

🚀 Qwen releases an impressive open-weight 35B model

🧬 OpenAI signals a major shift toward vertical AI with GPT-Rosalind

⚖️ Anthropic launches Claude Opus 4.7

🪖 Google negotiates a massive deal to deploy Gemini inside the Pentagon

✨ And more AI goodness…

Dear Readers,

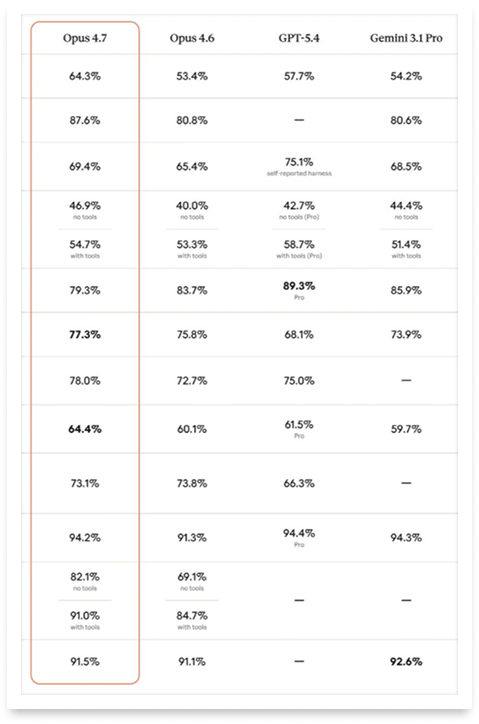

Anthropic just shipped Opus 4.7, and instead of a victory lap, the launch reads more like a trust exercise: yes, the benchmarks beat GPT-5.4 and Gemini 3.1 Pro, but the company itself concedes the model trails Mythos Preview, a system locked behind invite-only access, and developers on X are already calling 4.7 the "un-nerfed 4.6" after weeks of forensically documented performance drops in its predecessor. That tension, between what AI labs ship and what users actually experience over time, runs through today's entire issue.

OpenAI pushed two major releases in parallel: a Codex update that transforms the coding agent into something closer to a persistent AI colleague that can operate your computer, manage workflows across days, and remember your preferences, plus GPT-Rosalind, a life sciences reasoning model that signals the industry's quiet pivot toward vertical, tightly controlled systems sold to pharma and biotech behind enterprise gates.

Meanwhile, Qwen keeps shipping open-weight models that punch absurdly above their parameter count, and in our Daily Feature, we unpack Google's negotiations with the Pentagon to deploy Gemini inside classified networks, a full-circle moment from the company that once walked away from Project Maven after thousands of its own employees revolted. From shrinkflation drama to biotech verticalization to the return of military AI deals, today's issue is packed, so grab your coffee and scroll on.

All the best,

Kim Isenberg

Second release of the day: OpenAI’s Codex update transforms it from a coding assistant into a broader AI partner across the software lifecycle, capable of operating a computer, interacting with apps, generating images, and even managing ongoing tasks. With features like multi-agent workflows, memory, automations, and deep integrations (e.g., GitHub, Slack, JIRA), it increasingly acts as a persistent collaborator that can learn preferences, suggest next steps, and continue work over time.

This signals a move toward AI that doesn’t just assist with code, but actively coordinates, executes, and anticipates developer needs, hinting at a future where building software becomes more about direction than manual effort. Or in other words: OpenAI is showing where its journey is headed.

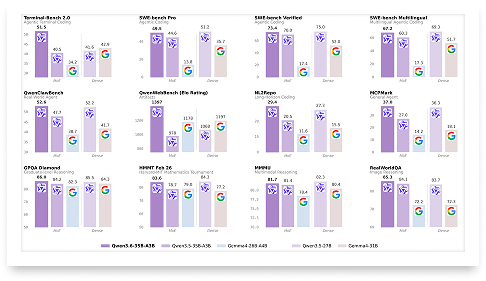

🚀 Qwen3.6-35B-A3B Debuts Open Coding Power

Today’s issue is all about new releases. Let’s start with Qwen3.6-35B-A3B. It is presented as Qwen’s first open-weight Qwen3.6 model, with a strong emphasis on agentic coding, repository-level reasoning, and a new thinking preservation feature designed to make iterative development more coherent and efficient. The release positions itself as a practical model for real-world use, pairing a 35B total / 3B activated MoE architecture, 262K native context, multimodal support, and broad deployment options across SGLang, vLLM, Transformers, and KTransformers.

What stands out is how deliberately this model is framed around developer workflow rather than just raw benchmark prestige: the documentation ties performance claims to concrete serving recipes, sampling guidance, long-context strategies like YaRN, and agent tooling through Qwen-Agent and Qwen Code. The benchmark tables suggest solid gains in coding and multimodal tasks, while the overall message is clear: Qwen wants this model to feel not only capable, but also usable in production-like settings.

Kudos to the Qwen team. They keep shipping amazing updates.

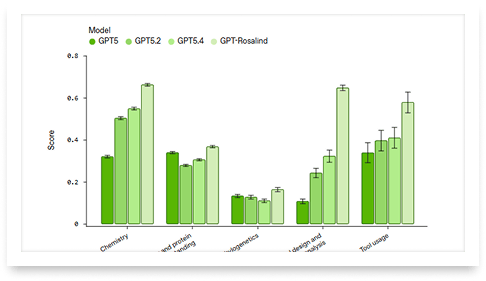

🧬 OpenAI Targets Biotech Verticalization

The third release looks important, but it is not quite a moonshot. GPT-Rosalind, a new life-sciences-focused reasoning model from OpenAI aimed at biology, drug discovery, and translational medicine, seems less like a breakthrough science engine and more like a specialized reasoning layer for research workflows. It looks useful for literature synthesis, tool use, experiment planning, and data interpretation, with benchmark gains that are credible but measured rather than obviously transformative.

However, there is a deeper story: OpenAI is joining a broader move toward industry-specific frontier models, sold through trusted-access enterprise channels rather than open, general-purpose deployment. That makes Rosalind feel less like a flashy product launch and more like a signal that the AI business is shifting toward vertical, high-stakes, tightly controlled systems—especially in pharma and biotech, where workflow integration may matter as much as raw model intelligence.

Bloomberg's Context breaks down why Anthropic's new Mythos model has banks, tech giants, and governments scrambling over what it means for cybersecurity and the future of the internet.

Opus 4.7

The Takeaway

👉 Opus 4.7 tops GPT-5.4 and Gemini 3.1 Pro on coding and agentic benchmarks, but Anthropic itself concedes it trails Mythos Preview, a model locked behind invite-only access for cybersecurity partners.

👉 The release lands after weeks of "AI shrinkflation" accusations, with developers documenting measurable performance drops in Opus 4.6, leading many to dismiss 4.7 as a restoration rather than an upgrade.

👉 A new tokenizer inflates token counts by up to 35%, and higher default effort levels in Claude Code mean real-world costs will rise, even though per-token pricing stays flat.

👉 The actual test is whether Anthropic maintains Opus 4.7's launch-day performance over the coming weeks; power users are watching closely for any repeat of the degradation pattern.
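To make the cost point from the takeaways concrete, here is a minimal Python sketch of how a flat per-token price can still produce a higher bill when the tokenizer emits more tokens for the same text. The price of $10 per million tokens and the function name `effective_cost` are illustrative assumptions, not Anthropic's pricing or API. The 35% figure is the reported upper bound, so real workloads may see less. The effect of higher default effort levels is not modeled, because no figure is given for it.

```python
def effective_cost(old_token_count: float,
                   price_per_million: float,
                   inflation: float = 0.35) -> float:
    """Estimate the bill for text that used to need `old_token_count` tokens.

    `inflation` is the fractional increase in token count caused by the new
    tokenizer (0.35 is the reported worst case, used here as an assumption).
    `price_per_million` is a hypothetical per-token price that stays flat.
    """
    if old_token_count < 0 or price_per_million < 0 or inflation < 0:
        raise ValueError("inputs must be non-negative")
    new_token_count = old_token_count * (1 + inflation)
    return new_token_count / 1_000_000 * price_per_million


# Hypothetical workload: 1M tokens under the old tokenizer at $10 per million.
before = effective_cost(1_000_000, 10.0, inflation=0.0)
after = effective_cost(1_000_000, 10.0)  # worst-case 35% inflation
print(f"before: ${before:.2f}, after: ${after:.2f}")
```

In this worst-case scenario the same text costs 35% more, even though the listed per-token price never changed.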

Fourth release: Anthropic just shipped Claude Opus 4.7, and the mood is... complicated. On paper, the new flagship model beats Opus 4.6, GPT-5.4, and Gemini 3.1 Pro on key benchmarks, especially in agentic coding and multi-step engineering tasks. It processes images at 3x the resolution, follows instructions more literally, and can even verify its own outputs before reporting back. Sounds great, right?

Here's the catch: Anthropic openly admits Opus 4.7 is "less broadly capable" than Mythos Preview, the model it keeps locked away behind Project Glasswing for select cybersecurity partners (which was to be expected). That admission has set the tone for the entire release. Gizmodo put it bluntly, framing the launch as basically a reminder of how impressive Mythos is.

On X and Reddit, the reception is even spicier. Developers are calling Opus 4.7 "the un-nerfed 4.6," suggesting this is simply the original model performance they were paying for before weeks of alleged degradation. The term "AI shrinkflation" keeps circulating, fueled by an AMD senior director's forensic analysis of over 6,800 Claude Code sessions showing a roughly 73% collapse in reasoning depth over recent months. A new tokenizer that can inflate token counts by up to 35% hasn't calmed those nerves either. Some even say it feels like a disgruntled employee doing their job sloppily.

Still, early testers who actually work with Opus 4.7 in production report meaningful gains, especially for long-running, autonomous coding tasks. The new "xhigh" effort level and task budgets give developers finer control over the quality-cost tradeoff. If Anthropic can resist the temptation to quietly "optimize" this model over time, Opus 4.7 could rebuild the trust that's been eroding. The real question: will the next few weeks prove the skeptics wrong, or will the nerfing cycle repeat?

So far, however, it is a very mixed release.

Why it matters: Opus 4.7 arrives at a fragile moment for Anthropic's credibility with power users. Whether this release restores trust or deepens the "shrinkflation" narrative could define how developers choose their AI tools for the rest of 2026.

Sources:

🔗 https://www.anthropic.com/news/claude-opus-4-7

🔗 https://www.axios.com/2026/04/16/anthropic-claude-opus-model-mythos

🔗 https://www.reddit.com/r/ClaudeAI/comments/1snhfzd/claude_opus_47_is_a_serious_regression_not_an/


This NVIDIA slide presents the fundamental economics of AI inference: cost per million tokens equals the GPU's hourly rental cost divided by its total hourly token output (tokens/sec × 3,600 seconds), multiplied by one million. It shows that the entire AI industry's economics hinge on maximizing tokens-per-second throughput, which is exactly why NVIDIA can sell ever-more-expensive GPUs while actually making AI cheaper to run.
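The formula described above can be sketched in a few lines of Python. The GPU prices and throughput numbers below are made-up illustrative assumptions, not figures from the NVIDIA slide, and `cost_per_million_tokens` is a hypothetical helper name.

```python
def cost_per_million_tokens(gpu_cost_per_hour: float,
                            tokens_per_second: float) -> float:
    """Cost per 1M tokens = hourly GPU cost / hourly token output * 1,000,000."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600  # 3,600 seconds in an hour
    return gpu_cost_per_hour / tokens_per_hour * 1_000_000


# Hypothetical baseline GPU: $3.60 per hour at 1,000 tokens per second.
baseline = cost_per_million_tokens(3.60, 1_000)

# Hypothetical newer GPU: twice the hourly price, four times the throughput.
newer = cost_per_million_tokens(7.20, 4_000)

print(f"baseline: ${baseline:.2f} per 1M tokens")
print(f"newer:    ${newer:.2f} per 1M tokens")
```

Under these assumed numbers, the GPU that costs twice as much per hour still halves the cost per million tokens, because throughput sits in the denominator and grows faster than the price.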

From Maven Walkout to Gemini

Google is negotiating to deploy its Gemini AI models inside the Pentagon's classified networks. According to The Information, the deal would allow the military to use Gemini for "all lawful purposes," a framework mirroring the contract OpenAI signed earlier this year. Google has proposed additional contract language to prevent use for domestic mass surveillance or autonomous weapons without human oversight. However, legal experts have noted that similar clauses in OpenAI's deal may not effectively block those applications, since the "all lawful purposes" framing could override specific restrictions.

This represents a significant shift. In 2018, over 3,000 Google employees protested the company's involvement in Project Maven, the Pentagon's drone-targeting AI program, and Google walked away. The fallout damaged the company's relationship with defense officials for years. Since then, Google has moved step by step back toward the military: it built a dedicated Public Sector unit staffed with defense veterans, removed explicit bans on weapons and surveillance AI from its principles in early 2025, and won a $200 million AI pilot contract with the Department of Defense last year.

The timing adds complexity. While Google pursues deeper Pentagon ties, Anthropic is fighting the military in court after being labeled a "supply chain risk" for refusing to drop its red lines on surveillance and autonomous weapons. More than 200 Google and OpenAI employees signed letters supporting Anthropic's stance, and Google's chief scientist Jeff Dean filed an amicus brief in Anthropic's defense. Google still trails AWS and Microsoft in government cloud market share, and internally, its Public Sector unit has been told it needs to grow faster, making this deal as much a commercial imperative as a strategic one.
