In Today’s Issue:

🗣️ OpenAI launches new voice models

🛡️ The US government begins screening frontier AI models

🩺 Google beats Apple to the punch with a Gemini-powered AI health coach

🐞 Mozilla deploys Anthropic’s Mythos as an autonomous bug hunter

✨ And more AI goodness…

Dear Readers,

AI is no longer just answering questions; it's running systems.

Today's stories share a quiet but significant shift: AI models are moving from assistants into operators. OpenAI's new voice models don't just transcribe - they reason mid-conversation and call tools in real time. AlphaEvolve isn't writing code for developers - it's redesigning Google's next-generation TPUs. Mozilla isn't asking AI to suggest fixes - it's deploying it as an autonomous bug hunter across Firefox's codebase. And Washington isn't debating AI policy in the abstract anymore - it's screening frontier models before release. The pattern is clear: AI is being handed not just tasks, but agency over critical infrastructure, from chip design to national security. The question now isn't whether AI can help. It's who controls the

All the best,

Kim Isenberg

Google Releases an AI Coach in Its New Health App, Beating Apple to the Punch

Google has launched an AI health coach powered by Gemini, one that works with all your health data. This is the first real step towards a personal AI doctor and coach, and it signals that personalized AI medicine is finally becoming real. Google is turning the Fitbit app into Google Health, a single hub for fitness, sleep, nutrition, cycle tracking, vital signs, and even U.S. medical records. Fitbit stays the hardware core, while the app becomes the home base for Gemini-powered coaching and the new Fitbit Air ecosystem.

All this before Apple moves forward with its revamped Siri and a similar concept.

👉 tl;dr: Google launched a Gemini-powered AI health coach that pulls from all your health data, effectively turning Fitbit into a 24/7 personalized medical hub before Apple can ship anything comparable.

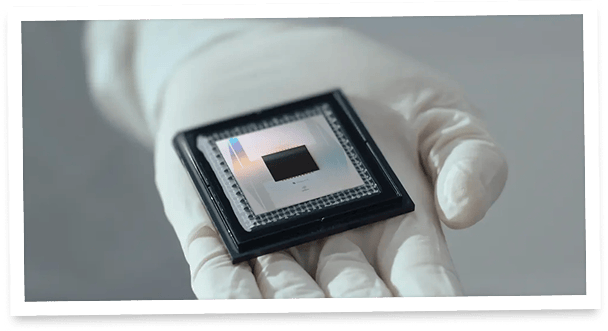

🧬 AlphaEvolve Designs Faster Infrastructure

Google DeepMind says AlphaEvolve, its Gemini-powered coding agent, has moved from experimental algorithm designer to a system shaping genomics, power grids, quantum simulations, mathematics, and commercial optimization. One of the most revealing details is inside Google itself: “AlphaEvolve has been used as a regular tool to optimize the design of the next generation of TPUs,” and it discovered more efficient cache replacement policies in two days, work that had previously taken months of intensive human effort.

👉 tl;dr: AlphaEvolve is being framed as a general-purpose AI optimizer that is already improving Google’s next-generation TPU design and speeding up infrastructure breakthroughs.

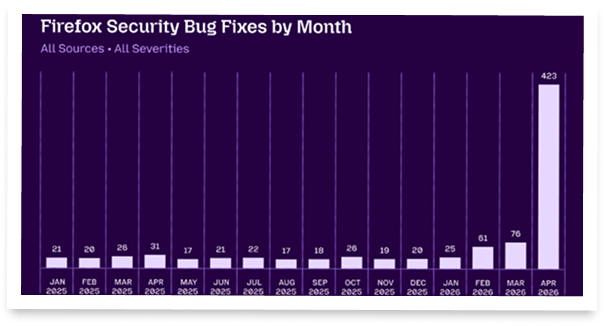

🛡️ Mozilla Tests AI Bug-Hunting Claims

Mozilla says Anthropic’s Mythos helped uncover 271 Firefox security flaws in two months, with engineers arguing the breakthrough came from pairing stronger AI models with a custom harness that gave the system clear goals, testing tools, and verification loops. The claim is important because it pushes back against fears of AI-generated security “slop,” but the debate remains tense: critics want proof beyond selected Bugzilla reports, clearer economics, and answers about whether attackers will gain the same speed and scale.

👉 tl;dr: Mozilla says AI-assisted vulnerability hunting is finally useful when tightly guided and verifiable, but skepticism over hype, costs, and attacker misuse remains.

Ask AI to turn a messy topic into a simple decision map before you act.

Why it helps: Today’s AI news is full of complex tradeoffs: health data, AI security, voice agents, government testing. A decision map helps you see options, risks, and next steps clearly instead of just reacting to the headline.

Try this: “Help me think through this decision: [insert situation]. Give me 3 options, the upside of each, the downside of each, what I might be missing, and the smartest next step if I want to be careful but not overthink it.”
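If you reuse this prompt often, a tiny helper can fill in the blank for you. This is only a sketch: the names `DECISION_MAP_TEMPLATE` and `build_decision_prompt` are made up for illustration, and the output is plain text you paste into whichever assistant you use.

```python
# Hypothetical helper: fills the "decision map" prompt from the tip above.
DECISION_MAP_TEMPLATE = (
    "Help me think through this decision: {situation}. "
    "Give me 3 options, the upside of each, the downside of each, "
    "what I might be missing, and the smartest next step if I want "
    "to be careful but not overthink it."
)


def build_decision_prompt(situation: str) -> str:
    """Return the full prompt for one concrete situation."""
    # Trim whitespace and a trailing period so the sentence reads cleanly.
    situation = situation.strip().rstrip(".")
    if not situation:
        raise ValueError("Describe the situation first.")
    return DECISION_MAP_TEMPLATE.format(situation=situation)


if __name__ == "__main__":
    print(build_decision_prompt(
        "whether to connect my medical records to a health app"
    ))
```

Keeping the wording in one constant means you can tweak the prompt once and reuse it for every decision.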

🎬 Watch This

Anthropic Research Warns AI Could Build Itself by 2028

Why it’s worth your time:

This is one of the clearest mainstream interviews yet on what Anthropic calls a potential “intelligence explosion”: the moment when AI systems begin accelerating AI research itself. Jack Clark argues there is a 60%+ chance that AI could meaningfully help build better versions of itself by the end of 2028.

Best bit:

The strongest part is Clark’s explanation of why this is no longer just a sci-fi scenario. He frames self-improving AI less as a sudden magical event and more as an R&D acceleration loop: models help write code, run experiments, train successors, and compress months of AI research into much shorter cycles.

Watch if you care about:

AI safety / frontier labs / intelligence explosion / recursive self-improvement / geopolitics / the future of work

OpenAI's Voice Models Think Now

The Takeaway

👉 OpenAI launched three new realtime voice models: GPT-Realtime-2 brings GPT-5-level reasoning to live conversations, GPT-Realtime-Translate translates 70+ input languages into 13 output languages in real time, and GPT-Realtime-Whisper streams transcription as people speak.

👉 GPT-Realtime-2 quadruples the context window to 128K tokens and introduces adjustable reasoning effort, parallel tool calling, and audible status cues, making voice agents viable for complex, multi-step workflows.

👉 Zillow saw a 26-point improvement in call success rates on adversarial benchmarks, signaling that voice agents are reaching production-grade reliability for regulated industries like real estate.

👉 The broader strategic shift: voice is evolving from a simple input method into a reasoning interface, where AI can listen, think, act, and translate simultaneously, reshaping how enterprises build customer-facing products.

Voice AI just got a brain upgrade. OpenAI dropped three new realtime audio models that could fundamentally change how we interact with software, and the flagship, GPT-Realtime-2, is the first voice model powered by GPT-5-class reasoning. That means it doesn't just listen and respond. It thinks, calls tools, recovers from interruptions, and keeps a conversation coherent across a 128K token context window, four times larger than its predecessor.

Alongside it, GPT-Realtime-Translate handles live translation across 70+ input languages into 13 output languages, while GPT-Realtime-Whisper delivers streaming transcription as words are spoken. Early adopters like Zillow, Deutsche Telekom, and Priceline are already building production agents on top of this stack. Zillow reported a 26-point jump in call success rates on adversarial benchmarks after prompt optimization.

The real shift here isn't just better voice recognition. It's voice as a reasoning interface: an AI that listens, understands intent, takes action, and adjusts tone in real time. If voice agents can now genuinely think while they talk, what does the keyboard-first software era look like in two years?

Why it matters: OpenAI is turning voice from a simple input method into a full reasoning layer, enabling AI agents that can listen, think, and act simultaneously. This sets a new baseline for what developers and enterprises will expect from conversational AI products.

The chart: Claude’s mobile app usage is accelerating fast, with MAUs jumping from 65.4M to 85.8M in one month.

The lesson: Claude is no longer just an enterprise/API story; it is becoming a mainstream consumer AI product.

The caveat: Downloads grew much more slowly than active users, so the surge may be driven more by retention, reactivation, and heavier existing usage than by a massive wave of new installs.
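For a sense of scale, here is the arithmetic behind that jump as a quick sketch. It uses only the two MAU figures quoted above; the function and variable names are illustrative, and download numbers are left out because the figures are not given here.

```python
def pct_growth(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100


# Monthly active users in millions, as quoted in the chart summary.
mau_before_m = 65.4
mau_after_m = 85.8

added_m = mau_after_m - mau_before_m
growth = pct_growth(mau_before_m, mau_after_m)

print(f"Added MAUs: {added_m:.1f}M")               # Added MAUs: 20.4M
print(f"Month-over-month growth: {growth:.1f}%")   # Month-over-month growth: 31.2%
```

That is roughly 20 million additional monthly users, or about 31% growth in a single month.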

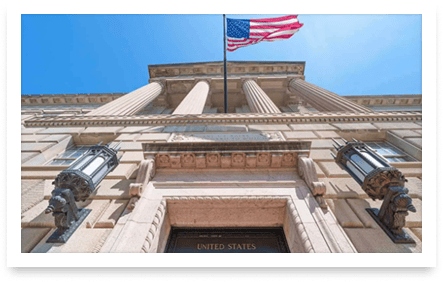

⚡ Bottom line: Microsoft, Google and xAI will let the US government test their most powerful AI models before public release.

💡 Why it matters: With AI systems growing more capable, this formal screening pipeline gives regulators a real chance to catch risks early.

🔎 What it means: Pre-deployment government testing could become the default trust framework for frontier AI worldwide.

The US government just got a front-row seat to AI's most powerful models. Microsoft, Google and xAI have signed agreements with the Center for AI Standards and Innovation (CAISI) to let federal officials test their frontier AI systems before public release. The goal: identify national security risks, from cyberattacks to military misuse, before these tools hit the wild.

(The Commerce Department’s CAISI facilitates collaboration between tech companies and the federal government.)

CAISI, sitting under the Department of Commerce, will conduct pre-deployment evaluations and targeted research to assess frontier AI capabilities. The agency has already completed over 40 evaluations, including on unreleased state-of-the-art models. Developers even hand over versions with safety guardrails removed so testers can probe for real vulnerabilities. The partnerships were partly motivated by growing concerns around Anthropic's Mythos model, which the company itself said is "far ahead" of competitors in cybersecurity capabilities.

This comes just days after the Pentagon signed separate deals with eight tech giants, including SpaceX and Nvidia, to deploy AI across classified military networks. Anthropic was notably absent from that list after the Pentagon designated it a supply chain risk earlier this year. For the first time, the US government has a formal pipeline to stress-test the most powerful AI models before the public ever touches them. This sets a precedent that could shape how every major democracy governs frontier AI going forward.
