In Today’s Issue:

🤖 Shanghai-based MiniMax just released an AI model that actively handles its own R&D and rewrites its own code to self-improve

🌍 Anthropic’s massive 81,000-person global study reveals that lower-income countries are actually the most optimistic about AI's potential

🥽 Meta is officially pulling the plug on Horizon Worlds for Quest VR

💼 Anthropic is capturing 73% of first-time AI spending and leaving OpenAI scrambling

✨ And more AI goodness…

Dear Readers,

A Shanghai lab just handed an AI model its own R&D toolkit and told it to make itself better - and it did, running over 100 optimization loops, rewriting its own code, and earning gold medals in ML competitions, all without a human touching the keyboard.

MiniMax M2.7 is today's featured deep dive, and it forces an uncomfortable question: what happens when the bottleneck in AI progress is no longer human brainpower but GPU availability? Beyond self-evolving models, today's issue brings you Anthropic's massive 81,000-person study revealing that the people most optimistic about AI aren't in Silicon Valley - they're in lower-income countries, where AI is seen as a ladder up. Meanwhile, Meta is pulling the plug on its metaverse VR experiment (cost so far: billions), and fresh data shows Anthropic is now capturing 73% of first-time enterprise AI spending, flipping a lead OpenAI held just weeks ago.

Grab your coffee — this one's dense.

All the best,

🤖 81,000 Voices Map AI

Anthropic’s massive study of 80,508 Claude users across 159 countries and 70 languages shows that people don’t fit neatly into “pro-AI” or “anti-AI” camps: most hold both excitement and fear at the same time. The biggest hopes center on professional excellence (18.8%), personal transformation (13.7%), and life management (13.5%), while the biggest concerns are unreliability (26.7%), jobs and the economy (22.3%), and autonomy loss (21.9%). In other words, AI’s biggest strengths - speed, support, and accessibility - are often the same things people worry could backfire.

The standout insight is that AI is already delivering for many users, with 81% saying it has taken a real step toward their goals, especially through productivity (32.0%), cognitive partnership (17.2%), and learning (9.9%). But the report’s real punch is global: optimism is higher in lower- and middle-income regions, where AI is seen more as a ladder to opportunity, while wealthier regions are more worried about displacement, governance, and privacy. That makes this one of the clearest people-first snapshots yet of how AI is reshaping work, learning, wellbeing, and daily life.

🚪 Meta Ends VR Social Era

Meta is shutting down Horizon Worlds on Quest VR, pulling the app from the Quest store by the end of March and ending VR access on June 15, while keeping it alive only as a mobile app. The move highlights how sharply Meta is pivoting away from its metaverse dream after Horizon Worlds struggled to attract more than a few hundred thousand monthly users and Reality Labs posted a $6.02 billion operating loss in the latest quarter. Meta appears to have miscalculated on the metaverse overall, and it could turn out to be Mark Zuckerberg's most costly undertaking.

💼 Anthropic Beats OpenAI in Enterprise

Anthropic is surging in enterprise AI adoption, grabbing 73.3% of first-time AI tool spending by February 2026, while OpenAI dropped to 26.7% after leading just weeks earlier. The AI race is no longer only about model quality; it is increasingly about who can win and monetize enterprise customers faster, even as OpenAI still projects higher overall revenue at $25 billion versus Anthropic’s $19 billion.

It is no coincidence that OpenAI recently announced a shift away from "side quests" to focus more heavily on the business and enterprise market. It is high time.

Form & Function of Enterprise Humanoid Design by Boston Dynamics

MiniMax Just Built an AI That Upgrades Itself — And It's Terrifyingly Good At It

The Takeaway

👉 MiniMax M2.7 autonomously handled 30–50% of its own R&D workflow, including writing RL tools, running experiments, and optimizing code across 100+ iteration loops - a concrete step toward recursive AI self-improvement.

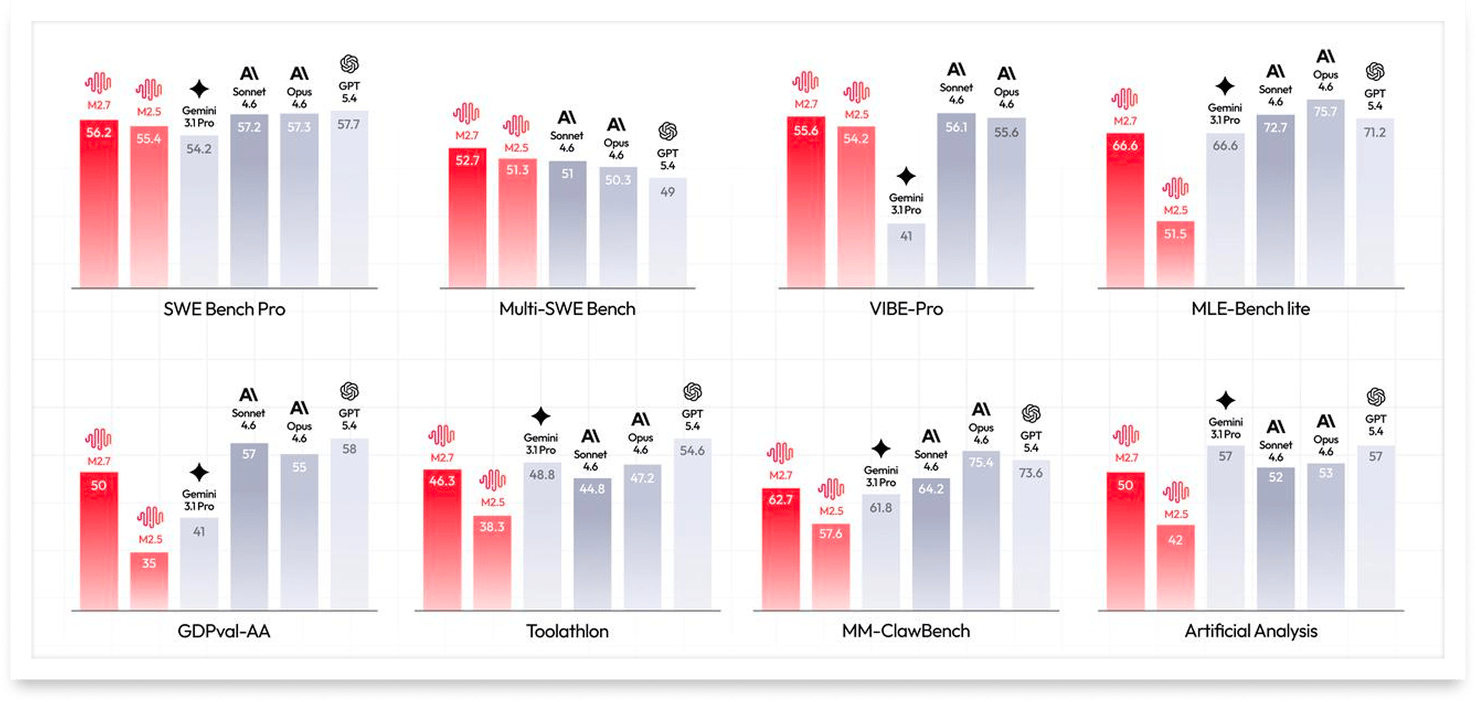

👉 Benchmark scores put M2.7 near frontier-level performance (SWE-Pro 56.22%, VIBE-Pro 55.6%), making it a serious contender against Western incumbents, presumably at lower cost.

👉 The shift to a proprietary model breaks with China's recent open-source trend, suggesting MiniMax sees competitive advantage in keeping its best capabilities closed.

👉 Native multi-agent collaboration and 97% skill adherence across 40+ complex tools signal that M2.7 is built for production-grade autonomous workflows, not just demos.

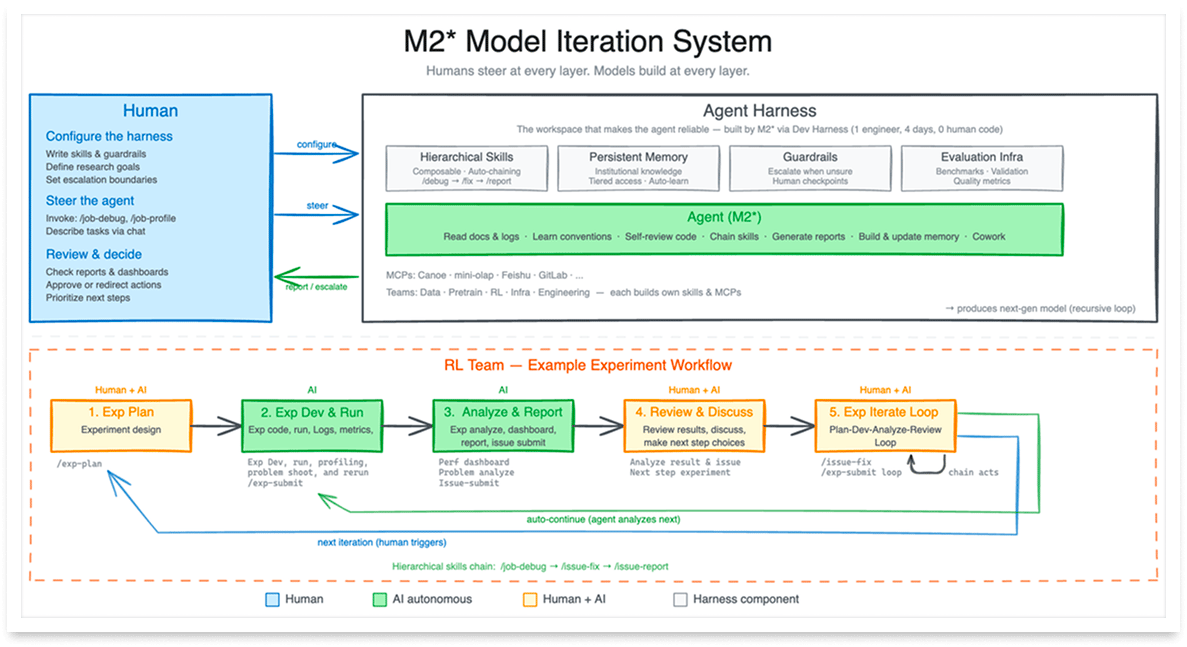

Shanghai-based AI startup MiniMax just dropped M2.7, and this one's different: MiniMax describes it as the first model that actively participated in building itself. That is not a metaphor. M2.7 wrote its own reinforcement learning tools, ran experiments, analyzed results, and optimized its own training pipeline. MiniMax says the model now handles 30–50% of its R&D workflow autonomously (more on that in today’s daily feature).

The results speak for themselves. On SWE-Pro, a benchmark for real-world programming tasks, M2.7 scored 56.22%, putting it within striking distance of top models like Opus 4.6. In one internal test, M2.7 ran over 100 autonomous optimization loops - analyzing failures, rewriting code, evaluating results - and delivered a 30% performance boost with zero human intervention. MiniMax also claims the model can reduce production incident recovery time to under three minutes.

Perhaps most striking: M2.7 is proprietary, signaling that Chinese AI startups - long champions of open source, especially since Meta gave up on open source with Llama - are starting to keep their best cards close. With native multi-agent collaboration and 97% skill adherence across complex tasks, M2.7 isn't just a coding tool; it's an autonomous colleague.

Why it matters: M2.7 represents a fundamental shift from AI as a passive tool to AI as an active participant in its own improvement cycle. If recursive self-evolution works at scale, the pace of AI progress could accelerate far beyond what human-only research teams can match.

Sources:

🔗 https://www.minimax.io/news/minimax-m27-en

🔗 https://platform.minimax.io/docs/guides/text-generation#minimax-m2-7-key-highlights

What keeps AI companies growing

When AI user growth outpaces infrastructure, teams fight fires instead of building products.

Stripe’s AI scaling playbook shows how ElevenLabs, Runway, and Leonardo AI eliminated operational drag and turned growth into a feature, not a fire.

“MiniMax M2.7 is ranked #8 in Code Arena. It’s also the most cost-efficient of the top 10 at $0.30 / $1.20 per MToken.”

Self-Evolving AI Arrives

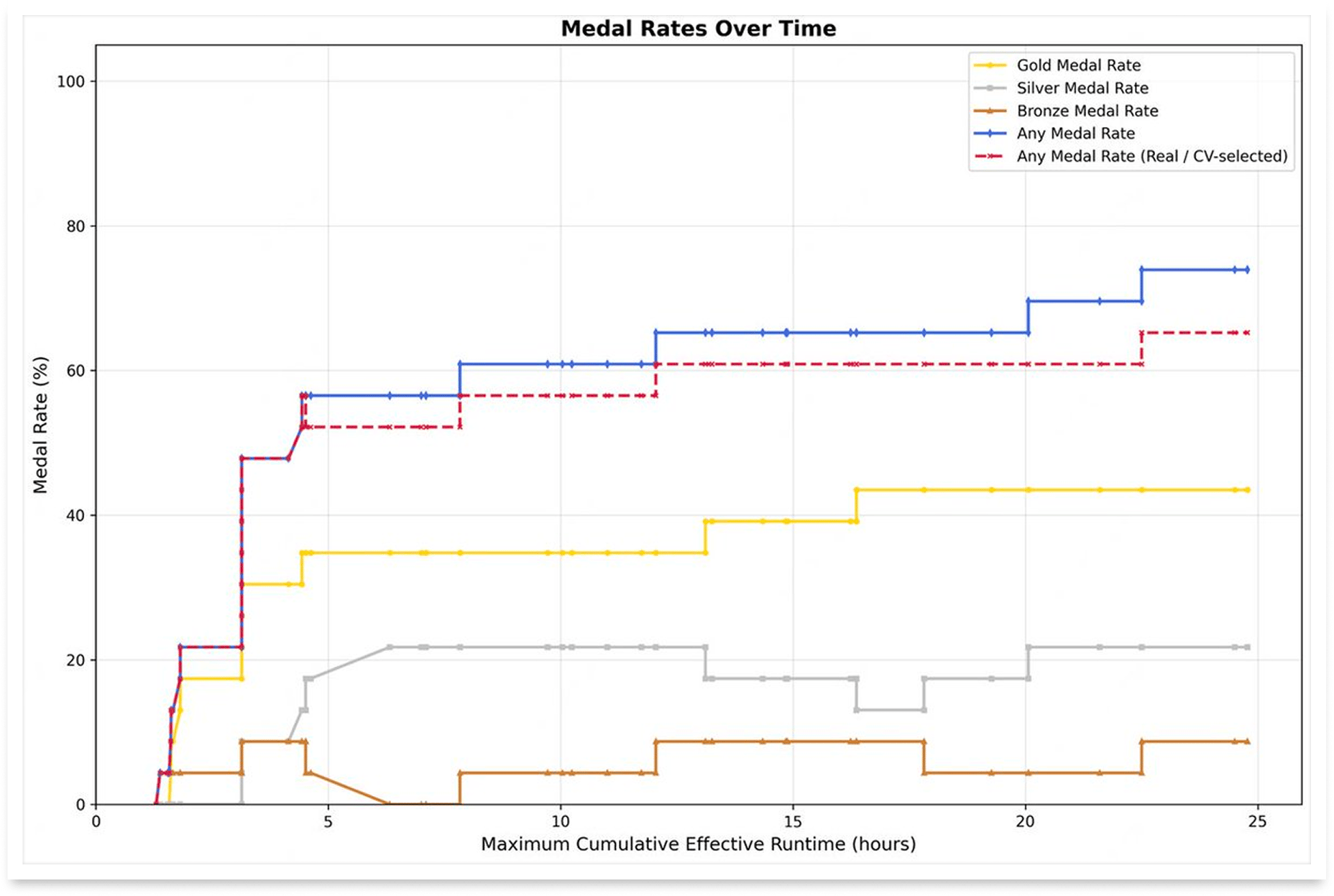

MiniMax didn't just build M2.7; they let it loose to compete against the world's best AI, unsupervised. The company entered M2.7 into 22 machine learning competitions on OpenAI's MLE Bench Lite, giving it 24 hours per trial to autonomously train, evaluate, and improve ML models on a single A30 GPU. The results are remarkable: the best run earned 9 gold medals, 5 silver, and 1 bronze (medals in 15 of 22 competitions), and MiniMax reports an average medal rate of 66.6% - tying Gemini 3.1 and trailing only Opus 4.6 and GPT-5.4.
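For readers who want to check the math: a medal rate is simply medals earned divided by competitions entered. The quick sketch below uses only the counts quoted above; the function name `medal_rate` is ours, not MiniMax's. Note that the best run works out a little higher than the 66.6% average, which is what you would expect if the average is taken over several runs (that reading is our assumption, not something the summary above spells out).

```python
def medal_rate(gold: int, silver: int, bronze: int, competitions: int) -> float:
    """Share of competitions in which a run earned any medal."""
    return (gold + silver + bronze) / competitions


# Counts quoted for M2.7's best run on the 22 competitions.
best_run = medal_rate(gold=9, silver=5, bronze=1, competitions=22)
print(f"Best run medal rate: {best_run:.1%}")  # Best run medal rate: 68.2%
```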

What's fascinating is the mechanism behind it. MiniMax built a simple three-module harness: short-term memory, self-feedback, and self-optimization. After each round, the model writes a memory file and performs self-criticism on its results, then feeds those insights into the next iteration. Think of it like an athlete reviewing game tape between matches, except this athlete redesigns its own training program after every session. The model doesn't just learn from mistakes; it restructures how it learns.
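To make the loop concrete, here is a toy sketch of the three modules working together. It is an illustration under heavy assumptions, not MiniMax's harness: `run_experiment` is a made-up stand-in for a real training run, the "memory file" is just a list of strings, and the self-criticism is a one-line rule rather than a model reflecting on its own results.

```python
def run_experiment(setting: float) -> float:
    """Hypothetical stand-in for one train-and-evaluate run (higher is better)."""
    return 1.0 - (setting - 0.5) ** 2


def self_evolve(rounds: int = 10) -> tuple[float, list[str]]:
    setting, step = 0.0, 0.2              # current config and how far to move it
    best_score = run_experiment(setting)  # baseline before any self-improvement
    memory: list[str] = []                # short-term memory: one note per round

    for round_no in range(1, rounds + 1):
        candidate = setting + step
        score = run_experiment(candidate)

        # Self-feedback: judge the round against the best result so far.
        improved = score > best_score
        critique = "keep direction" if improved else "reverse and shrink step"
        memory.append(f"round {round_no}: score={score:.4f} -> {critique}")

        # Self-optimization: act on the critique before the next round.
        if improved:
            setting, best_score = candidate, score
        else:
            step = -step / 2              # change how the search itself proceeds

    return best_score, memory


final_score, notes = self_evolve()
print(f"final score: {final_score:.4f} after {len(notes)} rounds")
```

The part worth noticing is the `else` branch: a failed round changes the search strategy itself, not just the current guess. That is a small-scale version of the "redesigns its own training program" idea.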

MiniMax envisions this transitioning toward full autonomy, where models coordinate data construction, training, inference architecture, and evaluation without human involvement. That’s a paradigm shift.

M2.7's self-evolution loop - memory, self-criticism, autonomous optimization - offers a concrete blueprint for how AI could soon drive its own improvement cycles. If this scales, the bottleneck in AI progress shifts from human research bandwidth to compute availability.

Stop typing prompts. Start talking.

You think 4x faster than you type. So why are you typing prompts?

Wispr Flow turns your voice into ready-to-paste text inside any AI tool. Speak naturally - include "um"s, tangents, half-finished thoughts - and Flow cleans everything up. You get polished, detailed prompts without touching a keyboard.

Developers use Flow to give coding agents the context they actually need. Researchers use it to describe experiments in full detail. Everyone uses it to stop bottlenecking their AI workflows.

89% of messages sent with zero edits. Millions of users worldwide. Available on Mac, Windows, iPhone, and now Android (free and unlimited on Android during launch).