In Today’s Issue:

💻 OpenAI unveils a groundbreaking model, GPT-5.4

🚨 The U.S. government officially designates Anthropic as a national security risk

💼 Anthropic's new labor market report reveals that AI's real-world impact on jobs is far more gradual than the hype suggests

⚖️ New York lawmakers advance a bill to ban AI chatbots from giving legal and medical advice

✨ And more AI goodness…

Dear Readers,

Today, the U.S. government slapped Anthropic with a label it usually reserves for foreign adversaries - "supply chain risk to national security" - all because the company refused to let the Pentagon use Claude without restrictions on autonomous weapons and mass surveillance.

That alone would make this a landmark day in AI, but there's more: OpenAI just launched GPT-5.4, a model that doesn't just answer your questions but actually operates your computer, hitting 75% on OSWorld and outperforming human office workers in 83% of professional tasks.

Meanwhile, New York lawmakers are pushing to ban chatbots from giving legal and medical advice, Anthropic's new labor market report reveals that AI's real-world job impact is far more gradual than the hype suggests, and OpenAI is building a voice model that doesn't wait for you to stop talking. Grab your coffee - today's issue covers everything from the first AI company to defy the Pentagon to the model that might just replace your coworker.

All the best,

Superintelligence held an exclusive interview with Keith Peiris, CEO of Lightfield. Check it out 😊

🚀 OpenAI Advances Real-Time Voice AI

OpenAI is developing a new “bidirectional” (BiDi) audio model designed to make AI voice assistants far more natural. Unlike today’s turn-based systems that wait for users to finish speaking, BiDi continuously processes audio and can adapt responses in real time when interrupted, enabling smoother, human-like conversations for tasks like customer support or smart assistants.

The prototype still has issues - after several minutes it can glitch or produce abnormal voices - so the launch may slip from Q1 to Q2 2026, but if successful it could significantly narrow the gap between voice and text AI performance and accelerate global adoption of voice-first AI devices.

🤖 AI Work Impact Still Emerging

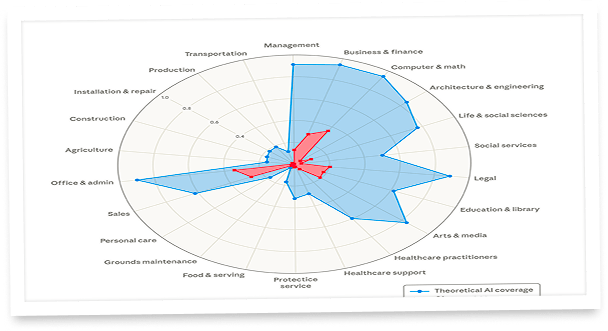

Anthropic's new “Labor market impacts of AI” report introduces an “observed exposure” measure that tracks not just what AI could do, but how it is actually being used at work today. It finds that real-world AI adoption is still far below its technical potential, even in jobs like programming, customer support, and data entry.

Highly exposed occupations are expected to grow a bit more slowly through 2034, but the data does not show a clear unemployment spike after 2022. The most notable signal is at the entry level, where young workers in highly exposed roles may be seeing weaker hiring outcomes. Overall, the message is cautious but not alarmist: AI is reshaping the labor market gradually, and the biggest near-term risk may be reduced access to first jobs.

⚖️ New York Moves Against Chatbots

New York lawmakers are weighing a bill that would ban AI chatbots from giving legal or medical advice when that crosses professional licensing lines, especially if the bots present themselves like doctors or lawyers. The proposal also boosts accountability in a big way: companies would need to clearly disclose that users are talking to AI, and people could sue for damages and attorney’s fees if the rules are broken.

This is a strong signal that AI regulation is shifting from soft transparency rules to real enforcement, with a 6–0 committee vote, a 90-day implementation window after signing, and a private right of action that could raise the stakes for every chatbot platform operating in New York. In our view, though, such a bill is shortsighted: AI is continuously improving, and its benefits to society are growing with it.

GPT-5.4: Your New AI Coworker

The Takeaway

👉 GPT-5.4 is OpenAI's first general-purpose model with native computer-use capabilities, achieving 75% on OSWorld - above the 72.4% human baseline - signaling that AI agents can now reliably operate software autonomously.

👉 Token efficiency improved by up to 47%, alongside a 1M-token context window and a new Tool Search feature, making AI-powered workflows dramatically faster and cheaper for developers and enterprises.

👉 The model outperformed professionals in 83% of knowledge work tasks across 44 occupations on OpenAI's GDPval benchmark, with 33% fewer factual errors - raising serious questions about near-term white-collar job disruption.

👉 With Anthropic's Cowork, Google's Gemini updates, and now GPT-5.4 all launching within days, the competition to dominate enterprise AI workflows has reached its most intense phase yet - expect rapid iteration and aggressive pricing moves.

OpenAI just dropped a model that doesn't just think - it works. GPT-5.4, released today, is the company's most capable AI system for professional tasks, and it's setting a new standard for what AI agents can actually do in the real world. GPT-5.4 is the first general-purpose OpenAI model with native computer-use capabilities. That means it can operate software, navigate browsers, fill out spreadsheets, and complete multi-step workflows across applications - all on its own. On the OSWorld benchmark, which tests desktop navigation, it hit a 75% success rate, surpassing human performance at 72.4%. Let that sink in.

But it's not just about raw capability. GPT-5.4 uses dramatically fewer tokens to solve problems - up to 47% fewer in tool-heavy workflows - making it faster and cheaper to run. It supports up to 1 million tokens of context, handles spreadsheets and presentations with near-expert quality, and produces 33% fewer factual errors than its predecessor. For developers, the new Tool Search feature lets agents dynamically discover the right tools from massive ecosystems instead of loading everything upfront.

This is a pivotal moment: the gap between AI assistance and AI agency just got razor-thin. If GPT-5.4 can already outperform office workers in 83% of professional tasks, what does the next iteration look like?

Why it matters: GPT-5.4 marks the first time a mainstream AI model has matched or beaten the human baseline at operating a computer on a benchmark like OSWorld, turning the theoretical promise of AI agents into a practical reality. This release accelerates the race between OpenAI, Anthropic, and Google toward autonomous AI systems that don't just answer questions - they complete entire workflows.

Sources:

🔗 https://openai.com/index/introducing-gpt-5-4/


Anthropic Said No. Now It’s War.

The U.S. government just treated one of America’s most important AI companies the way it normally treats Huawei. Anthropic has officially been designated a “supply chain risk to national security” by the Department of War - making it the first American company ever to receive this label. CEO Dario Amodei confirmed the company will challenge the designation in court.

The backstory is dramatic. Anthropic refused to give the Pentagon unrestricted access to Claude for “all lawful purposes,” insisting on two red lines: no fully autonomous weapons and no mass domestic surveillance of Americans. The Pentagon wanted no limits. When negotiations collapsed, Defense Secretary Pete Hegseth called Anthropic’s position “a master class in arrogance and betrayal.” Hours later, OpenAI swooped in to sign its own deal - sparking protests at its San Francisco headquarters, a “QuitGPT” boycott movement, and an open letter from over 900 Google and OpenAI employees backing Anthropic’s stance.

Amodei’s latest statement strikes a conciliatory tone. He argues the designation is legally narrow - applying only to Claude’s use in direct Pentagon contracts, not to all business relationships. Microsoft’s lawyers agree: Anthropic products remain available to their customers outside of defense work. Meanwhile, 30 former military and intelligence officials have written to Congress warning of a “dangerous precedent.”

Microsoft, one of Anthropic’s biggest partners, agreed. A spokesperson told CNN: “Our lawyers have studied the designation and have concluded that Anthropic products, including Claude, can remain available to our customers – other than the Department of War – through platforms such as M365, GitHub, and Microsoft’s AI Foundry and that we can continue to work with Anthropic on non-defense related projects.”

— CNN

This is bigger than one contract dispute. It’s the first real test of whether an AI company can draw ethical lines with the world’s most powerful military - and survive. If Anthropic wins in court, it could reshape how every tech company negotiates with the government. If it loses, the message is clear: comply or be crushed.
