In Today’s Issue:

🧠 OpenAI drops GPT-5.4 mini and nano

⚡ Mistral fires back with a highly efficient 119B-parameter hybrid model

🧪 Scientists in Edinburgh successfully engineered bacteria to transform everyday plastic waste into a life-saving Parkinson's medication

🐕 Robot dogs are now patrolling massive AI data centers

✨ And more AI goodness…

Dear Readers,

OpenAI just dropped GPT-5.4 mini and nano, and the message is clear: near-flagship intelligence no longer requires flagship prices, or flagship patience. While that reshapes how developers build agentic systems from the ground up, today's issue pulls you across the full spectrum of what's moving in tech right now.

Mistral fires back with a 119B-parameter hybrid model that cuts completion time by 40%. SK Group's chairman warns the memory chip crunch won't ease until 2030, squeezing everything from laptops to data centers. Scientists in Edinburgh are turning PET plastic into Parkinson's medication using engineered bacteria. Yes, your old water bottle might one day save lives. Robot dogs now patrol the sprawling campuses of AI data centers, and Palmer Luckey sits down to talk AI, nukes, and the war in Iran.

Grab your coffee and scroll - this one's packed.

And of course, we'll still be on the ground at GTC and will keep you updated.

All the best,

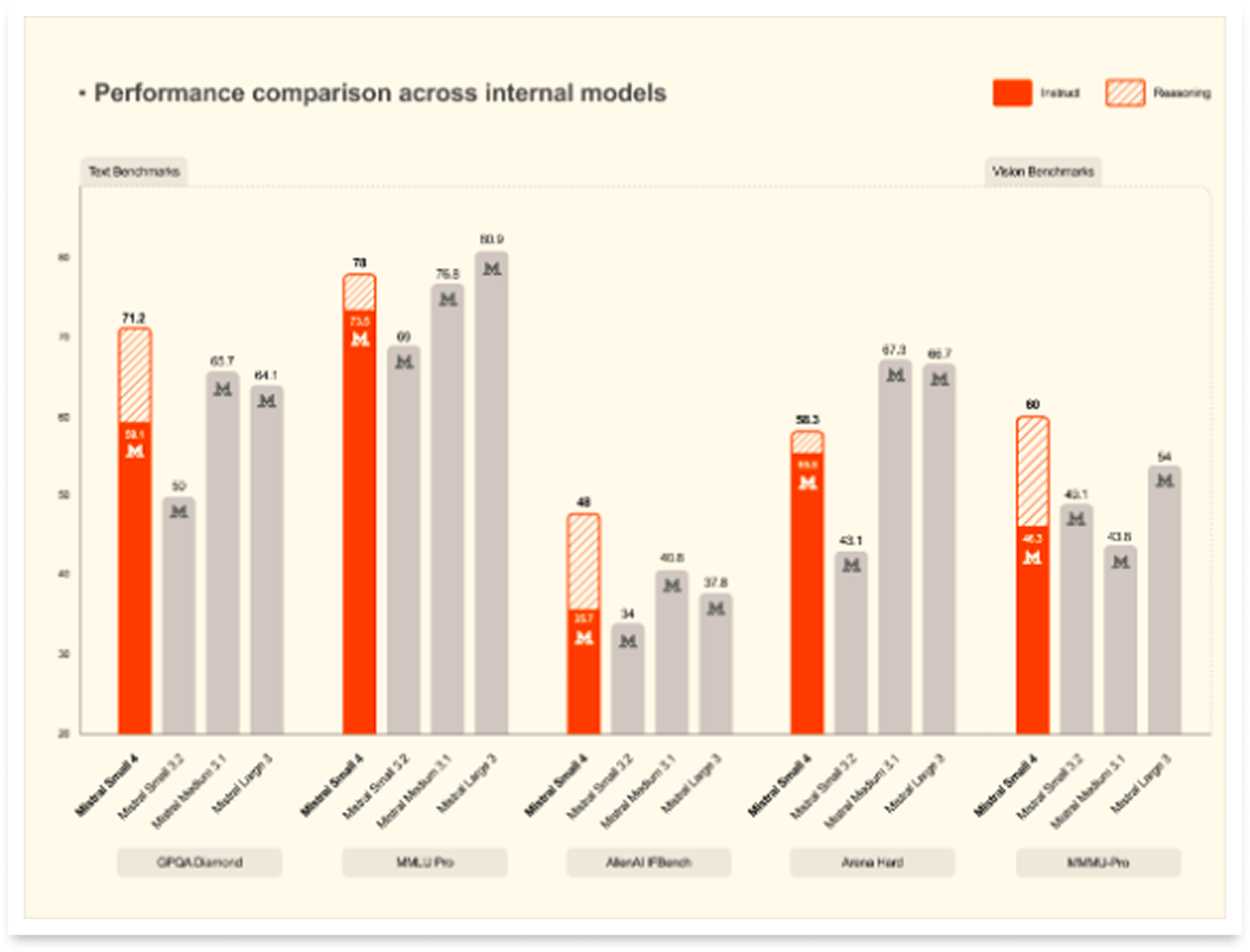

⚡ Mistral Combines Multiple AI Roles

Mistral Small 4 is a 119B-parameter hybrid MoE model with 128 experts, 4 active experts per token, a 256k context window, multimodal text+image input, and switchable reasoning modes for both fast replies and deeper problem-solving. The big win is efficiency: Mistral says it cuts end-to-end completion time by 40%, serves 3x more requests per second than Mistral Small 3 in throughput-optimized setups, and stays competitive with larger reasoning models while using much shorter outputs. That adds up to lower latency, lower cost, and a better user experience.
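For readers who want to see what "128 experts, 4 active per token" means in practice, here is a minimal sketch of generic top-k expert routing. It is not Mistral's implementation, which the announcement does not describe: the `route_token` function and the random router scores are assumptions made purely for illustration.

```python
import math
import random

NUM_EXPERTS = 128  # expert count quoted in the article
TOP_K = 4          # active experts per token, quoted in the article


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k highest-scoring experts for one token.

    Returns (expert_index, weight) pairs whose weights sum to 1.
    Generic top-k gating sketch, not Mistral's actual router.
    """
    # Indices of the top_k largest router scores.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i],
                 reverse=True)[:top_k]
    # Softmax over the selected scores only (shifted for stability).
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


random.seed(0)
# Hypothetical router scores for a single token.
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
chosen = route_token(logits)
active_fraction = TOP_K / NUM_EXPERTS

print(chosen)
print(f"share of experts used per token: {active_fraction:.3f}")
```

Only 4 of the 128 experts run for any given token, which is where the efficiency of the design comes from. The share of total parameters that is active will differ from this ratio, since layers such as attention are typically shared across all tokens.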

It is positioned as a strong all-rounder for chat assistants, coding, agents, document understanding, and research workflows, with Apache 2.0 licensing making it especially attractive for enterprises and developers who want open-weight flexibility. Deployment support is centered on vLLM and Transformers, with extras like speculative decoding and NVFP4 quantization pushing efficiency even further.

🧪 Plastic Powers Parkinson’s Drug

Scientists at the University of Edinburgh engineered E. coli to turn PET plastic waste into L-DOPA, a key Parkinson’s drug, giving old packaging a surprisingly high-value second life. With roughly 50 million tons of PET produced globally each year, this lab-scale breakthrough points to a future where biotech could slash waste, reduce fossil-based pharmaceutical production, and unlock new ways to make medicines, dyes, flavors, and more.

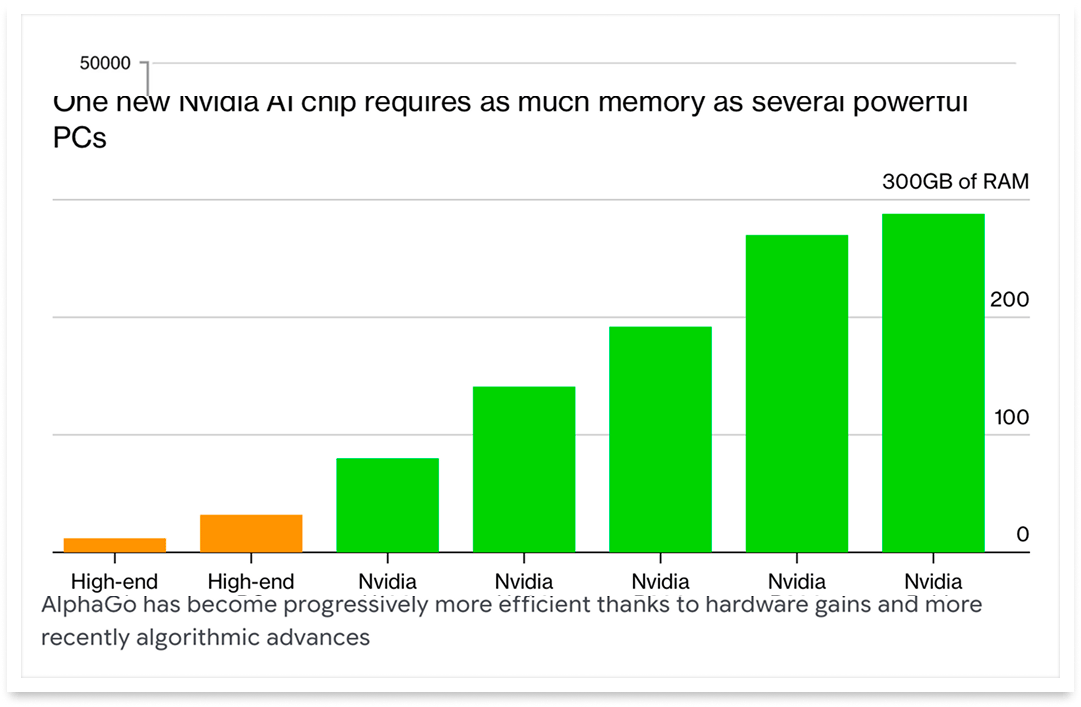

⚙️ Memory Shortage Extends Through 2030

SK Group Chairman Chey Tae-won said the global memory-chip shortage could last another four to five years, with wafer supply running more than 20% behind demand. SK hynix, Samsung, and Micron have shifted more production toward AI-focused memory, tightening supply of standard chips and raising pressure on prices, profits, and product plans across laptops, smartphones, cars, and data centers.

Anduril's Palmer Luckey on AI, nuclear weapons and the war in Iran

The Mini Model That Rivals Giants

The Takeaway

👉 GPT-5.4 mini delivers near-flagship coding and reasoning performance at roughly one-third the cost, with over 2x the speed of its predecessor GPT-5 mini.

👉 GPT-5.4 nano targets developers running millions of daily inferences - classification, extraction, ranking - at just $0.20 per million input tokens.

👉 OpenAI is actively pushing multi-model architectures: large models plan and coordinate, while mini and nano subagents execute tasks in parallel at scale.

👉 Early adopters like GitHub Copilot, Notion, and Hebbia confirm mini matches or outperforms competing models on output quality and citation recall at significantly lower cost.

Bigger isn't always better, and OpenAI just proved it. The company released GPT-5.4 mini and nano today, two compact models that deliver near-flagship intelligence at a fraction of the cost and more than twice the speed. GPT-5.4 mini scores 54.4% on SWE-Bench Pro, nearly matching the full GPT-5.4's 57.7%, while running dramatically faster. The nano variant goes even leaner at just $0.20 per million input tokens, targeting high-volume tasks like classification, extraction, and coding subagents.
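To put the nano price in perspective, here is a quick back-of-the-envelope sketch. Only the $0.20 per million input tokens comes from the announcement. The workload (5 million requests a day at 300 input tokens each) is a made-up example, and output-token costs are left out because this issue does not quote them.

```python
# Price quoted in the article for GPT-5.4 nano input tokens.
NANO_INPUT_USD_PER_MILLION_TOKENS = 0.20


def daily_input_cost(requests_per_day, avg_input_tokens,
                     usd_per_million=NANO_INPUT_USD_PER_MILLION_TOKENS):
    """Estimate daily spend on input tokens only (output tokens excluded)."""
    total_tokens = requests_per_day * avg_input_tokens
    return total_tokens / 1_000_000 * usd_per_million


# Hypothetical workload: 5M classification calls/day, 300 input tokens each.
cost = daily_input_cost(requests_per_day=5_000_000, avg_input_tokens=300)
print(f"Estimated input cost: ${cost:,.2f} per day")
```

Under those assumptions, 1.5 billion input tokens a day works out to about $300 in input charges, which is why this tier is pitched at high-volume classification and extraction.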

The real shift here is architectural: OpenAI is pushing developers toward multi-model systems where a powerful GPT-5.4 handles planning and orchestration, while mini and nano workers execute smaller tasks in parallel. GitHub Copilot already ships with mini built in. Notion reports the smaller models now handle agentic tool calling reliably, a capability once reserved for premium models.
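The planner-plus-workers pattern can be sketched in a few lines. This is a toy illustration, not OpenAI's API: `plan_with_large_model` and `run_small_worker` are hypothetical stand-ins that return canned strings where real model calls would go.

```python
import asyncio


async def plan_with_large_model(goal: str) -> list[str]:
    """Hypothetical stand-in for a planning call to a large model."""
    return [f"{goal}: step {i}" for i in range(1, 4)]


async def run_small_worker(subtask: str) -> str:
    """Hypothetical stand-in for a call to a small, cheap model."""
    await asyncio.sleep(0)  # placeholder for network latency
    return f"done({subtask})"


async def orchestrate(goal: str) -> list[str]:
    # One planning call splits the goal into subtasks.
    subtasks = await plan_with_large_model(goal)
    # Fan out: workers run concurrently; gather keeps input order.
    return await asyncio.gather(*(run_small_worker(s) for s in subtasks))


results = asyncio.run(orchestrate("triage bug reports"))
print(results)
```

The design choice worth noting is that the expensive model is called once per goal while the cheap calls scale with the number of subtasks, so total latency is driven by the slowest worker rather than the sum of all of them.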

With a 400K context window and pricing that's roughly one-third of the flagship, GPT-5.4 mini positions itself as the workhorse behind the next wave of AI-native products.

Why it matters: Near-flagship AI performance is becoming commoditized, pushing the competitive frontier from raw intelligence toward speed, cost efficiency, and system design. But the benchmarks are self-reported and API costs add up across multiple model calls, so developers should test thoroughly before committing.

Sources:

🔗 https://openai.com/index/introducing-gpt-5-4-mini-and-nano/

Protect online privacy from the very first click

Your digital footprint starts before you can even walk.

In today’s data economy, “free” inboxes from Google and Microsoft, like Gmail and Outlook, are funded by data collection. Emails can be analyzed to personalize ads, train algorithms, and build long-term behavioral profiles to sell to third-party data brokers.

From family updates, school registrations, and medical reports to financial service emails, social media accounts, and job applications, a digital identity can take shape long before someone understands what privacy means.

Privacy shouldn’t begin when you’re old enough to manage your settings. It should be the default from the start.

Proton Mail takes a different approach: no ads, no tracking, no data profiling — just private communication by default. Because the next generation deserves technology that protects them, not profiles them.

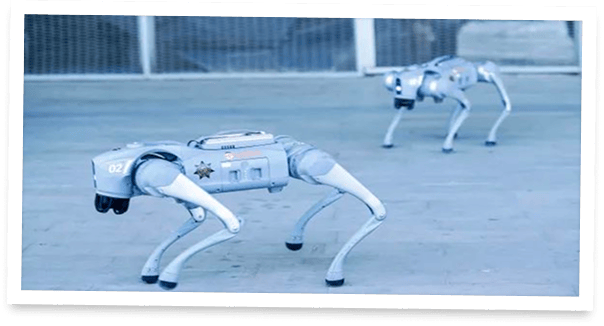

Robot Dogs Now Patrol AI Fortresses

The infrastructure powering AI just got its own four-legged security detail. Boston Dynamics and Ghost Robotics are deploying robot dogs (quadruped machines priced between $165,000 and $300,000) to patrol the massive data centers fueling the AI boom. With nearly $700 billion flowing into AI infrastructure and facilities stretching across dozens of acres, human guards simply can't cover every corner.

Enter Spot and Vision 60: tireless machines that detect thermal anomalies, spot leaks, map construction sites, and provide 24/7 surveillance without ever calling in sick. Novva Data Centers in Utah already runs a fleet of Spots across its 1.5-million-square-foot campus. Ghost Robotics points to roughly 5,000 existing U.S. data centers, and up to a thousand more under construction, as a massive addressable market.

The economics are compelling: operators typically recoup the investment within 18 months. Neither company frames this as replacing humans. Instead, robot dogs augment security teams, acting as mobile sensors where fixed cameras can't reach.
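As a rough sanity check on that payback claim, here is what an 18-month break-even would imply per month. This sketch assumes the payback applies to the purchase price alone and ignores maintenance, software, and staffing, none of which are quoted here.

```python
PAYBACK_MONTHS = 18                   # payback period quoted in the article
PRICE_RANGE_USD = (165_000, 300_000)  # price range quoted in the article


def implied_monthly_savings(price_usd, payback_months=PAYBACK_MONTHS):
    """Monthly savings needed to recoup the purchase price in time."""
    return price_usd / payback_months


low, high = (implied_monthly_savings(p) for p in PRICE_RANGE_USD)
print(f"Implied savings: ${low:,.0f} to ${high:,.0f} per robot per month")
```

That puts the break-even bar at roughly $9,200 to $16,700 in monthly savings per robot, under the stated assumptions.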

The explosive growth of AI data centers is creating an entirely new commercial robotics market at scale.
