In Today’s Issue:

🧠 GPT-5.4 Pro solves a 60-year-old math problem

🤖 Google DeepMind gives robots true spatial reasoning

⚛️ NVIDIA launches an open-source AI stack

🔬 Anthropic deploys a team of nine parallel AI agents

✨ And more AI goodness…

Dear Readers,

A Fields Medalist just admitted that an AI found a mathematical connection humans had overlooked for decades, and that single sentence should stop you in your tracks. GPT-5.4 Pro cracked a 60-year-old number theory problem in 80 minutes, not by brute force, but by discovering a hidden bridge between two fields that the world's best mathematicians never thought to connect.

Today we unpack what Terence Tao called a potential "Move 37" moment for mathematics, and that's just the opener. Google DeepMind gave robots the ability to actually reason about what they see, and Boston Dynamics is already deploying it in real facilities where Spot reads industrial gauges at 93% accuracy, up from 23% in the previous generation. NVIDIA is quietly making itself indispensable to quantum computing's future with an entire open-source AI stack for fault-tolerant qubits. Anthropic let nine AI agents loose on an alignment problem, and they produced research ideas so unconventional the team called them "alien."

Meanwhile, the darker side of this revolution is impossible to ignore: Molotov cocktails, gunfire, and a kill list targeting tech executives reveal just how volatile the public mood around AI has become. Grab your coffee. This one is packed.

All the best,

⚛️ NVIDIA Launches Open Quantum AI

NVIDIA Ising debuts as an open family of AI models aimed at one of quantum computing’s hardest problems: making noisy qubits reliable enough for real-world use. The launch includes Ising Calibration, a 35B vision-language model for automating QPU tuning, and Ising Decoding, lightweight 3D CNN models that speed up quantum error correction while improving logical error rates.

The biggest takeaway is that NVIDIA is positioning AI as the bridge to fault-tolerant quantum systems at scale, with open weights, training frameworks, benchmarks, and deployment recipes so teams can adapt the models to their own hardware. The scope is broader than a typical model release: the company is shipping not just models but an entire workflow stack designed to push quantum systems toward millions of qubits and practical quantum-GPU supercomputing. In doing so, NVIDIA is making itself indispensable to the next computing revolution, the one quantum hardware is expected to drive.

🔥 Violence Signals Rising Anti-AI Backlash

Attacks on Sam Altman, including a Molotov cocktail attack and gunfire within days of each other, highlight a disturbing escalation in anti-AI sentiment, with authorities uncovering a “kill list” of tech executives tied to extremist fears about AI-driven extinction. Experts warn this reflects deeper tensions: job displacement, resource strain from data centers, and existential risks, all fueling public anxiety now affecting over 50% of people globally. The situation mirrors past industrial upheavals, suggesting that major societal shifts and policy responses may be inevitable as companies like OpenAI and Anthropic push forward.

That's precisely why OpenAI recently published a 13-page policy paper outlining proposals for a new social contract that includes a four-day workweek (32 hours per week), a robot tax, and AI dividends. There is considerable and justified concern about potential social upheaval.

🤖 AI Discovers Alien Research Ideas

Anthropic built a team of nine parallel AI agents that autonomously propose ideas, run experiments, and iterate on an open alignment problem: training a strong model using only a weaker model's supervision. Where two human researchers spent seven days reaching a performance gap recovered (PGR) of 0.23, the agents hit 0.97 in five days at a cost of roughly $18,000. More striking than the raw performance, however, is that the agents generated "alien" research ideas, approaches humans likely wouldn't have considered, while keeping them grounded in known principles like training dynamics, probabilistic modeling, and consistency checks. The results suggest a shift already happening in alignment work: AI-augmented discovery, with human oversight focused on validation and safety.
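For readers wondering what a PGR of 0.23 versus 0.97 actually measures, here is a minimal sketch. It assumes the usual definition from the weak-to-strong generalization literature: the share of the gap between the weak supervisor and the strong model's ceiling that the weakly supervised strong model closes. The article itself does not spell out the formula, the function name is made up for illustration, and the accuracy numbers are hypothetical values picked only to reproduce the two reported scores.

```python
def performance_gap_recovered(weak: float, weak_to_strong: float, strong_ceiling: float) -> float:
    """Fraction of the weak-to-ceiling gap closed by the weakly supervised strong model.

    weak:           score of the weak supervisor on its own
    weak_to_strong: score of the strong model trained only on the weak model's labels
    strong_ceiling: score of the strong model trained on ground-truth labels
    """
    gap = strong_ceiling - weak
    if gap <= 0:
        raise ValueError("strong ceiling must exceed the weak baseline")
    return (weak_to_strong - weak) / gap


# Hypothetical accuracies, not figures from the Anthropic experiment.
weak_score, ceiling_score = 0.60, 0.80

human_pgr = performance_gap_recovered(weak_score, 0.646, ceiling_score)
agent_pgr = performance_gap_recovered(weak_score, 0.794, ceiling_score)

print(f"human-level run: PGR = {human_pgr:.2f}")
print(f"agent-level run: PGR = {agent_pgr:.2f}")
```

Under this definition, a PGR of 0 means the strong model did no better than its weak teacher, and a PGR of 1 means it fully matched what it could have reached with perfect labels. That is why a jump from 0.23 to 0.97 is so notable.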

How AI Will Change Quantum Computing: an NVIDIA AI Podcast episode with Prof. Nic Harrigan. I had the great privilege of seeing him live at GTC. A brilliant scientist!

GPT-5.4 Pro just solved a 60-year-old math problem that stumped the world's best number theorists (more on that in today’s Daily feature). But the real story isn't the solution itself. Terence Tao, Fields Medalist and one of the greatest living mathematicians, says the AI didn't just brute-force an answer. It found a fundamentally new approach, one that humans had overlooked for decades because of deeply ingrained mathematical conventions. Think of it like AI discovering a new opening in chess that grandmasters never considered. Tao believes this could reshape an entire branch of number theory.

Robots That Actually Understand What They See

The Takeaway

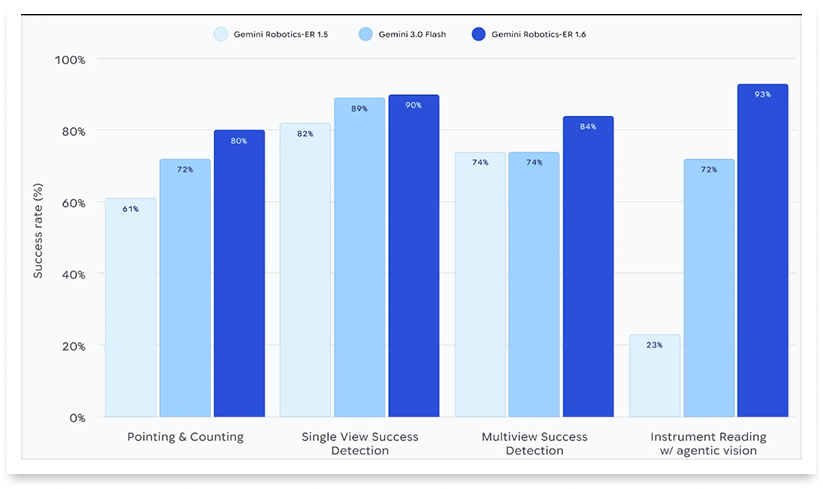

👉 Google DeepMind's Gemini Robotics-ER 1.6 enables robots to reason spatially, detect task completion across multiple camera views, and read industrial instruments with up to 93% accuracy, up from 23% in the previous generation.

👉 Boston Dynamics has already deployed the model in its commercial Orbit AIVI-Learning product, allowing Spot robots to autonomously inspect facilities and read gauges, thermometers, and sight glasses.

👉 The model introduces "agentic vision," combining visual reasoning with code execution so robots can zoom into fine details, estimate proportions, and interpret units without human guidance.

👉 Google positions ER 1.6 as its safest robotics model to date, with improved handling of physical constraints like weight limits and material restrictions, plus better hazard identification in tests based on real injury scenarios.

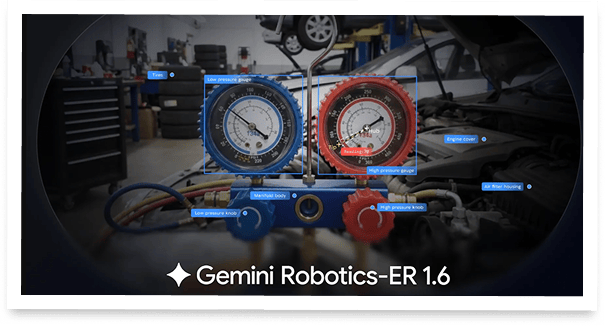

Google DeepMind just gave robots a serious brain upgrade. The company released Gemini Robotics-ER 1.6, a new reasoning model that lets robots understand their physical surroundings with remarkable precision. Think of it as giving a robot not just eyes, but the ability to actually interpret what it sees, from counting tools on a workbench to reading the exact value on an industrial pressure gauge.

The model builds on three core pillars: spatial pointing (precise object detection and counting), multi-view success detection (understanding when a task is actually done using multiple camera feeds), and a brand new skill, instrument reading. That last one came straight from the partnership with Boston Dynamics, whose Spot robot now autonomously patrols industrial facilities and reads gauges with a 93% success rate when agentic vision is enabled. For context, the previous model managed just 23%.

Boston Dynamics has already integrated the model into its Orbit AIVI-Learning product, which went live for all AIVI-Learning customers on April 8. Google also calls this its safest robotics model yet, with improved compliance on physical safety constraints.

Why it matters: Robots that can reason about their environment, not just move through it, represent a fundamental shift toward true autonomy. Google's partnership with Boston Dynamics shows this technology is already being deployed in real industrial settings, not just research papers. And this is bigger than most people anticipate.

Sources:

🔗 https://deepmind.google/blog/gemini-robotics-er-1-6/

The End of Stitched-Together Web Agents

Most AI agent failures don't start with bad code; they start with bad infrastructure. I’ve been tracking this space for a while, and the biggest headache is always the "Frankenstein" stack: one API for search, another for fetching, and a third for managed browsers. They don’t talk to each other, the sessions break, and my agent ends up hallucinating because the context is fragmented.

TinyFish just launched a unified platform that actually solves this. I’m moving away from stitched tools because they give me the complete infrastructure all under one API key.

Here’s why I’m leaning into it:

Context flows forward: My search results aren't just dead URLs; they carry enough context for the next step to make the right decision.

Sessions stay consistent: I don't have to worry about shifting IPs or fingerprints mid-workflow. On the site, it looks like one coordinated client.

The CLI + Skills workflow: This is the real unlock. I just drop their markdown instruction file into my agent’s directory, and suddenly Claude Code or Cursor knows how to use the entire live web autonomously.

I’m seeing 2x higher task completion on complex tasks because the system optimizes for whether the job actually finished, not just individual pings. It’s fast, too: sub-250ms browser cold starts and 488ms search latency.

If you’re building with coding agents and they’re still blind to the live web, this is the fix.

GPT-5.4 Solves What Humans Couldn't

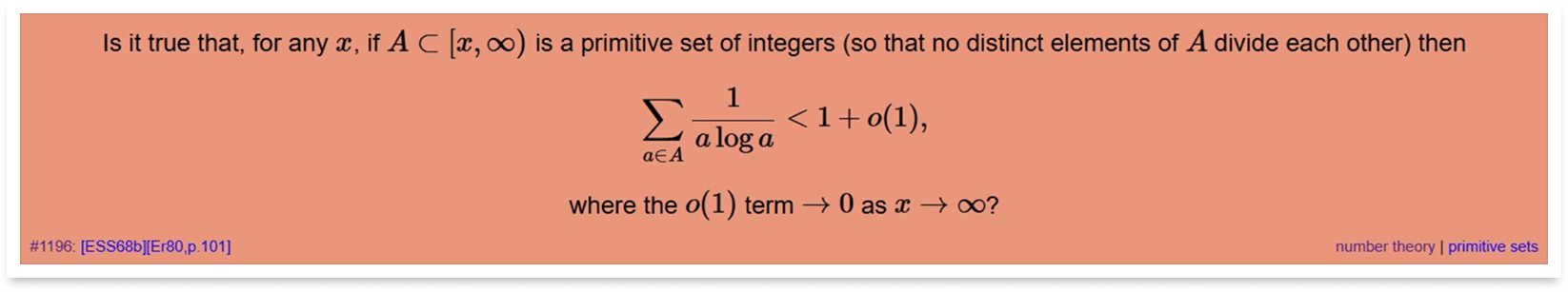

GPT-5.4 Pro just solved a problem that haunted number theorists for decades, and it did it in 80 minutes flat. Erdős Problem #1196, a deep conjecture about primitive sets that mathematician Jared Lichtman spent seven years thinking about, fell to OpenAI's latest reasoning model in a single shot. But the real bombshell comes from Fields Medalist Terence Tao. According to Tao, the AI didn't just grind through known techniques. It uncovered a novel connection between two mathematical disciplines, the anatomy of integers and the theory of Markov processes, that human mathematicians had overlooked despite scattered hints in the existing literature. Think of it like discovering a hidden corridor between two rooms everyone assumed were separate.

Tao believes this insight could reshape an entire branch of number theory, calling it a meaningful contribution that goes well beyond the solution itself. Lichtman confirmed this was no obscure, forgotten problem: he had consulted multiple experts over the years and discussed it regularly with Fields Medalist James Maynard. The proof is now undergoing formal verification, and cross-model replication by Opus 4.6 and Gemini 3.1 Pro suggests this level of mathematical reasoning is becoming a broader capability across frontier models.

AI is no longer just finding answers faster; it is finding approaches that humans missed entirely. When a Fields Medalist says the machine revealed a fundamentally new mathematical connection, we have crossed a threshold in what AI means for scientific discovery. This could be the famous "Move 37" moment for AI in mathematics, the point where the machine plays a move no human would have considered, and it turns out to be brilliant.

Private Credit on Your Terms

Percent's secondary marketplace lets accredited investors buy into eligible deals or indicate interest in selling existing positions. Secondary market access in private credit is still rare. 16.72% current weighted average coupon. Terms start at 3 months. New investors can receive up to $500 credit.

Alternative investments are speculative. Secondary liquidity not guaranteed. Past performance not indicative. Terms apply.