Dear Readers,

Google just democratized high-end AI performance, and the price tag is almost laughable. Its new Gemini 3.1 Flash-Lite responds 2.5x faster than its predecessor at just $0.25 per million input tokens, proving that cutting-edge intelligence no longer demands a cutting-edge budget.

But that's just the opener today: Apple is fusing two 3nm dies into a single chip with the M5 Pro and M5 Max, rewriting what a laptop can do for AI workloads. OpenAI quietly shipped GPT-5.3 Instant with up to a 26.8% reduction in hallucinations, and Microsoft is betting Windows 12's future on an AI-first modular architecture that could lock out millions of current PCs.

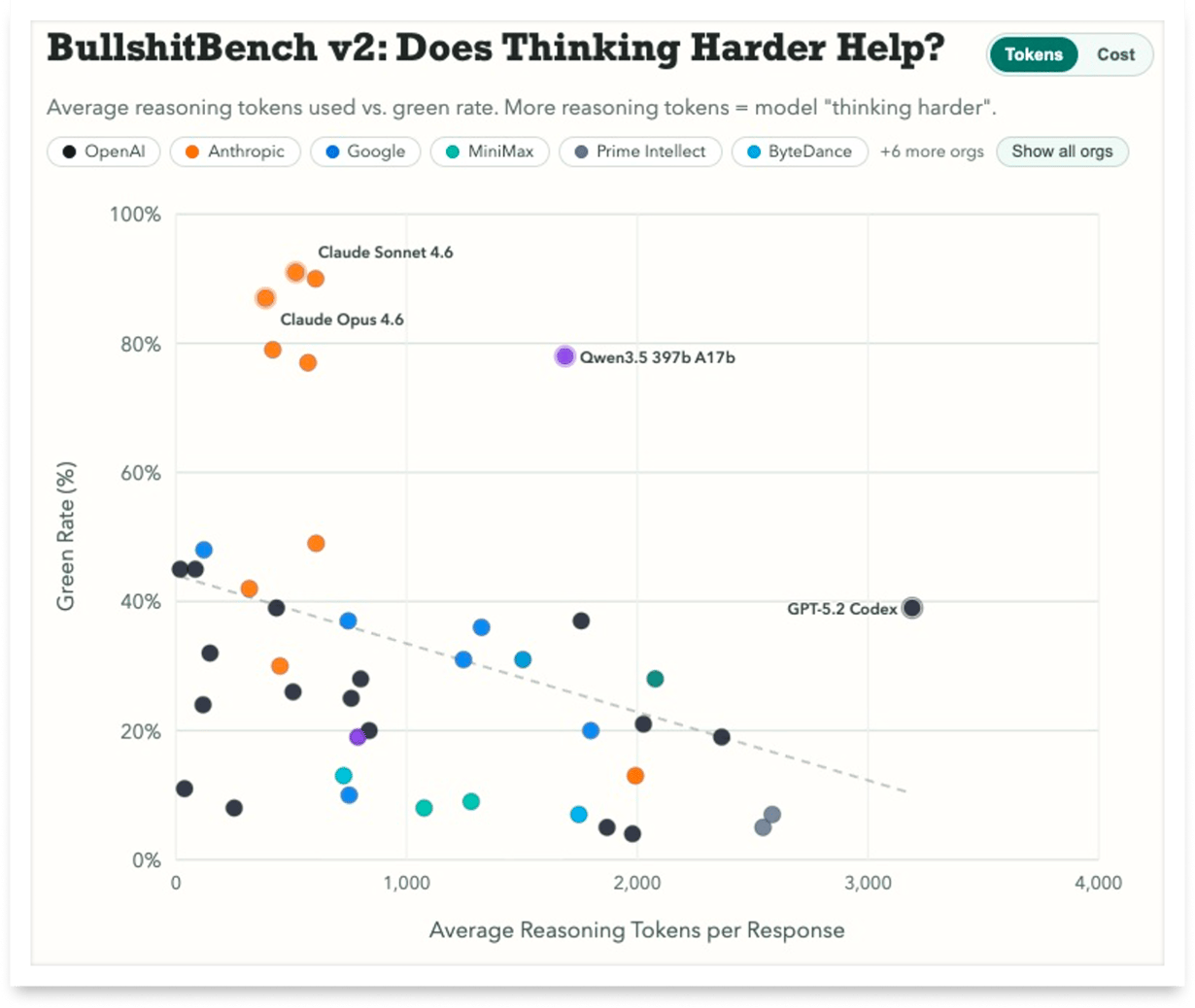

Meanwhile, a brilliantly simple benchmark called BullshitBench v2 reveals an uncomfortable truth: most AI models would rather confidently make things up than simply say "I don't know," and only a handful actually pass the test. Grab your coffee. This one's packed.

In Today’s Issue:

⚡ Google drops Gemini 3.1 Flash-Lite, a blazing-fast new model

💻 Apple merges two 3nm dies to create the M5 Pro and M5 Max

💬 OpenAI quietly ships GPT-5.3 Instant to slash ChatGPT hallucinations by up to 26.8%

🪟 Microsoft prepares AI-driven Windows 12 that could lock out millions of older PCs without a dedicated NPU

🛑 Most top-tier AI models would rather confidently hallucinate than admit they don't know the answer

✨ And more AI goodness…

All the best,

🚀 Apple Unleashes M5 Powerhouse Chips

Apple has introduced the M5 Pro and M5 Max, built on a new Fusion Architecture that merges two 3nm dies into a single SoC, unlocking up to 30% faster CPU performance and over 4x peak GPU compute for AI versus the previous generation. With an 18-core CPU (6 “super cores”), up to a 40-core GPU featuring Neural Accelerators, and unified memory bandwidth reaching 614GB/s, these chips are engineered for serious pro workflows, from 3D rendering to large language models.

For creators, developers, and AI researchers, this means up to 35% better ray-tracing graphics performance, 2.5x faster multithreaded performance than the M1 Pro and M1 Max, and configurations with up to 128GB of unified memory. That turns the new MacBook Pro, available March 11, into a next-level performance machine.

🚀 GPT-5.3 Instant Improves ChatGPT Conversations

OpenAI has released GPT-5.3 Instant, an upgrade to ChatGPT’s most-used model that focuses on smoother conversations, fewer unnecessary refusals, and more accurate responses. The update reduces hallucinations by up to 26.8%, improves web-based answers by better synthesizing search results with the model’s knowledge, and removes overly cautious disclaimers that previously interrupted responses.

Overall, the goal is simple: make everyday interactions feel more natural, relevant, and helpful, with clearer answers, better writing, and a conversational tone that flows more smoothly. OpenAI has already announced the next major update, GPT-5.4.

🚀 Windows 12 AI-Driven Modular OS

Microsoft is reportedly preparing to launch Windows 12 later in 2026, built around a modular CorePC architecture that lets users customize the OS by adding or removing components for different needs, such as gaming or lightweight systems. The new system is expected to focus heavily on AI integration through Copilot, potentially locking advanced AI features behind a subscription model, and to introduce visual upgrades like transparent UI elements and a floating taskbar.

A major catch: Windows 12 may require an NPU (Neural Processing Unit) for AI workloads, which could prevent millions of current PCs from upgrading, especially since standard Windows 10 support ended in October 2025.

Cursor reportedly produced a new solution to "Problem Six" in the First Proof challenge, a research-level math benchmark whose problems are on par with the work of academics at Stanford, MIT, and Berkeley. Exciting times ahead!

Kim was a guest on a podcast again.

It's all in German, but feel free to turn on the subtitles and listen to this exciting podcast!

Google Drops a Speed Monster, And It Costs Almost Nothing

The Takeaway

👉 Google's Gemini 3.1 Flash-Lite delivers 2.5x faster response times and 45% higher output speed than its predecessor at a fraction of the cost. At $0.25 per million input tokens, it is the most affordable model in the Gemini 3 series.

👉 Adjustable thinking levels give developers granular control over the speed-quality tradeoff, enabling cost optimization for high-volume tasks like translation and moderation while preserving reasoning power for complex workloads.

👉 With an Elo score of 1432 and benchmark results surpassing larger previous-generation Gemini models, Flash-Lite signals that the performance gap between "budget" and "premium" AI tiers is collapsing fast.

👉 Early adopters including Latitude, Cartwheel, and Whering report that Flash-Lite handles complex inputs with precision comparable to larger models. Enterprise teams should evaluate whether their current model spend is still justified.

Google just made powerful AI absurdly affordable. The company launched Gemini 3.1 Flash-Lite, the fastest and cheapest model in its entire Gemini 3 lineup, and the benchmarks are turning heads. Priced at just $0.25 per million input tokens and $1.50 per million output tokens, this model delivers performance that used to require models costing eight times as much.
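To put those prices in concrete terms, here is a quick back-of-the-envelope calculator that uses only the two rates quoted above. The workload (10,000 moderation requests at roughly 500 input and 50 output tokens each) is a hypothetical assumption, and real bills depend on factors this sketch ignores, such as how reasoning tokens are counted or future price changes.

```python
# Gemini 3.1 Flash-Lite prices as quoted in this issue (USD per 1M tokens).
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 1.50


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of a workload at the quoted per-token rates."""
    input_cost = (input_tokens / 1_000_000) * INPUT_PRICE_PER_M
    output_cost = (output_tokens / 1_000_000) * OUTPUT_PRICE_PER_M
    return input_cost + output_cost


# Hypothetical workload: 10,000 requests, ~500 input and ~50 output tokens each.
requests = 10_000
cost = estimate_cost(requests * 500, requests * 50)
print(f"${cost:.2f}")  # prints "$2.00"
```

Here 5 million input tokens cost $1.25 and 500,000 output tokens cost $0.75, so the whole batch comes to about two dollars.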

Here's what makes it exciting: Flash-Lite responds 2.5 times faster than its predecessor, Gemini 2.5 Flash, while also producing 45% more tokens per second. That's not an incremental upgrade; it's a generational leap. It scored 1432 Elo on the Arena.ai Leaderboard, beat larger previous-generation Gemini models on reasoning benchmarks, and nailed 86.9% on GPQA Diamond. For a "Lite" model, it punches way above its weight.

What really sets it apart is its adjustable thinking levels. Developers can dial reasoning up or down depending on the task: fast and cheap for content moderation, deeper for complex UI generation. Companies like Latitude, Cartwheel, and Whering are already running it in production.
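One way a team might put adjustable thinking levels to work is a small routing table that picks a reasoning level per task type. This is a minimal sketch, not Google's API: the `ThinkingLevel` names, the task labels, and `pick_thinking_level` are all hypothetical, and the actual parameter values should be checked against the Gemini API documentation before wiring this into real requests.

```python
from enum import Enum


class ThinkingLevel(Enum):
    """Hypothetical reasoning levels; the real API values may differ."""
    LOW = "low"
    HIGH = "high"


# Hypothetical routing table: fast, cheap settings for high-volume tasks,
# deeper reasoning for complex generation work.
TASK_LEVELS = {
    "moderation": ThinkingLevel.LOW,
    "translation": ThinkingLevel.LOW,
    "ui_generation": ThinkingLevel.HIGH,
}


def pick_thinking_level(task: str) -> ThinkingLevel:
    """Return the configured level for a task, defaulting to LOW for unknown tasks."""
    return TASK_LEVELS.get(task, ThinkingLevel.LOW)


for task in ("moderation", "ui_generation", "summarization"):
    print(task, "->", pick_thinking_level(task).value)
```

Defaulting unknown tasks to the cheap level keeps costs predictable; deeper reasoning then becomes an explicit opt-in per task rather than something that happens by accident.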

Why it matters: Google's Flash-Lite proves that cutting-edge AI performance no longer requires cutting-edge budgets. This shift toward affordable, high-speed intelligence at scale could fundamentally reshape how startups and enterprises approach AI deployment, making sophisticated AI accessible to teams and use cases that were previously priced out.

Sources:

🔗 https://blog.google/innovation-and-ai/models-and-research/gemini-models/gemini-3-1-flash-lite/


AI Models Can't Say "No"

Most AI models have a people-pleasing problem, and now there's data to prove it. BullshitBench v2, created by Peter Gostev, is a benchmark that does something refreshingly different: it tests whether AI models can detect and reject nonsensical prompts instead of confidently rolling with them. Think of it as a BS detector for AI.

The v2 update adds 100 new questions across five domains (coding, medical, legal, finance, and physics) and tests more than 70 model variants. The questions are cleverly designed to sound authoritative while being completely made up. Imagine asking a model about measuring "milliempathies" in nurses or calculating the "activation energy" of a non-compete clause. Sounds legit, right? That's the trap.

The results are striking: only Anthropic's Claude models and Alibaba's Qwen 3.5 score meaningfully above 60% on nonsense detection. OpenAI and Google? Stuck, and not improving. Even more surprising: reasoning models that "think harder" actually perform worse. They use their extra compute to rationalize the nonsense rather than reject it.

BullshitBench v2 is one of the few benchmarks where most models are not getting better over time, exposing a fundamental gap between appearing smart and being reliable.
