In Today’s Issue:

📱 Codex comes to ChatGPT mobile

🧬 Anthropic puts $200M behind public-interest AI

⚖️ OpenAI and Apple edge toward a platform fight

🛡️ Supply-chain malware hits OpenAI employee devices

📊 The cost of AI data centers

✨ And more AI goodness…

⚡ The Signal

AI is moving from app to operating rhythm.

The biggest moves today are not about new models. They are about where AI shows up and who gets to use it. OpenAI is embedding Codex into the mobile loop so developers never leave the agent workflow. Anthropic is betting $200 million that AI should reach health workers and smallholder farmers, not just SaaS buyers. And behind the scenes, the Apple partnership that was supposed to bring ChatGPT to billions is fraying into legal memos. The pattern is clear: the race is no longer about who builds the smartest model. It is about who turns capability into daily infrastructure, and whose distribution bet actually holds.

All the best,

Kim Isenberg

🧬 Anthropic and Gates Foundation Commit $200M to Public-Interest AI

Anthropic and the Bill & Melinda Gates Foundation announced a four-year, $200 million partnership targeting global health, education, and economic mobility. Anthropic contributes Claude credits and engineering support; the Gates Foundation provides grant funding. The work spans vaccine screening for polio and HPV, literacy apps in sub-Saharan Africa and India, agricultural tools for smallholder farmers, and new public-goods datasets for African languages where most AI models still fall short.

👉 tl;dr: Anthropic is spending real resources to prove that frontier AI can serve populations that market economics alone will not reach. The $200M dwarfs OpenAI's $50M Gates deal from January.

⚖️ OpenAI Prepares Legal Options Against Apple

Bloomberg reports that OpenAI's lawyers are working with an outside firm on options that could include a breach-of-contract notice to Apple. The two-year-old partnership, which wove ChatGPT into Siri and Apple Intelligence, reportedly fell short of OpenAI's subscriber and integration expectations. Apple is now opening iOS to Gemini, Claude, and other models ahead of WWDC, while OpenAI is simultaneously at trial against Elon Musk and navigating tensions with Microsoft.

👉 tl;dr: The deal that was supposed to give ChatGPT a billion-device distribution channel is unraveling. OpenAI may send a legal notice, but both sides still hope to resolve it outside of court.

🛡️ Supply-Chain Malware Hits OpenAI Employee Devices

OpenAI confirmed that two employees' devices were compromised through the TanStack npm supply-chain attack, part of the broader Shai-Hulud malware campaign. Attackers published 84 malicious package versions in a six-minute window, targeting credentials and code-signing certificates. OpenAI says no user data or production systems were accessed, but it is rotating macOS certificates as a precaution, requiring app updates before June 12. Microsoft and Mistral AI reported similar incidents from the same campaign.

👉 tl;dr: OpenAI contained the damage, but the broader supply-chain problem is accelerating. If the malware had landed a few minutes later or on different machines, the outcome could have been far worse.
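For teams wondering whether they pulled a bad release, one common first step is to compare the versions pinned in a lockfile against a published advisory list. The sketch below assumes an npm `package-lock.json` in the v2/v3 layout (a top-level `packages` map keyed by install path). The package names, versions, and the `FLAGGED` list are hypothetical placeholders, not the actual packages from this campaign.

```python
def package_name(lock_key: str) -> str:
    """Turn a lockfile path key into a package name.

    "node_modules/a/node_modules/@scope/b" -> "@scope/b"
    """
    return lock_key.rpartition("node_modules/")[2]


def find_flagged(lock: dict, flagged: dict) -> list:
    """Return sorted (name, version) pairs that appear in the flagged list."""
    hits = []
    for key, meta in lock.get("packages", {}).items():
        if not key:  # the "" key is the root project itself
            continue
        name = package_name(key)
        version = meta.get("version")
        if version in flagged.get(name, set()):
            hits.append((name, version))
    return sorted(hits)


# Hypothetical advisory data: replace with the versions named in a real advisory.
FLAGGED = {"@example/router": {"1.2.3", "1.2.4"}}

# Stand-in for json.load(open("package-lock.json")).
example_lock = {
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "my-app", "version": "0.1.0"},
        "node_modules/@example/router": {"version": "1.2.3"},
        "node_modules/left-pad": {"version": "1.3.0"},
        "node_modules/left-pad/node_modules/@example/router": {"version": "1.2.0"},
    },
}

print(find_flagged(example_lock, FLAGGED))
```

A match only tells you a flagged version was pinned, not that it ran. Treat any hit as a reason to rotate credentials and follow the vendor's guidance.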

Use AI to turn a tool you already pay for into a workflow you actually use.

Why it helps: Today's issue shows AI moving into phones, health systems, and legal disputes. The common thread is integration: the value is not in the tool itself, but in whether it connects to how you already work. Most people pay for apps and services they barely touch. AI can close that gap.

Try this: "I pay for [tool/subscription/app]. List the three features I am probably not using, explain what each one actually does in plain language, and give me a one-sentence instruction for trying each one this week."

🎬 Watch This

Codex for the Workplace: AI Agents Beyond Coding

Why it’s worth your time:

OpenAI's Thibault Sottiaux, head of Codex, explains how the tool is expanding beyond software engineering into research, planning, data analysis, and presentations. This is the clearest signal that OpenAI sees Codex as a general-purpose work agent.

Best bit:

The discussion of what happens when non-developers start using an agent originally built for engineers, and how that changes team workflows.

Watch if you care about:

AI agents / knowledge work / Codex beyond coding / enterprise adoption

"Apple has so much market power that they can dictate terms. We already took this leap of faith with you, and it didn't work out well."

Apple reportedly plans to let iOS 27 users choose from multiple AI models, including Anthropic Claude and Google Gemini alongside ChatGPT, according to Bloomberg. Gemini is expected to power a revamped Siri at WWDC. OpenAI was reportedly offered participation in the multi-model framework but declined, citing its experience with the original partnership. The move would make Apple's AI layer a marketplace rather than a single-vendor feature, which changes the distribution math for every frontier lab.

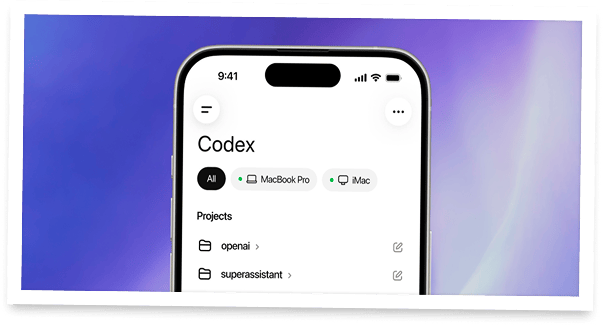

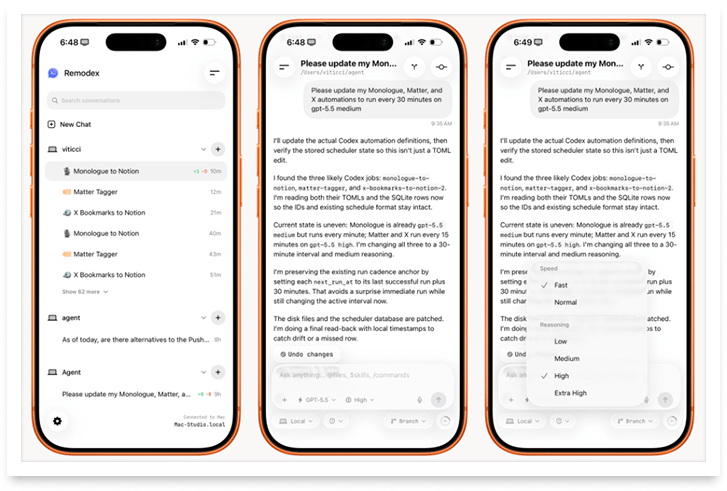

Codex Goes Everywhere

The Takeaway

👉 OpenAI ships Codex inside the ChatGPT mobile app on iOS and Android, at no extra cost on all plans, including Free and Go.

👉 The mobile app connects to any machine where Codex runs, loading live state including threads, plugins, and project context.

👉 Remote SSH goes generally available, letting enterprises connect Codex to managed environments with approved dependencies and security policies.

👉 Codex now has more than 4 million weekly users, up from 2 million in March.

OpenAI is turning its coding agent into a continuous, multi-device workflow. The Codex mobile preview, announced Thursday, lets developers monitor running tasks, approve agent actions, review diffs, start new prompts, and switch models from their phone. The app syncs with whatever machine Codex is running on: a laptop, a Mac mini, a cloud devbox, or a remote enterprise environment.

The design philosophy is clear: coding agents increasingly run long background tasks, and developers need to step in at short, unpredictable moments. Keeping them tethered to a desk for those moments does not scale. The mobile app is OpenAI's fix for that workflow gap, and by making it available to every plan including the free tier, the company is optimizing for adoption breadth rather than revenue gating.

The enterprise side is just as important. Remote SSH is now generally available, which means Codex can connect to managed machines with pre-approved dependencies, credentials, and security policies. OpenAI also shipped programmatic access tokens for CI pipelines and release automation, plus expanded HIPAA-eligible access. Together, these moves turn Codex from a developer tool into an infrastructure layer that sits inside the enterprise security perimeter.

Why it matters: Coding agents are moving from experimental tool to operational infrastructure. When an agent runs continuously across devices and environments, with enterprise-grade access controls and audit trails, it stops being a productivity feature and starts becoming a platform play. The race is now about who builds the deepest integration into how teams already work.

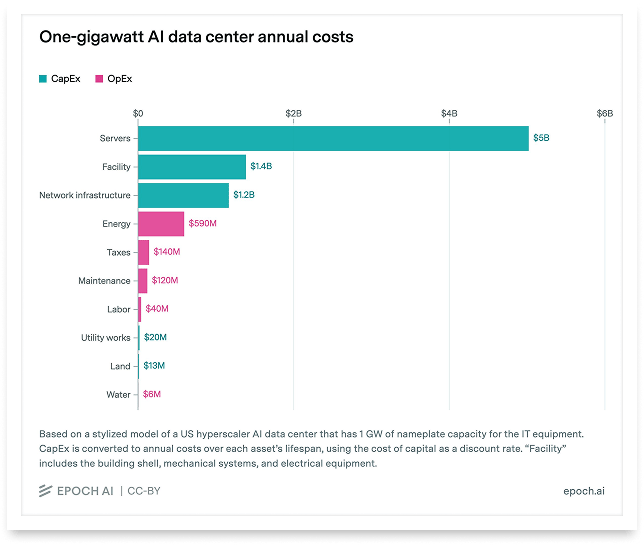

The cost of AI data centers

The chart: Epoch AI tracks five hyperscaler campuses on track to reach 1 GW of facility power in 2026. xAI's Colossus 2 targets gigawatt scale within 12 months; AWS's Project Rainier, built for Anthropic, appears likely to hit the mark first. Total AI data center power reached roughly 30 GW by late 2025.

The lesson: Build timelines are compressing from years to months. At roughly $29 billion per gigawatt, the companies that secure power and permitting fastest are building structural advantages that are hard to replicate.

The caveat: Planned timelines, not confirmed completions. Satellite imagery and permit data constrain precision, and some campuses may include non-AI workloads in their totals.
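To put the per-gigawatt figure in perspective, here is a back-of-the-envelope sketch. It uses only the numbers quoted above, and it assumes the roughly $29 billion per gigawatt rate applies uniformly to each of the five tracked 1 GW campuses, which is a simplification rather than anything the chart itself claims.

```python
# Figures quoted in the chart notes above; combining them this way is an assumption.
COST_PER_GW_BN = 29   # "roughly $29 billion per gigawatt"
CAMPUS_GW = 1         # each tracked campus targets 1 GW of facility power
TRACKED_CAMPUSES = 5  # campuses Epoch AI tracks


def build_cost_bn(power_gw, cost_per_gw_bn=COST_PER_GW_BN):
    """Return an estimated build cost in billions of dollars."""
    return power_gw * cost_per_gw_bn


per_campus_bn = build_cost_bn(CAMPUS_GW)
all_tracked_bn = build_cost_bn(CAMPUS_GW * TRACKED_CAMPUSES)

print(f"One 1 GW campus: ~${per_campus_bn}B")
print(f"All five tracked campuses: ~${all_tracked_bn}B")
```

Under those assumptions, a single campus comes to about $29 billion and the five together to about $145 billion. Real costs will vary by site, hardware mix, and power arrangements.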

Anthropic Lays Out the US-China AI Race in Two Scenarios

⚡ Bottom line: Anthropic published a policy paper arguing that the US must lock in a 12- to 24-month AI lead over China by 2028, when transformative systems are expected to arrive.

💡 Why it matters: The paper frames AI competition not as a market question but as a governance question: whoever sets the frontier shapes the rules for how AI is deployed, including whether it enables automated repression or democratic accountability.

🔎 What it means: Anthropic is making an explicit geopolitical case for tighter export controls, disrupting distillation attacks, and accelerating democratic AI adoption, while also calling for safety dialogue with Chinese researchers.

The paper is unusually direct for an AI lab. It names DeepSeek models deployed by the PLA, cites CAISI evaluations showing Chinese models comply with 94% of malicious requests under jailbreaking, and argues that open-weight releases from Chinese labs create uncontrollable proliferation risks. Anthropic also stresses it supports safety engagement with China's AI community, but only from a position of strength.

Anthropic says time is running out. By 2028, it expects models that surpass anything seen so far, and by then, it argues, Western democracies must have proven their approach superior to China's. The clock is ticking.

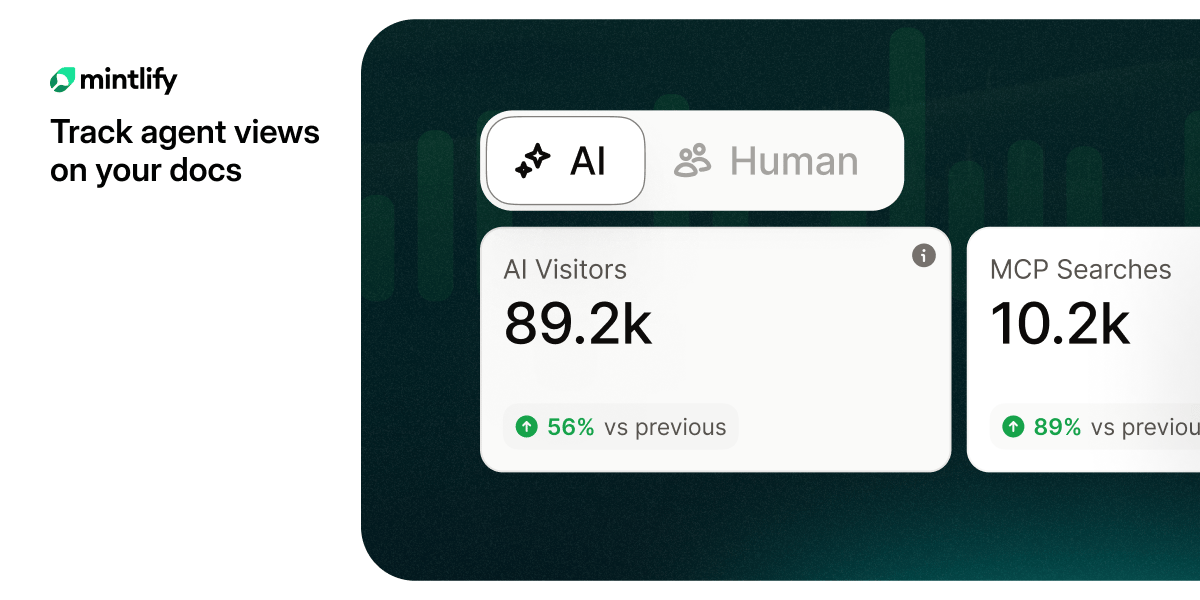
