In Today’s Issue:

🕵️ Anthropic exposes a massive, coordinated AI model theft by Chinese labs

💻 A U.S. official confirms that DeepSeek trained its latest model on smuggled Nvidia Blackwell chips

🤖 Australian robot swarms are fighting fires with near-perfect accuracy

🚨 DeepMind's Demis Hassabis warns that AI safety research is failing

✨ And more AI goodness…

Dear Readers,

Today, Anthropic pulled back the curtain on something the AI industry has whispered about for months: coordinated, industrial-scale theft of frontier model capabilities by Chinese labs. DeepSeek, Moonshot AI, and MiniMax reportedly ran 24,000 fake accounts and 16 million extraction queries against Claude. The uncomfortable twist is that Anthropic itself recently settled a $1.5 billion copyright suit for training on pirated books, so the moral lines here are anything but clean.

Speaking of DeepSeek, the hits keep coming: a senior U.S. official confirmed the company trained its latest model on Nvidia's banned Blackwell chips smuggled into an Inner Mongolia data center, while markets are already bracing for a "DeepSeek Part Two" shock that could rattle the Nasdaq just as it did in January 2025.

On a brighter note, robot swarms in Australia are putting out fires with a 99.67% success rate, and a tiny San Francisco startup called Standard Intelligence just proved that AI can learn to use computers by watching 11 million hours of screen recordings. Meanwhile, Demis Hassabis is sounding the alarm on AI safety research that isn't moving fast enough. Grab your coffee and dig in.

All the best,

🔥 Robot Swarm Masters Firefighting

AI-powered firefighting robots in Australia achieved a stunning 99.67% success rate in early trials, autonomously navigating obstacles and extinguishing multiple fires using multi-agent reinforcement learning (MARL). Developed by Cyborg Dynamics Engineering and Griffith University, the system trains robots through a three-stage curriculum, progressing from solo navigation to fully coordinated swarm firefighting.

The breakthrough means safer fire response, reduced risk to human crews, and faster, sensor-driven decisions in high-risk zones like mines, paving the way for autonomous ground, aerial, and even underwater emergency swarms.

📉 DeepSeek’s New Model Shakes Markets

China’s DeepSeek is preparing to launch a new AI model (DeepSeek v4), and markets are bracing for turbulence, especially on the Nasdaq. When the company unveiled its open-source model in January 2025, the Nasdaq plunged 3%, Nvidia dropped nearly 17%, and the VanEck Semiconductor ETF (SMH) sank almost 10%, highlighting how disruptive low-cost, high-performance AI breakthroughs can be to U.S. tech dominance narratives.

With Nvidia earnings looming, Trump’s evolving tariff agenda, and geopolitical tensions in play, investors face a volatile mix. While JPMorgan maintains a tactically bullish stance on megacap tech, a “DeepSeek Part Two” shock could again rattle chipmakers and AI-linked stocks, making this a high-stakes moment for markets.

🚨 DeepSeek Trained On Banned Chips

Chinese AI startup DeepSeek reportedly trained its upcoming model on Nvidia’s top-tier Blackwell chips, despite U.S. export controls banning their shipment to China. A senior U.S. official said the chips were likely clustered in an Inner Mongolia data center and that DeepSeek may attempt to erase technical traces of their use, raising fresh national security and compliance concerns.

The revelation intensifies Washington’s AI chip battle, as policymakers debate whether allowing sales of slightly downgraded H200 chips could strengthen or restrain China’s AI ambitions, especially given DeepSeek’s prior market-shaking models and alleged reliance on “distillation” from leading U.S. AI firms.

Urgent research needed to tackle AI threats, says Demis Hassabis

Anthropic Exposes Industrial-Scale AI Theft

The Takeaway

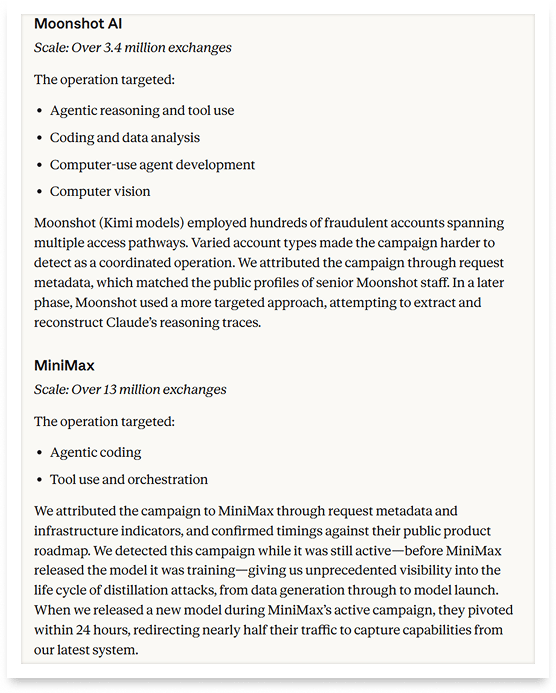

👉 Anthropic identified coordinated distillation campaigns by DeepSeek, Moonshot AI, and MiniMax that generated over 16 million exchanges through 24,000 fake accounts, the most detailed public evidence of industrial-scale AI capability theft to date.

👉 Distilled models lack the safety guardrails built into frontier systems, creating direct national security risks, from offensive cyber operations to mass surveillance by authoritarian regimes.

👉 The rapid "progress" of Chinese AI labs is partly built on capabilities extracted from American models, reinforcing the case for export controls rather than undermining it.

👉 No single company can defend against this alone. Anthropic is calling for coordinated action across AI labs, cloud providers, and policymakers to close the proxy loopholes and strengthen verification systems.

Anthropic just dropped a bombshell that shakes the entire AI industry to its core. The company revealed that three Chinese AI labs (DeepSeek, Moonshot AI, and MiniMax) ran coordinated, industrial-scale campaigns to steal Claude's capabilities. How? Through a technique called "distillation," where a weaker model learns by copying the outputs of a stronger one. Think of it as an AI student secretly photographing every answer on the smartest kid's exam, 16 million times, across 24,000 fake accounts.
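For readers who want to see the idea in miniature, here is a toy sketch of distillation. It is not how any of the labs named above operate: the `teacher` function, the linear student, and the query list are all made-up stand-ins, chosen only to show that a student can recover a model's behavior from its answers alone.

```python
def teacher(x: float) -> float:
    """Hypothetical stand-in for the stronger model; the student never sees this formula."""
    return 3.0 * x + 2.0


def distill(queries: list[float]) -> tuple[float, float]:
    """Fit a linear student (slope, intercept) to the teacher's answers by least squares."""
    answers = [teacher(x) for x in queries]  # the only access the student has
    n = len(queries)
    mean_x = sum(queries) / n
    mean_y = sum(answers) / n
    var_x = sum((x - mean_x) ** 2 for x in queries)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(queries, answers))
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Five queries are enough for this toy; real campaigns reportedly used millions.
slope, intercept = distill([0.0, 1.0, 2.0, 3.0, 4.0])
student_answer = slope * 10.0 + intercept  # prediction on an input the student never queried
```

The student ends up matching the teacher on inputs it never asked about, which is the whole point: the answers leak the capability.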

The numbers are staggering. MiniMax alone racked up over 13 million exchanges, Moonshot hit 3.4 million, and DeepSeek generated 150,000, all targeting Claude's crown jewels: agentic reasoning, coding, and tool use. These labs used sprawling proxy networks to bypass Anthropic's China access restrictions, with a single proxy managing over 20,000 fraudulent accounts simultaneously. When Anthropic released a new model, MiniMax pivoted within 24 hours to extract its capabilities. OpenAI and Google have reported similar attacks on their own models.

But here's where it gets uncomfortable: Anthropic's moral high ground isn't exactly solid rock. The company settled a landmark $1.5 billion copyright lawsuit last year after a court found it had downloaded over seven million pirated books from sites like LibGen and Pirate Library Mirror to train Claude. And that's not all: music publishers led by Universal Music Group and Concord filed a fresh $3 billion lawsuit in January 2026, accusing Anthropic of illegally torrenting more than 20,000 copyrighted songs.

Critics are quick to point out the irony: a company calling out others for stealing its AI outputs built its own models, at least in part, on content it didn't have the right to use. That tension makes this story far more nuanced than a simple good-vs-evil narrative, and the AI community should pay attention to both sides.

Why it matters: Illicit distillation lets foreign competitors leapfrog years of research and billions in investment overnight, while stripping away the safety measures designed to prevent AI misuse. This revelation fundamentally challenges assumptions about how quickly the global AI gap is really closing and strengthens the case for tighter export controls and industry-wide coordination.

Sources:

🔗 https://www.anthropic.com/news/detecting-and-preventing-distillation-attacks

AI Learns by Watching

A small San Francisco startup just quietly changed the rules for how AI agents interact with computers. Standard Intelligence unveiled FDM-1, a foundation model trained on a staggering 11-million-hour video dataset of screen recordings, making it the first AI that learns computer use the way humans do: by watching.

Here's what makes this special. Until now, building a computer-use agent meant painstakingly teaching AI through annotated screenshots, an approach that is expensive, slow, and limited to a few seconds of context. FDM-1 flips the script entirely. Its custom video encoder compresses nearly two hours of 30 FPS video into just one million tokens, which is 100x more efficient than OpenAI's encoder. The result? An AI that can handle long, complex workflows like 3D modeling in Blender, navigating websites, finding software bugs, and even driving a car in San Francisco after less than one hour of fine-tuning data.
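To put the compression figure in perspective, here is a quick back-of-the-envelope calculation. It assumes exactly two hours of footage as an upper bound for "nearly two hours", so the real per-frame budget would be slightly higher than what this prints.

```python
FPS = 30                  # frame rate quoted above
HOURS = 2                 # assumption: upper bound for "nearly two hours"
TOKEN_BUDGET = 1_000_000  # token count quoted above

seconds = HOURS * 3600
frames = seconds * FPS                       # total frames in the clip
tokens_per_frame = TOKEN_BUDGET / frames     # average token budget per frame
tokens_per_second = TOKEN_BUDGET / seconds   # average token budget per second of video

print(f"{frames:,} frames")
print(f"{tokens_per_frame:.2f} tokens per frame")
print(f"{tokens_per_second:.1f} tokens per second")
```

That works out to 216,000 frames and fewer than five tokens per frame on average, which is why hours of context can fit in one window.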

The secret sauce is a three-stage training recipe. First, an inverse dynamics model learns to label what actions produced specific screen changes, like figuring out that a "K" appeared because someone pressed the K key. Then that model auto-labels the massive video dataset. Finally, FDM-1 trains on those labeled videos to predict the next action. No chain-of-thought reasoning, no screenshots, just raw video and actions at 30 frames per second.
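The three stages can be sketched with a deliberately tiny toy. Everything here is an illustrative assumption, not Standard Intelligence's code: "frames" are plain strings standing in for screen contents, the inverse dynamics step is a hand-written rule rather than a learned model, and the "policy" is a simple frequency table.

```python
from collections import Counter, defaultdict


def inverse_dynamics(before: str, after: str) -> str:
    """Stage 1 (toy): infer the key press that turned `before` into `after`."""
    if after.startswith(before) and len(after) == len(before) + 1:
        return after[-1]
    return "<unknown>"


def auto_label(frames: list[str]) -> list[str]:
    """Stage 2: label every consecutive frame pair with an inferred action."""
    return [inverse_dynamics(a, b) for a, b in zip(frames, frames[1:])]


def train_policy(actions: list[str]) -> dict[str, str]:
    """Stage 3 (toy): predict the next action from the previous one by counting."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for prev, nxt in zip(actions, actions[1:]):
        counts[prev][nxt] += 1
    return {prev: c.most_common(1)[0][0] for prev, c in counts.items()}


# An unlabeled "screen recording": nobody told us which keys were pressed.
frames = ["", "k", "ki", "kiw", "kiwi"]
actions = auto_label(frames)     # inferred key presses, one per frame transition
policy = train_policy(actions)   # maps the last action to the most likely next one
```

The design point the toy preserves is that only stage 1 needs ground-truth actions. Once it can label transitions, any amount of unlabeled video becomes training data for the final model.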

FDM-1 shifts computer action from a data-constrained problem to a compute-constrained one. Just as GPT-3 needed an internet-scale text corpus to unlock language understanding, FDM-1 proves that computer use needs an internet-scale video corpus. And that corpus already exists: millions of hours of coding livestreams, CAD tutorials, and video game playthroughs are sitting on the internet, waiting to be learned from.
