In Today’s Issue:

🕵️ Anthropic accidentally revealed "Claude Mythos" and a powerful new "Capybara" tier that excels in cybersecurity

⚖️ A federal judge temporarily blocked the Pentagon's attempt to blacklist Anthropic

🗣️ Mistral dropped an open-weight voice model that beats ElevenLabs

🌍 Google launched Gemini 3.1 Flash Live

✨ And more AI goodness…

Dear Readers,

Anthropic didn't plan on making news this week, but a forgotten privacy toggle just exposed Claude Mythos, their most powerful model yet, complete with a new "Capybara" tier that reportedly blows past every existing AI system in cybersecurity.

The irony of a safety-first company leaking its own secrets is almost too perfect, and we've got the full breakdown for you today. But that's far from the only headline: a federal judge just blocked the Pentagon's attempt to blacklist Anthropic over its refusal to build autonomous weapons, Mistral dropped an open-weight voice model that's beating ElevenLabs in human preference tests, Google's Gemini 3.1 Flash Live is pushing real-time voice AI into 200+ countries, and scientists may have just shattered the theoretical ceiling on solar energy efficiency.

Plus, we're looking at Symbolica's stunning ARC-AGI-3 benchmark run and xAI's Grok video model gaining serious traction — let's get into it.

All the best,

Mistral's Open-Weight Voice Play 🗣️

Mistral just dropped Voxtral TTS, a 3B-parameter text-to-speech model with open weights, and it's surprisingly competitive. In human preference tests, it beat ElevenLabs Flash v2.5 about 63% of the time on standard voices and nearly 70% on voice cloning.

The model runs on ~3GB of RAM, delivers first audio in 90ms, supports nine languages, and can clone a voice from just five seconds of audio, accent included, even cross-lingually.

The bigger play here is enterprise. Mistral is betting that companies in finance and healthcare would rather own their voice AI than pipe sensitive audio through a third-party API. Voxtral slots into a full speech-to-speech stack alongside Mistral's transcription model, LLMs, and its Forge platform, which makes for a credible one-stop-shop pitch.

Next up: a fully end-to-end audio model that picks up on tone, emotion, and conversational rhythm.

🚀 Gemini Flash Live Elevates Voice AI

Google’s Gemini 3.1 Flash Live introduces faster, more natural real-time voice interactions, with major gains in reasoning, tone recognition, and multilingual support, and availability in 200+ countries. It scores up to 90.8% on complex audio benchmarks and enables longer, more coherent conversations, making it powerful for both developers building voice agents and everyday users.

With built-in SynthID watermarking to detect AI-generated audio, this release pushes voice AI toward more reliable, human-like, and globally accessible experiences. Lower latency makes conversations feel more natural, so I expect voice applications to become more widespread and all of us to spend more time talking to LLMs.

⚡ Solar Efficiency Barrier Finally Broken

Scientists from Kyushu University and Johannes Gutenberg University Mainz have reported a solar-harvesting approach that combines “spin-flip” materials with singlet fission to reach a quantum yield of roughly 130%. That figure counts energy carriers per absorbed photon, not energy conversion efficiency (which cannot exceed 100%), but it points to a route past the long-standing Shockley–Queisser limit. By converting one photon into multiple energy carriers and minimizing losses, this approach could dramatically boost how much sunlight solar cells can harness.
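A figure above 100% makes sense when it counts energy carriers per absorbed photon rather than energy out per energy in. As a rough sketch, assume a fraction $f$ of absorbed photons undergoes singlet fission and yields two carriers each, while the rest yield one. Here $f$ is a hypothetical parameter for illustration, not a number from the study:

$$
\Phi = 2f + (1 - f) = 1 + f, \qquad \Phi \approx 1.3 \;\Rightarrow\; f \approx 0.3, \qquad \Phi_{\max} = 2
$$

Under this simplified model, a yield of about 130% would correspond to roughly three in ten absorbed photons splitting into two carriers. Energy is still conserved, because each of the two carriers holds at most half the energy of the original excitation.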

If scaled into real-world systems, this innovation could redefine solar power, accelerate clean energy adoption, and even unlock new applications in LEDs and quantum technologies.

Symbolica's Agentica SDK just hit 36.08% on the brand-new ARC-AGI-3 benchmark in a single day, at a fraction of what brute-forcing it with a frontier model would cost. What makes this especially striking is the efficiency angle: their agentic approach dramatically outperformed throwing raw compute at the problem. It's a strong early signal that smart orchestration can beat raw model power on novel reasoning tasks.

NVIDIA GTC 2026 Open Models Panel Highlights with Jensen Huang

Claude Mythos: Accidental Drop of the Year

The Takeaway

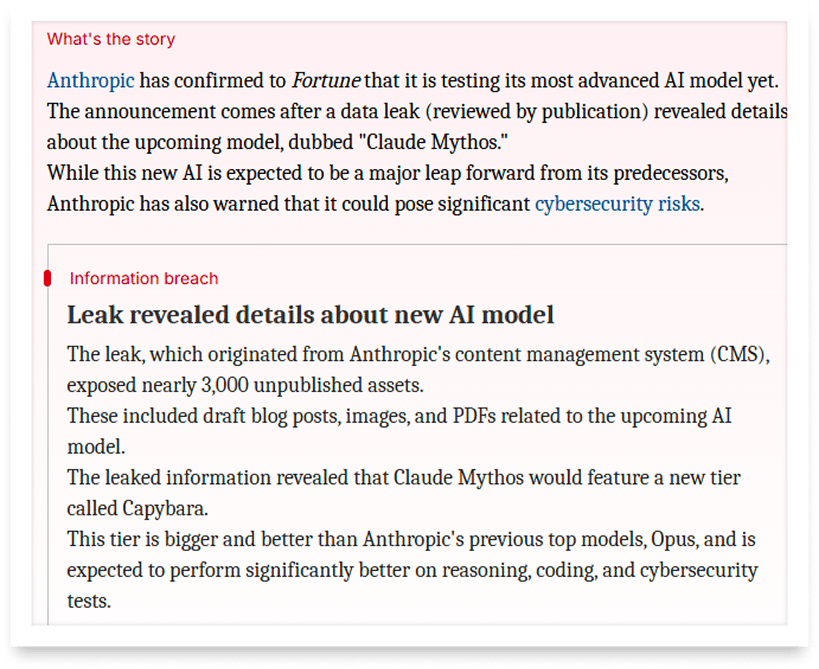

👉 A misconfigured content management system exposed nearly 3,000 unpublished Anthropic assets, including a draft announcing Claude Mythos, which Anthropic has confirmed as a "step change" in AI performance that is currently in early access testing.

👉 Mythos reportedly introduces a new model tier called Capybara, sitting above Opus in capability and cost, with dramatically higher benchmark scores in reasoning, coding, and cybersecurity. The last of these is described internally as surpassing all existing AI systems.

👉 Due to its advanced cyber capabilities, Anthropic plans a highly controlled rollout, initially limited to cybersecurity defenders, signaling the company's own concern about the model's dual-use potential.

👉 The incident exposes a critical irony: a company that champions AI safety and warns of state-sponsored cyberattacks left sensitive internal data accessible via a default public setting. It is a reminder that operational security gaps are a structural risk across the entire industry.

Anthropic didn't mean to make headlines this week, but a forgotten privacy toggle put its next model in the spotlight. The company accidentally left nearly 3,000 unpublished assets sitting in a publicly accessible data store, including what appears to be a draft blog post announcing their next major model: a system called Claude Mythos, described internally as the most capable model Anthropic has ever built, currently being tested with a select group of early access customers.

The leak stemmed from a surprisingly mundane mistake. All assets uploaded to Anthropic's content management system are set to public by default — unless someone explicitly changes that setting. A cybersecurity researcher at Cambridge who reviewed the materials found close to 3,000 blog-linked files that were never meant to be public.
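To make the failure mode concrete, here is a minimal sketch of the opposite design: assets are private unless someone explicitly opts in, and a small audit flags anything public that was never published. The `Asset` record, its field names, and the file paths are hypothetical and do not reflect Anthropic's actual CMS.

```python
from dataclasses import dataclass
from enum import Enum


class Visibility(Enum):
    PRIVATE = "private"
    PUBLIC = "public"


@dataclass
class Asset:
    """Hypothetical CMS asset record; field names are illustrative only."""
    path: str
    published: bool = False
    # Private unless explicitly changed: the reverse of a public-by-default setup.
    visibility: Visibility = Visibility.PRIVATE


def find_exposed_drafts(assets):
    """Return assets that are publicly reachable but were never published."""
    return [
        a for a in assets
        if a.visibility is Visibility.PUBLIC and not a.published
    ]


assets = [
    Asset("blog/launch-draft.md", visibility=Visibility.PUBLIC),  # forgotten toggle
    Asset("blog/released-post.md", published=True, visibility=Visibility.PUBLIC),
    Asset("blog/internal-notes.md"),  # falls back to the private default
]

exposed = find_exposed_drafts(assets)
print([a.path for a in exposed])  # prints ['blog/launch-draft.md']
```

With a private default, forgetting the toggle leaves a draft hidden rather than exposed, and a periodic audit like `find_exposed_drafts` catches the cases where someone flipped it too early.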

What makes this genuinely exciting beyond the drama: Claude Mythos is said to introduce a new model tier called Capybara — positioned above Opus — with dramatically stronger performance in reasoning, coding, and cybersecurity. A draft blog post from Anthropic warned that the model is "currently far ahead of any other AI model in cyber capabilities," and that the company plans to release it first to cyber defenders to help fortify codebases.

The accidental reveal also surfaced details of an invite-only retreat for European CEOs at an 18th-century English manor, where Dario Amodei is set to demo unreleased Claude capabilities.

Why it matters: Anthropic's data leak suggests that the next generation of AI models will be dramatically more capable in cybersecurity, potentially outpacing existing defenses. The fact that even safety-focused AI labs suffer basic operational security failures raises urgent questions about governance, transparency, and the pace of deployment.

Sources:

🔗 https://m1astra-mythos.pages.dev/

🔗 https://www.newsbytesapp.com/news/science/claude-mythos-ai-model-testing-confirmed-by-anthropic/story

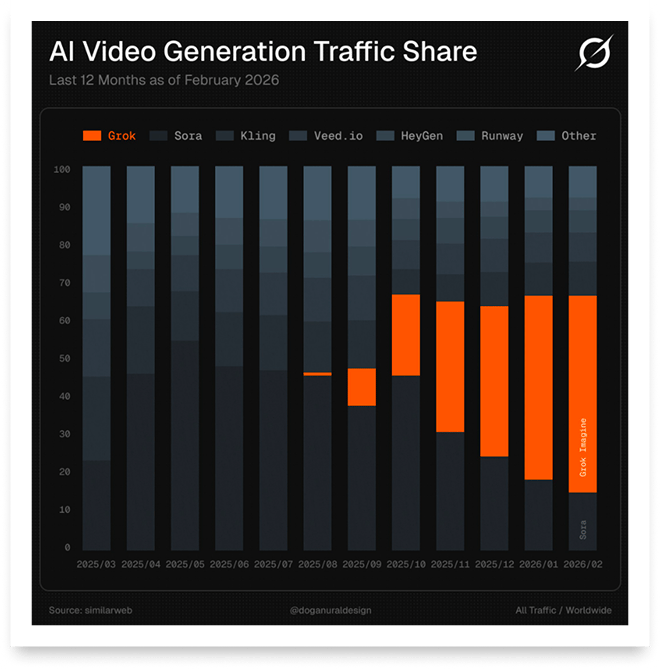

xAI's Grok is gaining popularity, with more people using its video model.

Court Defends Anthropic Against Pentagon

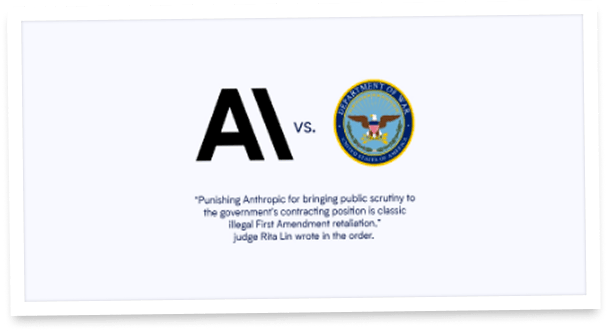

A federal court just drew a line in the sand, and it's one the entire tech industry has been watching. A federal judge in California has temporarily blocked the Pentagon's effort to label Anthropic a "supply chain risk," ruling that the measures ran roughshod over the company's constitutional rights.

(Image: The Pentagon)

A quick recap: Anthropic was blacklisted by President Trump and Defense Secretary Pete Hegseth in February after refusing to allow the Pentagon to use its Claude AI model for autonomous lethal warfare and the mass surveillance of Americans.

The Department of Defense's chief technology officer argued the company's guardrails were "polluting the supply chain" for warfighters. But Judge Rita Lin wasn't buying it. She wrote that the measures "appear designed to punish Anthropic" rather than serve any genuine national security interest, calling it "classic illegal First Amendment retaliation."

Supporters filing legal briefs in Anthropic's favor included Microsoft, industry trade groups, retired U.S. military leaders, and even a group of Catholic theologians.

The ruling is temporary, and a parallel case is still ongoing in a D.C. court, so this fight is far from over. But the underlying question is clear: can the principle of responsible AI development survive in a geopolitical environment that treats safety guardrails as a liability?
