In Today’s Issue:

📉 Nvidia's grip on the Chinese AI chip market has plummeted

🗜️ PrismML successfully compressed an 8.2B-parameter LLM into a 1.15 GB package

🎈 A severe global helium shortage triggered by the Iran conflict threatens the cooling that AI chip manufacturing depends on

🥗 The American Heart Association directly challenges the Trump administration's dietary guidelines

✨ And more AI goodness…

Dear Readers,

Today, Alibaba dropped Qwen3.6-Plus, and the benchmarks tell a story that should make every frontier lab nervous: the gap between Chinese and American AI is shrinking faster than anyone predicted, with real gains in coding, document understanding, and agentic workflows.

But that's just the opener. We're also tracking how Nvidia's iron grip on China's AI chip market has crumbled from 95% to roughly 55%, why a helium shortage triggered by the Iran conflict could quietly choke the entire AI supply chain, and how PrismML just squeezed an 8-billion-parameter model into barely one gigabyte, small enough to run on your phone.

Plus, we've got OpenAI's record-shattering $122 billion raise, the American Heart Association going to war with the Trump administration's nutrition guidelines, and Google finally releasing Gemma 4. Grab your coffee — this one's packed.

All the best,

Kim Isenberg

📉 Nvidia Loses Ground In China

China’s AI chip market is shifting rapidly: domestic players captured a 41% share and shipped 1.65 million GPUs in 2025, cutting Nvidia’s share to roughly 55% from 95% before sanctions. Huawei leads the local surge with nearly 20% market share, fueled by aggressive innovation and government-backed demand for homegrown chips.

Despite still leading technologically, Nvidia faces a tough road ahead as policy pressure and rising domestic competition reshape the market, signaling a long-term power shift in global AI hardware.

🚀 PrismML Shrinks AI Models Even Further

PrismML has introduced its 1-bit Bonsai models, compressing an 8.2B-parameter LLM into just 1.15 GB while maintaining performance comparable to leading 8B models. By focusing on “intelligence density,” the company claims roughly a 10x improvement in capability per GB, enabling fast, efficient AI that can run directly on devices like iPhones, Macs, and GPUs with significantly lower energy use.

The shift opens the door to AI that runs locally, faster, more private, and independent of constant cloud access. With additional 4B and 1.7B models, PrismML is pushing toward a future where powerful AI is embedded across everyday devices, from personal assistants to enterprise systems and robotics.
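
For readers who want to sanity-check the headline numbers, here is a rough back-of-the-envelope sketch. It assumes 1 GB means 10^9 bytes and that the entire 1.15 GB package is weight storage; neither detail is confirmed by PrismML, and the 16-bit baseline is our own comparison point, not theirs.

```python
# Back-of-the-envelope check of the reported Bonsai figures.
# Assumptions (ours, not PrismML's): 1 GB = 10**9 bytes, and the whole
# 1.15 GB package is weight storage.

PARAMS = 8.2e9        # reported parameter count
PACKAGE_GB = 1.15     # reported package size
BYTES_PER_GB = 1e9    # assumption: decimal gigabytes


def bits_per_parameter(package_gb: float, params: float) -> float:
    """Average storage per parameter, in bits."""
    return package_gb * BYTES_PER_GB * 8 / params


def size_gb(params: float, bits: float) -> float:
    """Size in GB of a model stored at `bits` bits per parameter."""
    return params * bits / 8 / BYTES_PER_GB


effective_bits = bits_per_parameter(PACKAGE_GB, PARAMS)
fp16_gb = size_gb(PARAMS, 16)  # hypothetical 16-bit baseline
compression_vs_fp16 = fp16_gb / PACKAGE_GB

print(f"effective bits per parameter: {effective_bits:.2f}")
print(f"same model at 16-bit precision: {fp16_gb:.1f} GB")
print(f"size reduction vs 16-bit: {compression_vs_fp16:.1f}x")
```

Under those assumptions the package works out to a little over one bit per parameter, which fits the "1-bit" label with some overhead. Note that the size reduction against a 16-bit baseline is a storage figure only; it is not the same thing as the roughly 10x "capability per GB" claim, which also depends on how well the model performs.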

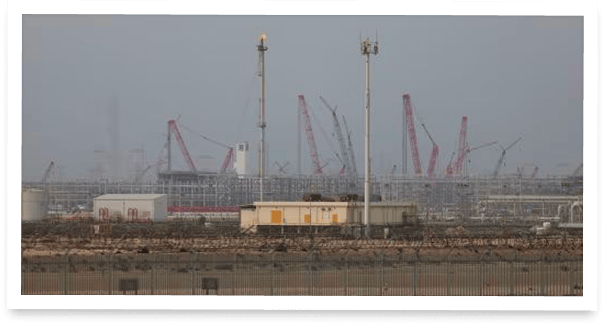

🚨 Helium Crisis Threatens AI Industry

The conflict with Iran has disrupted Qatar’s helium exports, which account for ~33% of global supply, triggering shortages, price spikes (more than 2×), and supply rationing across industries. Helium is critical for cooling in AI chip manufacturing, MRI machines, and aerospace systems, and many of these applications have no easy substitute.

With damage potentially taking up to 5 years to repair and shipping through the Strait of Hormuz blocked, the crisis exposes a major vulnerability in global tech infrastructure, forcing countries like South Korea to scramble for alternative supply while companies brace for cascading impacts.

The long-awaited Gemma 4 is finally here. Small models are the future: small language models (SLMs) running at the edge look like the next big thing.

OpenAI President Greg Brockman: AI Self-Improvement, The Superapp Bet, Path To AGI, Scaling Compute, with Alex Kantrowitz

Qwen3.6-Plus Targets Real-World Agents

The Takeaway

👉 Qwen3.6-Plus scores 78.8 on SWE-bench Verified, strong progress from 76.2, but still 2 points behind Claude Opus 4.6 (80.8). The blog compares against last-gen Opus 4.5, not the current flagship.

👉 On Terminal-Bench 2.0, Qwen's 61.6 tops Opus 4.5 but falls well short of Opus 4.6 (74.7) and Gemini 3.1 Pro (78.4), the actual current leaders.

👉 Where Qwen genuinely leads: document understanding (91.2 OmniDocBench), multimodal perception, and several planning benchmarks, strengths that matter for real-world agent workflows beyond pure coding.

👉 The preserve_thinking API feature and 1M context window signal Alibaba is building serious infrastructure for agentic use cases, even if raw benchmark leadership remains with Anthropic and Google.

Alibaba just shipped a model that wants to prove China can compete at the frontier of agentic AI. Qwen3.6-Plus, now live via API, is the Qwen team's most ambitious release yet, and it's making real progress, even if it hasn't reached the top.

The numbers tell an honest story. On SWE-bench Verified, the gold standard for real-world code repair, Qwen3.6-Plus scores 78.8 — a solid jump from its predecessor's 76.2, but still trailing Claude Opus 4.6 at 80.8. Notably, Qwen's own benchmarks compare against Opus 4.5, not the current flagship. On Terminal-Bench 2.0, Qwen claims 61.6 — which topped Opus 4.5's 59.3, but Opus 4.6 has since surged to 74.7, and Gemini 3.1 Pro leads the pack at 78.4. Where Qwen genuinely shines is breadth: it leads on document understanding, scores competitively on math and multilingual tasks, and ships a 1M context window with a new preserve_thinking feature for agentic workflows.

The gap between Chinese and US frontier labs is narrowing noticeably, and the race for the best models is intensifying. It stays exciting!

Why it matters: Alibaba is narrowing the distance to the world's best coding models faster than most expected, putting genuine competitive pressure on pricing, access, and the pace of innovation across the entire AI industry.

Sources:

🔗 https://qwen.ai/blog?id=qwen3.6

Your AI shouldn't grade its own homework.

Claude Code writes beautiful code. So does Codex. But here's the thing: they also think they write beautiful code. And when you ask an AI to review code it just wrote, you get the intellectual equivalent of a student grading their own exam. Shockingly, they always pass.

CodeRabbit CLI plugs into Claude Code and Codex as an external reviewer: a different AI agent, a different architecture, 40+ static analyzers, and zero emotional attachment to the code it's looking at. The agent writes, CodeRabbit reviews, and the agent fixes. Loop until clean.

You show up when there's actually something worth approving.

One command. Autonomous generate-review-iterate cycles. The AI still does the work. It just doesn't get to decide if the work is good anymore.

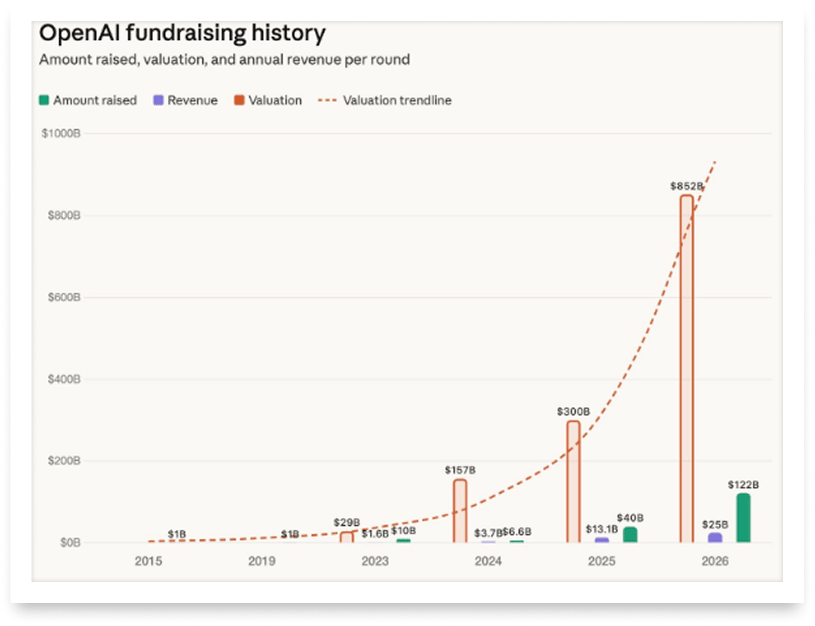

This OpenAI round is unprecedented: $122B raised with strategic megadeals tying Amazon ($50B, plus a $100B AWS partnership), NVIDIA ($30B, securing GPU dominance), and SoftBank ($30B, co-building Stargate), effectively locking in infrastructure, compute, and distribution at massive scale.

AHA Challenges Trump's Nutrition Playbook

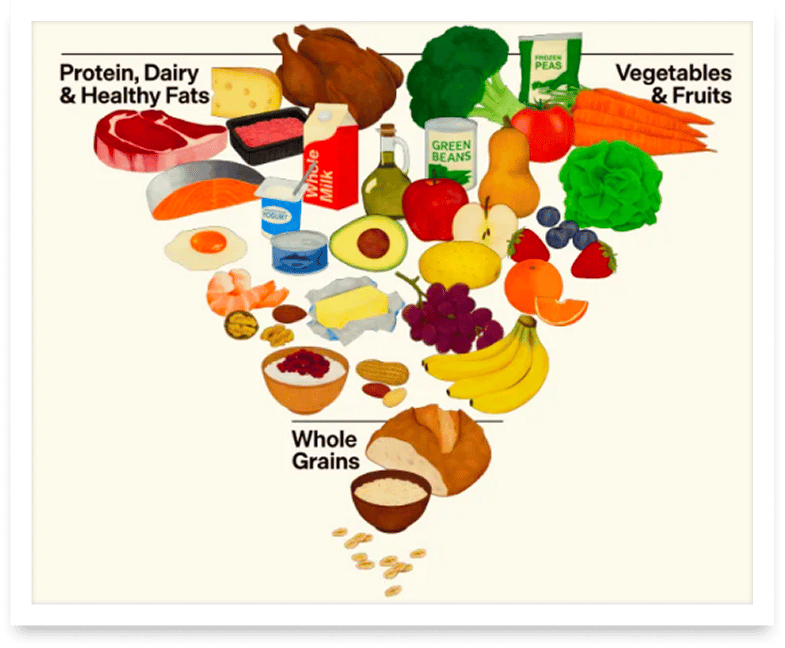

America's most influential heart health organization just drew a line in the sand, and it runs right through the steak on your plate ;) The American Heart Association released its updated 2026 dietary guidance this week, and its message couldn't be clearer: eat more plants, less meat.

The updated recommendations build on the AHA's previous 2021 guidance, now placing even stronger emphasis on plant-based proteins, whole grains, fruits, and vegetables, while urging Americans to swap full-fat dairy for low-fat alternatives and cut back on ultra-processed foods. This puts the nation's oldest heart health organization in direct tension with the Trump administration's dietary guidelines, which prominently feature red meat, full-fat dairy, and beef tallow as recommended choices.

The AHA says that early and consistent adoption of healthy eating patterns could help prevent up to 80% of heart disease and stroke, making this more than a food fight. It's a public health showdown between science-backed medical guidance and politically shaped dietary policy. Cardiovascular disease now affects more than half of American adults, and what gets recommended in schools, hospitals, and federal food programs shapes millions of daily meals.

The AHA's guidance directly contradicts key federal dietary recommendations, creating a split that will influence school meal programs, public health campaigns, and consumer choices. With heart disease remaining America's leading killer, the stakes of this nutritional tug-of-war couldn't be higher.