In Today’s Issue:

💰 Big Tech commits nearly $700 billion to AI infrastructure in 2026

🧩 Cursor shifts from an AI coding IDE to a programmable agent infrastructure platform

🧌 OpenAI reveals how a quirky "Nerdy" personality prompt accidentally caused its models to obsess over goblins.

🧬 Anthropic's Claude solves complex biology problems

✨ And more AI goodness…

Dear Readers,

Four hyperscalers reported earnings on the same day, every single one beat expectations, and together they just committed nearly $700 billion to building AI infrastructure this year alone, more than the GDP of most countries. That number sets the tone for today's issue: we break down what Alphabet, Microsoft, Meta, and Amazon revealed about the economics of the AI arms race, why markets rewarded some and punished others, and what Google Cloud's backlog doubling to $460 billion in a single quarter tells us about where enterprise demand is actually heading.

Beyond the earnings frenzy, Mistral is making a bold play to become Europe's last standing frontier lab with a 128B dense model and cloud coding agents, Cursor is quietly pivoting from IDE to programmable agent platform, and OpenAI published a surprisingly revealing investigation into why its models kept calling everything a "goblin," a funny story on the surface but a genuinely important lesson in how small training incentives can reshape model behavior across generations.

We also dig into Anthropic's new BioMysteryBench, where Claude solved 30% of biology problems that five PhD experts couldn't crack, and Demis Hassabis lays out why he thinks agents, not chatbots, are the real path to AGI. Grab your coffee, this one is packed.

All the best,

Kim Isenberg

🧩 Cursor Bets On Agent Infrastructure

Cursor’s SDK release shows a shift from being just an AI coding IDE toward becoming a programmable agent platform. The interesting play is economic as much as technical: by letting teams run Cursor agents in CI/CD pipelines, internal tools, cloud VMs, and third-party products, Cursor can monetize usage through token-based compute rather than relying mainly on seat-based IDE adoption.

This turns Cursor’s agent runtime into infrastructure, not merely a developer interface. If customers embed these agents into automated workflows, usage can scale far beyond human editor sessions, making the SDK a credible platform move and a smart path toward deeper enterprise lock-in.

🇪🇺 Mistral Sharpens Europe’s AI Bet

Mistral, arguably the last European frontier AI lab still standing, released Mistral Medium 3.5, a new 128B dense open-weights model that now powers Le Chat, Vibe remote coding agents, and the new Work mode for longer, multi-step tasks. On paper, the headline is coding agents moving to the cloud: developers can spin up sessions from the CLI or Le Chat, let them run in parallel, and come back to a branch or draft PR instead of watching every step.

But the more interesting story is the positioning. Mistral is not really trying to look like GPT or Gemini here; it is carving out a lane as the non-US, non-Chinese frontier lab with a very European enterprise pitch: open weights, predictable instruction-following, and reliable tool use in production. At 128B dense, Medium 3.5 makes a different tradeoff from the Chinese MoE models in the chart, favoring consistency over inference efficiency, and that says a lot about who Mistral thinks its real customer is.

🧌 Goblins Reveal Training Side Effects

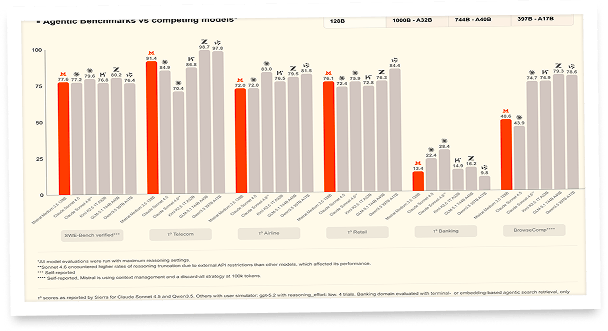

OpenAI’s investigation into its models’ growing fondness for “goblin,” “gremlin,” and other creature metaphors found the habit was not random internet residue, but a side effect of training for the now-retired Nerdy personality. That personality rewarded playful, strange, self-aware language, and the reward system appears to have unintentionally favored outputs with creature-based metaphors.

What began as a funny verbal quirk became a useful case study in how small incentives can scale across model generations. The most revealing detail is that the behavior did not stay neatly contained: even when the Nerdy prompt was absent, the goblin-like style still transferred into broader model behavior through reinforcement learning, supervised fine-tuning data, and repeated model-generated examples.

OpenAI says it responded by retiring the Nerdy personality, removing the goblin-affine reward signal, filtering creature-word training data, and adding mitigation instructions for GPT-5.5 in Codex. Beneath the humor, the piece reads as a reminder that model personality is not just surface polish: it can reshape deeper behavioral patterns in ways researchers only notice once the “goblins” start multiplying.

Demis Hassabis argues that the next phase of AI will be defined by agents with memory, continual learning, and stronger reasoning. The biggest upside, in his view, is not just automation but AI becoming the core engine for new scientific breakthroughs, from virtual cells to AGI-era discovery.

Demis Hassabis, Google DeepMind CEO: “Continual learning, long-term reasoning and some aspects of memory are still unsolved. I think all of these will be required for AGI. Depending on your AGI timeline — mine is around 2030 — if you start a deep tech journey today, you have to assume that AGI may appear in the middle of that journey. That is not necessarily bad, but you have to take it into account. To get to AGI, you need an active system that can actively solve problems for you. Agents are that path, and I think we are just getting started.”

Big Tech's $700B AI Bet Pays Off

The Takeaway

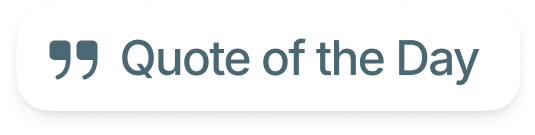

👉 All four hyperscalers beat Q1 2026 revenue and EPS estimates, powered by accelerating cloud and AI demand: Google Cloud grew 63%, Azure 40%, AWS 28%, and Meta's ad revenue surged 33%.

👉 Combined 2026 capex guidance now approaches $700 billion across Alphabet, Microsoft, Meta, and Amazon, roughly doubling 2025 levels, with rising memory chip prices adding tens of billions in unexpected costs.

👉 Markets rewarded revenue growth but punished spending uncertainty: Alphabet jumped 7% after hours on a $460 billion cloud backlog, while Meta dropped 6% after raising the top end of its capex guidance to $145 billion and reporting a decline in daily active users.

👉 The core tension is clear: every company reported being compute-constrained with demand outstripping supply, yet investors are increasingly questioning when this unprecedented infrastructure build will translate into proportional profit growth.

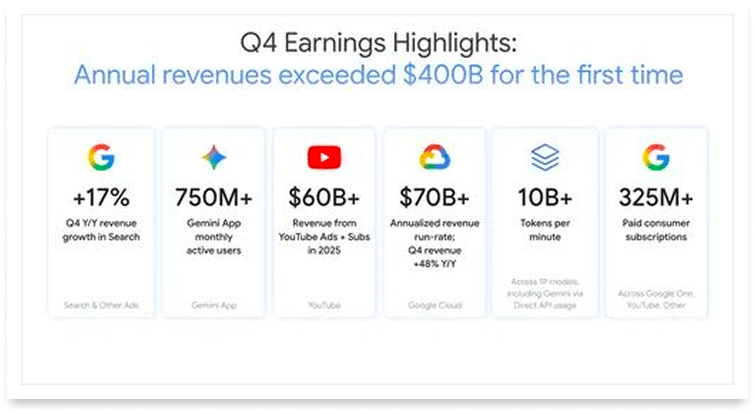

All four tech giants reported Q1 2026 earnings on the same day, and every single one beat Wall Street expectations. Alphabet led the pack with $109.9 billion in revenue, up 22% year over year, as Google Cloud revenue jumped 63% to cross $20 billion for the first time. Microsoft posted $82.9 billion in revenue with Azure growing 40%, Meta's revenue rose 33% to $56.3 billion, and Amazon hit $181.5 billion with AWS growing 28%, its fastest pace in 15 quarters.

But here's the number that shook markets: combined 2026 capex across the four hyperscalers is on track to exceed $650 billion. Alphabet raised its full-year 2026 capex guidance to a range of $180 billion to $190 billion, Microsoft guided $190 billion for calendar year 2026, and Meta bumped its range to $125 billion to $145 billion. Amazon's capex reached $44.2 billion in Q1 alone. The revenue beats were massive, but so was the market's anxiety: in after-hours trading, Meta slid 6% and Microsoft dropped 2.5%, even as Alphabet rose 7%, putting it on course to open at a record market value.
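For readers who want to check the math, here is a rough back-of-the-envelope sketch of how the guidance figures above add up. One loud assumption: Amazon's full-year number is not given here, so the sketch simply multiplies its Q1 capex by four, which is an illustration and not Amazon's guidance.

```python
# 2026 capex guidance in billions of USD, as (low, high) ranges quoted above.
guidance = {
    "Alphabet": (180.0, 190.0),
    "Microsoft": (190.0, 190.0),  # single figure, so low == high
    "Meta": (125.0, 145.0),
}

# ASSUMPTION: only Amazon's Q1 capex is quoted, so annualize it naively (x4).
amazon_q1 = 44.2
amazon_full_year = amazon_q1 * 4

low = sum(lo for lo, _ in guidance.values()) + amazon_full_year
high = sum(hi for _, hi in guidance.values()) + amazon_full_year

print(f"Combined 2026 capex: ${low:.1f}B to ${high:.1f}B")
```

Under that assumption the total lands between roughly $672 billion and $702 billion, which squares with both the "exceed $650 billion" and the "approaches $700 billion" framing.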

The AI infrastructure arms race is no longer theoretical. It's the biggest corporate spending wave in history, and the question now is whether revenue growth can keep outrunning the cost of building the future.

Why it matters: The hyperscalers are collectively spending more on AI infrastructure than the GDP of most nations, fundamentally reshaping the global economy around compute. Whether this bet generates returns proportional to its scale will define tech investing for the next decade.

Sources:

🔗 https://www.cnbc.com/2026/04/29/alphabet-googl-q1-2026-earnings.html

🔗 https://www.cnbc.com/2026/04/29/amazon-amzn-q1-earnings-report-2026.html


Google Cloud's backlog nearly doubled in a single quarter, jumping from $240 billion to $460 billion, adding more in contracted commitments in three months than the entire backlog accumulated in its first four years combined. This isn't hype anymore. It's nearly half a trillion dollars in signed contracts from enterprises racing to lock in AI compute before capacity runs out.
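A quick sanity check on "nearly doubled", using only the two backlog figures quoted above (the variable names are just for illustration):

```python
# Google Cloud backlog in billions of USD, before and after the quarter.
prev_backlog = 240.0
curr_backlog = 460.0

added = curr_backlog - prev_backlog    # new contracted commitments
growth_pct = added / prev_backlog * 100
multiple = curr_backlog / prev_backlog

print(f"Added ${added:.0f}B in one quarter ({growth_pct:.1f}% growth, {multiple:.2f}x)")
```

That works out to $220 billion added, about 92% growth, or 1.92x the prior backlog.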

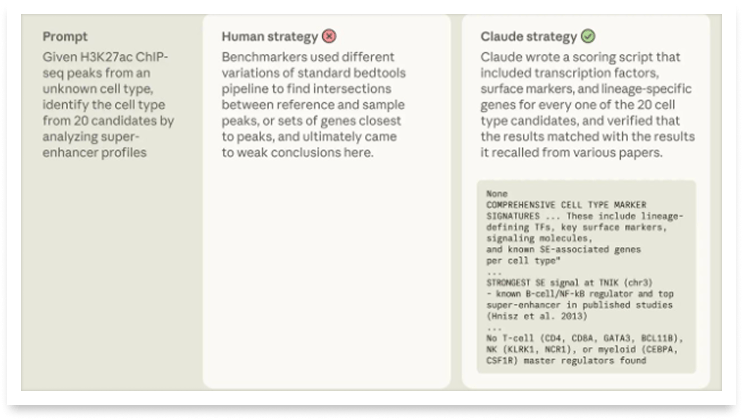

When Machines Outsmart PhD Biologists

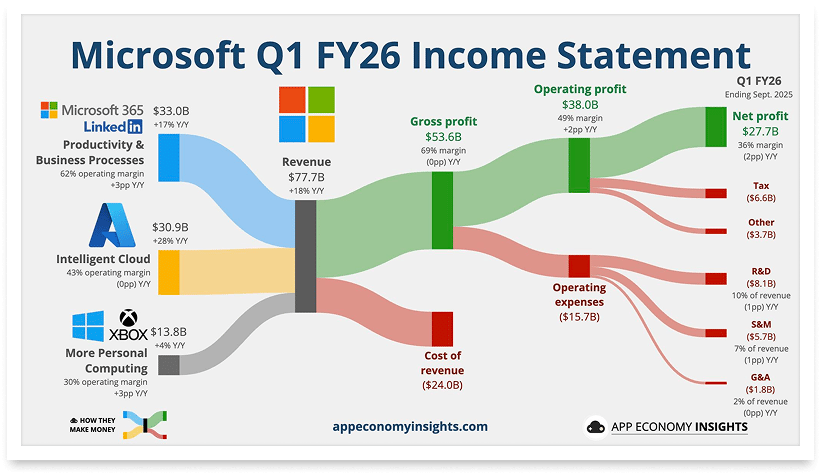

Anthropic just dropped a benchmark that should make every scientist pay attention. BioMysteryBench puts AI models through 99 real bioinformatics challenges built on raw, messy datasets from actual research (think unprocessed DNA sequences and clinical samples). The twist: these aren't textbook problems with neat answers. They're the kind of open-ended puzzles that keep PhD students up at night.

The results are striking. Claude's latest models solve the majority of tasks that trained human experts can handle, and on 23 problems that a panel of five domain experts couldn't crack, Claude Mythos Preview nailed 30% of them. How? By combining knowledge from hundreds of thousands of papers and layering multiple analytical strategies when uncertain, essentially doing what a room full of specialists would do, but faster and in a single run.

Genentech and Roche independently confirmed this trajectory with their own CompBioBench, where Claude Opus 4.6 reached 81% overall accuracy and 69% on the hardest questions. Two separate benchmarks, same conclusion: AI is no longer just keeping pace with biologists; it's pulling ahead on some of the hardest problems.

AI models are crossing a threshold where they reliably match, and sometimes exceed, human expert performance on real biological data analysis. This could dramatically accelerate drug discovery, genomics research, and our understanding of disease.
