In Today’s Issue:

🤑 Meta inks a massive 6-gigawatt deal with AMD to power its next-generation AI infrastructure.

💵 ASML unveils a 1,000W EUV lithography breakthrough.

📈 Qwen 3.5 Medium proves that smarter architecture can beat brute-force scaling with a 7x efficiency leap.

📉 Inception's Mercury 2 shatters speed limits by using diffusion to generate text at 1,000 tokens per second.

✨ And more AI goodness…

Dear Readers,

If 3 billion active parameters can already outperform 22 billion, where exactly does the efficiency curve end? Alibaba's Qwen 3.5 Medium series just rewrote the rules of AI scaling, and it's only the beginning of today's issue.

We're also looking at how ASML is quietly supercharging the global chip supply with a 1,000W EUV breakthrough that could mean 50% more chips by 2030, why Meta is betting gigawatts, literally, on AMD to power its AI future, and how Inception's Mercury 2 is generating text at 1,000 tokens per second using a fundamentally different architecture borrowed from image generators like Midjourney.

Oh, and Anthropic's Claude Code just learned to follow you from your desk to your phone. The AI world moved fast this week, so let's break it all down.

All the best,

🚀 Meta Taps AMD to Power Its AI Future

Meta Platforms has signed a multiyear agreement with Advanced Micro Devices to deploy up to 6 gigawatts of AMD Instinct GPUs, massively scaling its AI infrastructure. Shipments are set to begin in the second half of 2026, built on the Helios rack-scale architecture, as Meta pushes toward personal superintelligence and diversifies beyond a single chip supplier.

This deal strengthens Meta’s compute resilience, integrates silicon-to-software roadmaps, and positions AMD at the center of one of the largest AI infrastructure buildouts globally. For professionals, the key takeaway is clear: the AI arms race is accelerating, and infrastructure scale, measured in gigawatts, is the new competitive benchmark.

🚀 ASML Supercharges Chip Production

ASML has unveiled a breakthrough in its EUV lithography machines, boosting light source power from 600W to 1,000W, a leap that could enable up to 50% more chips by 2030.

The upgrade may allow machines to process 330 wafers per hour (up from 220), cutting chip costs and strengthening ASML’s edge as the sole commercial EUV supplier amid the U.S.-China tech rivalry. With a roadmap toward 1,500W and even 2,000W, ASML is signaling long-term dominance in advanced chip manufacturing.
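For readers who want to check the math, here is a quick back-of-the-envelope sketch. It uses only the figures quoted above (220 and 330 wafers per hour, 600W and 1,000W source power); the function and variable names are illustrative, not anything from ASML.

```python
# Back-of-the-envelope check of the figures quoted above.
# All numbers come from the text; the names are illustrative.

def percent_increase(old: float, new: float) -> float:
    """Return the relative increase from old to new, in percent."""
    return (new - old) / old * 100

wafers_per_hour_now = 220
wafers_per_hour_upgraded = 330
source_power_now_w = 600
source_power_upgraded_w = 1000

throughput_gain = percent_increase(wafers_per_hour_now, wafers_per_hour_upgraded)
power_gain = percent_increase(source_power_now_w, source_power_upgraded_w)

print(f"Throughput gain: {throughput_gain:.0f}%")
print(f"Source power gain: {power_gain:.0f}%")
```

The jump from 220 to 330 wafers per hour works out to the 50% figure, while the light source power itself rises by about 67%, so in these numbers throughput does not scale one-to-one with power.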

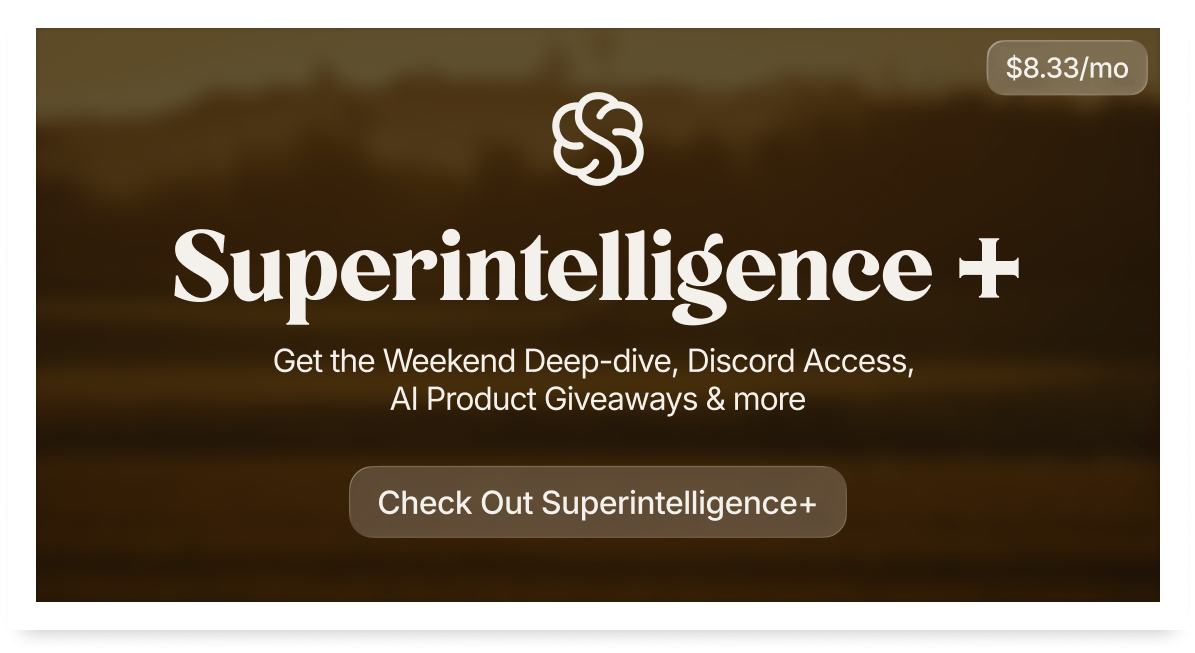

💻 Claude Code launches Remote Control

Anthropic has introduced a new “Remote Control” feature for Claude Code, designed to make AI-assisted coding more flexible across devices.

Developers can now start a task directly from their terminal and continue managing it from their phone, whether they’re commuting, walking between meetings, or away from their desk. Claude continues running locally on the user’s machine, while the session can be monitored and controlled via the Claude mobile app.

The update signals a broader shift toward persistent, cross-device AI workflows, where coding sessions don’t end when you close your laptop, but instead follow you wherever you are.

The AI Tsunami is Here & Society Isn't Ready | Dario Amodei x Nikhil Kamath

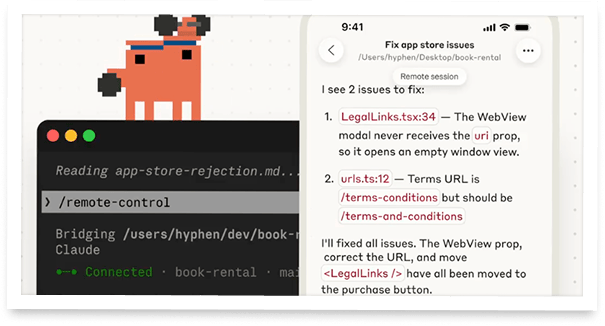

Qwen 3.5 Medium Redefines Efficiency

The Takeaway

👉 Qwen3.5-35B-A3B outperforms its 235B predecessor with just 3B active parameters, roughly 7x fewer than the predecessor's 22B, challenging the industry's obsession with scaling up.

👉 Qwen3.5-Flash ships with 1M context length and native tool support, making it production-ready for agentic workflows without complex RAG setups.

👉 The 122B-A10B and 27B models are closing the gap between open-weight mid-size models and proprietary frontier systems, particularly in multi-step agent tasks.

👉 For teams evaluating AI infrastructure in 2026, this release signals that smarter architecture and RL can deliver frontier performance at a fraction of the compute and cost.

Alibaba just dropped a bombshell on the AI world - and it's not what you'd expect. Instead of going bigger, they went smarter. The new Qwen 3.5 Medium Model Series proves that raw parameter count is no longer king. The star of the show? Qwen3.5-35B-A3B, a model that activates just 3 billion parameters per inference pass - and yet outperforms its own predecessor, the Qwen3-235B-A22B, which relied on 22 billion active parameters.

That's roughly a 7x efficiency improvement, measured in active parameters. Think of it like a sports car that beats a monster truck in a race while burning a fraction of the fuel.
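Where does the 7x come from? A minimal sketch of the arithmetic, assuming the usual reading of the model names (for example, 35B-A3B meaning 35B total parameters with 3B active). The dictionary layout and variable names are just for illustration.

```python
# Active vs. total parameters for the two models named above.
# Figures are read off the model names (e.g. 35B-A3B = 35B total, 3B active),
# which is an assumption about the naming convention, not a benchmark result.

models = {
    "Qwen3.5-35B-A3B": {"total_b": 35, "active_b": 3},
    "Qwen3-235B-A22B": {"total_b": 235, "active_b": 22},
}

new = models["Qwen3.5-35B-A3B"]
old = models["Qwen3-235B-A22B"]

active_ratio = old["active_b"] / new["active_b"]
total_ratio = old["total_b"] / new["total_b"]

print(f"Active parameters: {active_ratio:.1f}x fewer")
print(f"Total parameters:  {total_ratio:.1f}x fewer")
for name, m in models.items():
    share = m["active_b"] / m["total_b"] * 100
    print(f"{name}: {share:.1f}% of parameters active")
```

On these numbers the newer model uses about 7.3x fewer active parameters and about 6.7x fewer total parameters. Note that this only compares parameter counts; it says nothing about benchmark scores, memory use, or real-world latency.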

The series also includes the 122B-A10B and 27B variants, both closing the gap between mid-sized and frontier models, especially in complex agentic workflows. And then there's Qwen3.5-Flash: the production-ready version of the 35B-A3B, shipping with a massive 1-million-token context window and built-in tool support right out of the box. No hacking, no workarounds: just plug and build.

For developers and researchers, this is a paradigm shift. Better architecture, higher data quality, and smarter reinforcement learning are now beating brute-force scaling. So here's the exciting question: If 3 billion active parameters can already outperform 22 billion, where does the efficiency curve go from here?

Why it matters: Alibaba's Qwen 3.5 Medium series demonstrates that the future of AI isn't about stacking more parameters but about using them more intelligently. This shift toward architectural efficiency could make frontier-level AI accessible to far more developers and organizations around the world.

Sources:

🔗 https://x.com/Alibaba_Qwen/status/2026339351530188939

Diffusion Rewrites the Speed Game

The AI world just got a serious wake-up call. Inception's Mercury 2 is here, and it's not playing by the same rules as everyone else.

While every major AI lab (OpenAI, Anthropic, Google) builds models that generate text one token at a time, Mercury 2 does something fundamentally different. Think of it like the difference between painting a portrait stroke by stroke and developing a photo: Mercury 2 starts with a rough sketch of the full answer and refines it in parallel through a process called diffusion, the same technique behind image and video generators like Midjourney and Sora.

The result? A staggering 1,000 tokens per second, compared to roughly 89 tokens per second for Claude 4.5 Haiku and just 71 for GPT-5 Mini. That's not a minor improvement. That's a category leap.
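To put those throughput numbers in terms of waiting time, here is a rough sketch. The tokens-per-second figures are the ones quoted above; the 500-token response length is a hypothetical value picked for illustration, and the calculation ignores time to first token and network overhead.

```python
# Rough generation-time comparison using the throughput figures quoted above.
# RESPONSE_TOKENS is a hypothetical response length chosen for illustration.

TOKENS_PER_SECOND = {
    "Mercury 2": 1000,
    "Claude 4.5 Haiku": 89,
    "GPT-5 Mini": 71,
}
RESPONSE_TOKENS = 500

def generation_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to emit `tokens` at a steady rate, ignoring time to first token."""
    return tokens / tokens_per_second

baseline = TOKENS_PER_SECOND["Mercury 2"]
for model, rate in TOKENS_PER_SECOND.items():
    seconds = generation_seconds(RESPONSE_TOKENS, rate)
    speedup = baseline / rate
    print(f"{model}: {seconds:.2f}s for {RESPONSE_TOKENS} tokens "
          f"(Mercury 2 is {speedup:.1f}x this rate)")
```

Under these assumptions, a 500-token answer takes about half a second at 1,000 tokens per second, versus roughly 5.6 seconds at 89 and 7 seconds at 71, which is a speedup of about 11x and 14x respectively.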

What makes this genuinely exciting for the AI community is that this speed doesn't come from custom chips or hardware tricks: it runs on standard NVIDIA GPUs. The advantage is architectural, meaning it scales wherever AI runs today.

For real-world applications such as real-time voice assistants, multi-step AI agents, or instant coding tools, this kind of responsiveness could finally make AI feel less like a search engine and more like a thought partner.

Mercury 2 challenges the architectural consensus that has dominated AI for years. If diffusion-based models can match quality while delivering more than 10x the speed, the entire inference economics of the industry could shift. For developers and enterprises, this means AI agents and real-time applications that were previously too slow or expensive to deploy could become viable at scale.
